The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

Closing Thoughts

It took a while to get here, but if the proof is in the eating of the pudding, FreeSync tastes just as good as G-SYNC when it comes to adaptive refresh rates. Within the supported refresh rate range, I found nothing to complain about. Perhaps more importantly, while you’re not getting a “free” monitor upgrade, the current prices of the FreeSync displays are very close to what you’d pay for an equivalent display that doesn’t have adaptive sync. That’s great news, and with the major scaler manufacturers on board with adaptive sync the price disparity should only shrink over time.

The short summary is that FreeSync works just as you’d expect, and at least in our limited testing so far there have been no problems. Which isn’t to say that FreeSync will work with every possible AMD setup right now. As noted last month, the initial FreeSync driver that AMD provided (Catalyst 15.3 Beta 1) only allows FreeSync to work with single GPU configurations. Another driver should be coming next month that will support FreeSync with CrossFire setups.

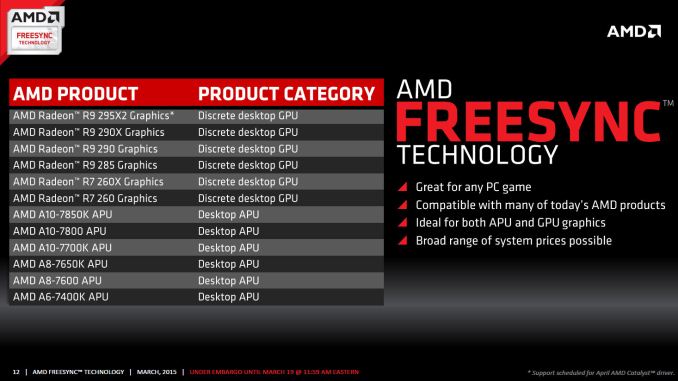

Besides needing a driver and FreeSync display, you also need a GPU that uses AMD’s GCN 1.1 or later architecture. The list at present consists of the R7 260/260X, R9 285, R9 290/290X/295X2 discrete GPUs, as well as the Kaveri APUs – A6-7400K, A8-7600/7650K, and A10-7700K/7800/7850K. First generation GCN 1.0 cards (HD 7950/7970 or R9 280/280X and similar) are not supported.

All is not sunshine and roses, however. Part of the problem with reviewing something like FreeSync is that we're inherently tied to the hardware we receive, in this case the LG 34UM67 display. With an R9 290X running at the display's native resolution, the vast majority of games run at 48 FPS or above even at maximum detail settings, though of course there are exceptions, so they look and feel smooth. But what happens with more demanding games or with lower-performance GPUs? Below 48 FPS, the bottom of this display's FreeSync range, you get tearing if you run without VSYNC and stuttering if you run with it.

Neither is ideal, but how much this impacts your experience will depend on the game and the individual. G-SYNC handles dropping below the minimum FPS more gracefully than FreeSync, though if you're routinely falling below the minimum FreeSync refresh rate, we'd argue that you should lower the settings. Mostly, what FreeSync/G-SYNC gives you is smooth gaming at 40-60 FPS, not just at 60+ FPS.
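To make the stutter-versus-smoothness point concrete, here is a rough simulation of how often the screen updates when a game renders at a steady frame rate. It is only a sketch under stated assumptions: the 48 FPS floor and 75Hz ceiling come from the LG 34UM67 discussed here, while the function names, the "GPU never blocks" (roughly triple-buffered) assumption, and the below-range fallback to a fixed 48Hz refresh with VSYNC are our own simplifications, not documented AMD or NVIDIA behavior.

```python
from fractions import Fraction
from math import ceil


def fixed_refresh_intervals(frame_ms, refresh_hz, n_frames):
    """Gaps (ms) between on-screen updates on a fixed-refresh display with VSYNC.

    Simplified model (an assumption, not measured behavior): the GPU finishes a
    new frame every frame_ms and never blocks (roughly triple buffering), and
    each frame is shown at the first refresh tick at or after it is ready.
    """
    period = Fraction(1000, refresh_hz)
    ticks = set()
    for i in range(1, n_frames + 1):
        ready = frame_ms * i
        # Next vblank at or after the moment this frame is ready.
        ticks.add(ceil(ready / period) * period)
    # A tick that receives several frames still produces only one update.
    shown = sorted(ticks)
    return [later - earlier for earlier, later in zip(shown, shown[1:])]


def adaptive_sync_intervals(frame_ms, min_hz, max_hz, n_frames):
    """Gaps (ms) between updates on an adaptive-sync display (simplified model).

    Inside [min_hz, max_hz] the display refreshes as soon as each frame is
    ready; above max_hz the refresh is capped at max_hz. Below min_hz we
    ASSUME a fallback to a fixed min_hz refresh with VSYNC (hypothetical).
    """
    shortest = Fraction(1000, max_hz)
    longest = Fraction(1000, min_hz)
    if frame_ms > longest:
        # Frame rate below the supported range: fixed-refresh behavior returns.
        return fixed_refresh_intervals(frame_ms, min_hz, n_frames)
    return [max(frame_ms, shortest)] * (n_frames - 1)


def to_ms(intervals):
    """Round exact Fraction intervals to 0.1 ms for display."""
    return [round(float(x), 1) for x in intervals]


if __name__ == "__main__":
    fps50 = Fraction(1000, 50)  # 50 FPS -> a new frame every 20 ms
    # Fixed 60Hz with VSYNC: most frames last one refresh, one lasts two.
    print(to_ms(fixed_refresh_intervals(fps50, 60, 7)))
    # -> [16.7, 16.7, 16.7, 16.7, 33.3, 16.7]
    # 48-75Hz adaptive sync: every frame is held for the same 20 ms.
    print(to_ms(adaptive_sync_intervals(fps50, 48, 75, 7)))
    # -> [20.0, 20.0, 20.0, 20.0, 20.0, 20.0]
    # 45 FPS falls below the 48Hz floor, so an occasional doubled frame returns.
    print(round(float(max(adaptive_sync_intervals(Fraction(1000, 45), 48, 75, 20))), 1))
    # -> 41.7
```

The uneven 16.7/33.3 ms pattern is the stutter described above; with adaptive sync in range, the same 50 FPS produces evenly spaced 20 ms frames. Exact `Fraction` arithmetic is used so that frames landing exactly on a refresh tick are not misplaced by floating-point rounding.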

Other sites are reporting ghosting on FreeSync displays, but that's not inherent to the technology. Rather, it's a display-specific problem (just as the amount of ghosting on normal LCDs is display-specific). The solution is higher quality panels and hardware designed to reduce or eliminate ghosting. The FreeSync displays so far appear not to have the same level of anti-ghosting as the currently available G-SYNC panels, which is unfortunate if true. (Note that we've only looked at the LG 34UM67, so we can't report on all the FreeSync displays.) Again, ghosting shouldn't be a FreeSync issue so much as a panel/scaler/firmware problem, so we'll hold off on further commentary until we get to the monitor reviews.

One final topic to address is something that has become more noticeable to me over the past few months. While G-SYNC/FreeSync can make a big difference when frame rates are in the 40~75 FPS range, beyond that point the benefits are a lot less clear. Take the 144Hz ASUS ROG Swift as an example. Even with G-SYNC disabled, the 144Hz refresh rate makes tearing rather difficult to spot, at least in my experience. LCD pixel response times are not instantaneous, and when you combine that with the way our eyes and brain process the world, I still think that, for all the hype, high refresh rates with VSYNC disabled get you 98% of the way to smooth gaming with no noticeable visual artifacts (at least for those of us without superhuman eyesight).
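A back-of-the-envelope way to see why 144Hz hides tearing: assuming (as a simplification on our part, ignoring pixel response time) that any torn image stays on screen only until the next refresh replaces it, a tear can persist for at most one refresh interval, which is 2.4 times shorter at 144Hz than at 60Hz.

```latex
T_{\text{tear}} \le \frac{1}{f_{\text{refresh}}}:\qquad
\frac{1}{60\ \text{Hz}} \approx 16.7\ \text{ms},\qquad
\frac{1}{144\ \text{Hz}} \approx 6.9\ \text{ms}
```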

Overall, I’m impressed with what AMD has delivered so far with FreeSync. AMD gamers in particular will want to keep an eye on the new and upcoming FreeSync displays. They may not be the “must have” upgrade right now, but if you’re in the market and the price premium is less than $50, why not get FreeSync? On the other hand, for NVIDIA users things just got more complicated. Assuming you haven’t already jumped on the G-SYNC train, there’s now this question of whether or not NVIDIA will support non-G-SYNC displays that implement DisplayPort’s Adaptive Sync technology. I have little doubt that NVIDIA can support FreeSync panels, but whether they will support them is far less certain. Given the current price premium on G-SYNC displays, it’s probably a good time to sit back and wait a few months to see how things develop.

There is one G-SYNC display that I’m still waiting to see, however: Acer’s 27” 1440p144 IPS (AHVA) XB270HU. It was teased at CES and it could very well be the holy grail of displays. It’s scheduled to launch next month, and official pricing is $799 (with some pre-orders now online at higher prices). We might see a FreeSync variant of the XB270HU as well in the coming months, if not from Acer then likely from some other manufacturer. For those who work with images and movies as well as playing games, IPS/AHVA displays with G-SYNC or FreeSync support are definitely needed.

Wrapping up, if you haven’t upgraded your display in a while, now is a good time to take stock of the various options. IPS and other wide viewing angle displays have come down quite a bit in pricing, and there are overclockable 27” and 30” IPS displays that don’t cost much at all. Unfortunately, if you want a guaranteed high refresh rate, there’s a good chance you’re going to have to settle for TN. The new UltraWide LG displays with 75Hz IPS panels at least deliver a moderate improvement though, and they now come with FreeSync as an added bonus.

Considering a good display can last 5+ years, making a larger investment isn’t a bad idea, but by the same token rushing into a new display isn’t advisable either as you don't want to end up stuck with a "lemon" or a dead technology. Take some time, read the reviews, and then find the display that you will be happy to use for the next half decade. At least by then we should have a better idea of which display technologies will stick around.

350 Comments


Keysisitchy - Thursday, March 19, 2015

RIP Gsync. The 'gsync module' was all smoke and mirrors and worthless.

RIP gsync. Freesync is here

imaheadcase - Thursday, March 19, 2015

Not sure why people are bashing Gsync; it's still fantastic. So it puts a little more on the price of the hardware... you are still forgetting the main reason Gsync is better: it works on nvidia hardware, and freesync STILL requires a driver on nvidia hardware, which they have already stated won't happen. If you noticed, the displays they announced don't even take advantage of the specs freesync is capable of. They are the same as, if not worse than, Gsync. Notice the Gsync IPS 144Hz monitors are coming out this month..

imaheadcase - Thursday, March 19, 2015

To add to the above, freesync is not better than Gsync just because it's "open". Costs still trickle down somehow... and that cost is having to stick with AMD hardware when you buy a new monitor. So for all the talk of openness, it is really closed tech... just like Gsync.

iniudan - Thursday, March 19, 2015

Nvidia will someday have to provide freesync to comply with the spec of a more recent DisplayPort version. Right now they are just keeping the older 1.2 version on their GPUs simply so as not to have to adopt it.

dragonsqrrl - Thursday, March 19, 2015

No current or upcoming DP spec (including DP 1.3) requires adaptive sync.

eddman - Thursday, March 19, 2015

Do you mean to say that it's available for 1.3 but optional, or that 1.3 doesn't support Adaptive Sync at all? It does support it in 1.3, according to their FAQ, but the FAQ doesn't say whether it's mandatory.

http://www.displayport.org/faq/#DisplayPort 1.3 FAQs

DanNeely - Thursday, March 19, 2015

It's optional. Outside of gaming it doesn't have any real value, so forcing mass-market displays to use a marginally more complex controller/more capable panel wouldn't add any value.

mutantmagnet - Friday, March 20, 2015

It has value for movies. The screen tearing that can occur would be nice to remove.

D. Lister - Friday, March 20, 2015

Movies can't have screen tearing, because the GPU is just decoding them, not actually generating the visuals like in games.

ozzuneoj86 - Friday, March 20, 2015

That is definitely not true. Tearing happens between the graphics card and the display; it doesn't matter what the source is. If you watch a 24fps movie on a PC connected to a screen running at 60Hz, there is a chance of tearing. Tearing is just less common with movies because their frame rates are usually much lower than the display's refresh rate, so it's less likely to be visible.