The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

FreeSync vs. G-SYNC Performance

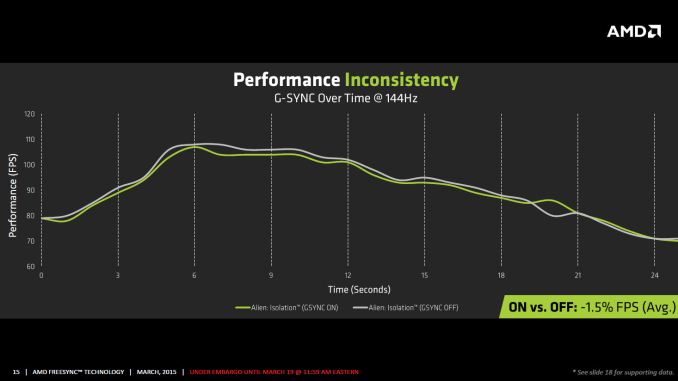

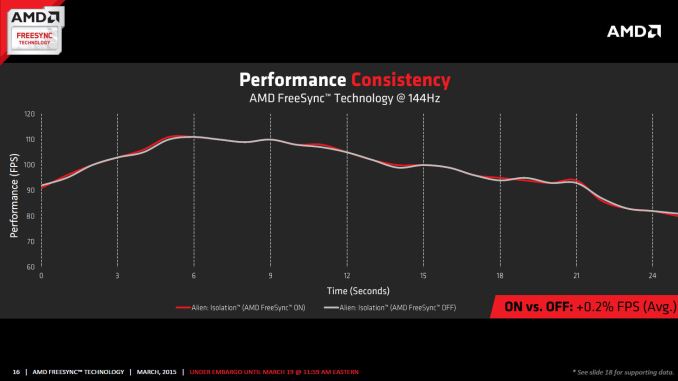

One item that piqued our interest during AMD’s presentation was a claim that there’s a performance hit with G-SYNC but none with FreeSync. NVIDIA has said as much in the past, though they also noted at the time that they were "working on eliminating the polling entirely", so things may have changed. Even so, the difference was generally quite small – less than 3%, or basically not something you would notice without capturing frame rates. AMD did some testing, however, and presented the following two slides:

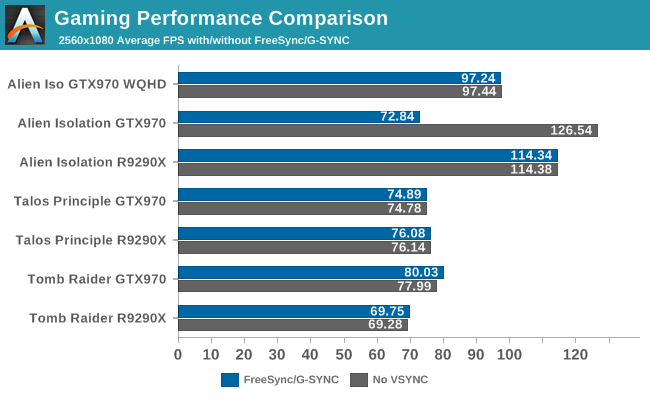

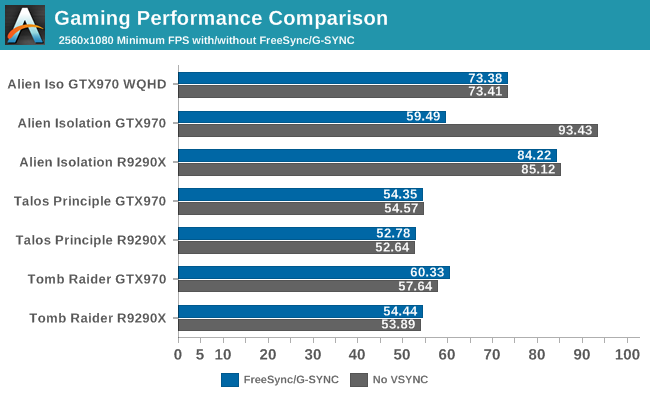

It’s probably safe to say that AMD is splitting hairs when they show a 1.5% performance drop in one specific scenario compared to a 0.2% performance gain, but we wanted to see if we could corroborate their findings. Having tested plenty of games, we already know that most games – even those with built-in benchmarks that tend to be very consistent – will have minor differences between benchmark runs. So we picked three games with deterministic benchmarks and ran with and without G-SYNC/FreeSync three times. The games we selected are Alien Isolation, The Talos Principle, and Tomb Raider. Here are the average and minimum frame rates from three runs:

Except for a glitch with testing Alien Isolation using a custom resolution, our results basically don’t show much of a difference between enabling/disabling G-SYNC/FreeSync – and that’s what we want to see. While AMD showed a performance drop with Alien Isolation using G-SYNC, we weren’t able to reproduce that in our testing; in fact, we even showed a measurable 2.5% performance increase with G-SYNC and Tomb Raider. But again let’s be clear: 2.5% is not something you’ll notice in practice. FreeSync meanwhile shows results that are well within the margin of error.
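For readers who want to run the same sanity check on their own benchmark logs, here is a minimal sketch of the comparison described above: average the repeated runs for each setting, compute the percent change, and see whether it falls inside the run-to-run spread. The function names and the frame rates in the usage example are hypothetical placeholders, not our measured results, and using the max-minus-min spread as a stand-in for "margin of error" is a simplifying assumption.

```python
from statistics import mean


def percent_change(baseline, value):
    """Percent change of value relative to baseline."""
    return (value - baseline) / baseline * 100.0


def summarize(runs_off, runs_on):
    """Compare average FPS over repeated runs with adaptive sync off vs. on.

    The 'noise' figure is the larger of the two run-to-run spreads
    (max minus min, as a percentage of that setting's mean). This is an
    assumed, rough proxy for the margin of error.
    """
    avg_off = mean(runs_off)
    avg_on = mean(runs_on)
    delta_pct = percent_change(avg_off, avg_on)
    spread_off = (max(runs_off) - min(runs_off)) / avg_off * 100.0
    spread_on = (max(runs_on) - min(runs_on)) / avg_on * 100.0
    noise_pct = max(spread_off, spread_on)
    return {
        "avg_off": avg_off,
        "avg_on": avg_on,
        "delta_pct": delta_pct,
        "noise_pct": noise_pct,
        "within_noise": abs(delta_pct) <= noise_pct,
    }


if __name__ == "__main__":
    # Hypothetical average FPS from three runs per setting (placeholder values).
    result = summarize([60.0, 61.0, 59.0], [60.4, 59.6, 60.6])
    print(result)
```

If the change between settings is smaller than the spread between identical runs, as it is for the placeholder numbers here, the difference is not something the benchmark can reliably distinguish from noise.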

What about that custom resolution problem on G-SYNC? We used the ASUS ROG Swift with the GTX 970, and we thought it might be useful to run the same resolution as the LG 34UM67 (2560x1080). Unfortunately, that didn’t work so well with Alien Isolation – the frame rates plummeted with G-SYNC enabled for some reason. Tomb Raider had a similar issue at first, but when we created additional custom resolutions with multiple refresh rates (60/85/100/120/144 Hz) the problem went away; we couldn't ever get Alien Isolation to run well with G-SYNC using our custom resolution, however. We’ve notified NVIDIA of the glitch, but note that when we tested Alien Isolation at the native WQHD setting the performance was virtually identical, so this only seems to affect performance with custom resolutions and it is also game specific.

For those interested in a more detailed graph of the frame rates of the three runs (six total per game and setting, three with and three without G-SYNC/FreeSync), we’ve created a gallery of the frame rates over time. There’s so much overlap that mostly the top line is visible, but that just proves the point: there’s little difference other than the usual minor variations between benchmark runs. And in one of the games, Tomb Raider, even using the same settings shows a fair amount of variation between runs, though the average FPS is pretty consistent.

350 Comments


Keysisitchy - Thursday, March 19, 2015 - link

RIP Gsync

The 'gsync module's was all smoke and mirrors and worthless.

RIP gsync. Freesync is here

imaheadcase - Thursday, March 19, 2015 - link

Not sure why people are bashing Gsync, it still is fantastic. So it puts a little more on price on hardware..you are still forgetting the main drive why gsync is better. It still works on nvidia hardware and freesync STILL requires a driver on nvidia hardware which they have already stated won't happen.

If you noticed, the displayed they are announced don't even care about the specs freesync could do. They are just the same if worse than Gsync. Notice the Gsynce IPS 144Hz monitors are coming out this month..

imaheadcase - Thursday, March 19, 2015 - link

To add to the above, freesync is not better than Gsync because its "open". Costs still trickle down somehow..and that cost is you having to stick with AMD hardware when you buy a new monitor. So the openness about is is really it is closed tech...just like Gsync.

iniudan - Thursday, March 19, 2015 - link

Nvidia will someday to have to provide freesync to comply with spec of more recent displayport version. Right now they are just keeping older 1.2 version on their gpu, simply as to not have to adopt it.

dragonsqrrl - Thursday, March 19, 2015 - link

No current or upcoming DP spec (including DP 1.3) requires adaptive sync.

eddman - Thursday, March 19, 2015 - link

Do you mean to say that it's available for 1.3 but optional, or that 1.3 doesn't support adaptivesync at all?

It does support it in 1.3, according to their FAQ, but doesn't say it's mandatory or not.

http://www.displayport.org/faq/#DisplayPort 1.3 FAQs

DanNeely - Thursday, March 19, 2015 - link

It's optional. Outside of gaming it doesn't have any real value; so forcing mass market displays to use a marginally more complex controller/more capable panel doesn't add any value.

mutantmagnet - Friday, March 20, 2015 - link

It has value for movies. The screen tearing that can occur would be nice to remove.

D. Lister - Friday, March 20, 2015 - link

Movies can't have screen tearing, because the GPU is just decoding them not actually generating the visuals like in games.

ozzuneoj86 - Friday, March 20, 2015 - link

That is definitely not true. Tearing happens between the graphics card and the display, it doesn't matter what the source is. If you watch a movie at 24fps on a screen that is running at 60Hz connected to a PC, there is a chance for tearing.

Tearing is just less common on movies because the frame rates are usually much lower than the refresh rate of the display, so its less likely to be visible.