The Qualcomm Snapdragon X Architecture Deep Dive: Getting To Know Oryon and Adreno X1

by Ryan Smith on June 13, 2024 9:00 AM EST

Adreno X1 GPU Architecture: A More Familiar Face

Shifting gears, let’s talk about the Snapdragon X SoC’s GPU architecture: Adreno X1.

Unlike the Oryon CPU cores, Adreno X1 is not a wholly new hardware architecture. In fact, with 3 generations of 8cx SoCs before it, it’s not even new to Windows. Still, Qualcomm has been notoriously tight-lipped about their GPU architectures over the years, so the GPU architecture may as well be new to AnandTech readers. Suffice it to say, I’ve been trying to get a detailed disclosure from Qualcomm for over a decade at this point, and with Snapdragon X, they’re finally delivering.

At a high level, the Adreno X1 GPU architecture is the latest revision of Qualcomm’s ongoing series of Adreno architectures, with the X1 representing the 7th generation. Adreno itself is based on an acquisition from ATI over 15 years ago (Adreno is an anagram of Radeon), and over the years Qualcomm’s Adreno architecture has more often than not been the GPU to beat in the Android space.

Things are a bit different in the Windows space, of course, as discrete GPUs push integrated GPUs well off to the side for workloads that absolutely need high GPU performance. And because game development has never become fully divorced from GPU architectures and drivers, Qualcomm’s minuscule presence in the Windows market over the years has led to them often being overlooked by game developers. Still, Qualcomm isn’t new to the Windows game, which gives them a leg up as they try to capture a larger share of the Windows market.

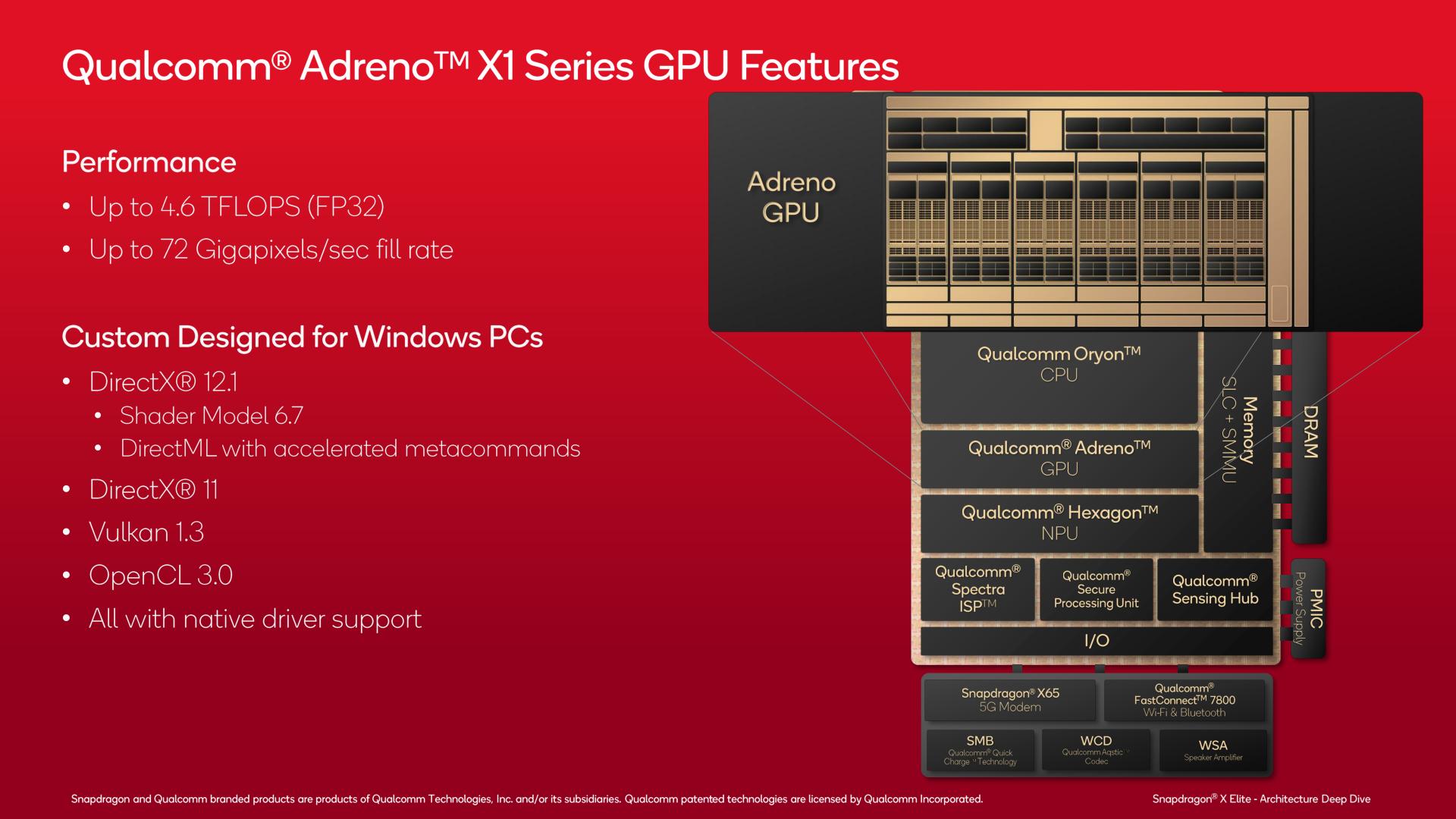

From a feature standpoint, the Adreno X1 GPU architecture is unfortunately a bit dated compared to contemporary x86 SoCs. While the architecture does support ray tracing, the chip can't support the full DirectX 12 Ultimate (feature level 12_2) feature set. As a result, it must report itself to DirectX applications as a feature level 12_1 GPU, and most games will restrict themselves to that feature set.

That said, Adreno X1 does support some advanced features, which are already being actively used on Android, where DirectX’s feature levels do not exist. As previously noted, there is ray tracing support, and this is exposed to Windows applications via the Vulkan API and its ray query calls. Given how limited Vulkan use is on Windows, Qualcomm understandably doesn’t go into the subject in much depth; but it sounds like Qualcomm’s implementation is a level 2 design, with hardware ray testing but no hardware BVH processing, which would make it similar in scope to AMD’s RDNA2 architecture.

DirectX 12 Feature Levels

| Feature | 12_2 (DX12 Ult.) | 12_1 | 12_0 |
|---|---|---|---|
| Ray Tracing (DXR 1.1) | Yes | No | No |
| Variable Rate Shading (Tier 2) | Yes | No | No |
| Mesh Shaders | Yes | No | No |
| Sampler Feedback | Yes | No | No |
| Conservative Rasterization | Yes | Yes | No |
| Raster Order Views | Yes | Yes | No |
| Tiled Resources (Tier 2) | Yes | Yes | Yes |
| Bindless Resources (Tier 2) | Yes | Yes | Yes |
| Typed UAV Load | Yes | Yes | Yes |

Otherwise, variable rate shading (VRS) tier 2 is supported as well, which is critical for optimizing shader workloads on mobile GPUs. So it appears the missing features holding the X1 back from DirectX 12 Ultimate support are mesh shaders and sampler feedback, which admittedly require some pretty big hardware changes.

In terms of API support, as previously noted, the Adreno X1 GPU supports DirectX and Vulkan. Qualcomm offers native drivers/paths for DirectX 12 and DirectX 11, Vulkan 1.3, and OpenCL 3.0. The only notable exception here is DirectX 9, which, as with fellow SoC vendor Intel, is implemented using D3D9on12, Microsoft's mapping layer for translating DX9 commands to DX12. DX9 games are few and far between these days (the API was supplanted by DX10/11 over 15 years ago), but since this is Windows, backwards compatibility is an ongoing expectation.

Meanwhile, on the compute front, Microsoft’s new DirectML API for low-level GPU access for machine learning is supported. Qualcomm even has optimized metacommands written for the GPU, so that software tapping into DirectML can run more efficiently without knowing anything else about the architecture.

Adreno X1 GPU Architecture In Depth

High-level functionality aside, let’s take a look at the low-level architecture.

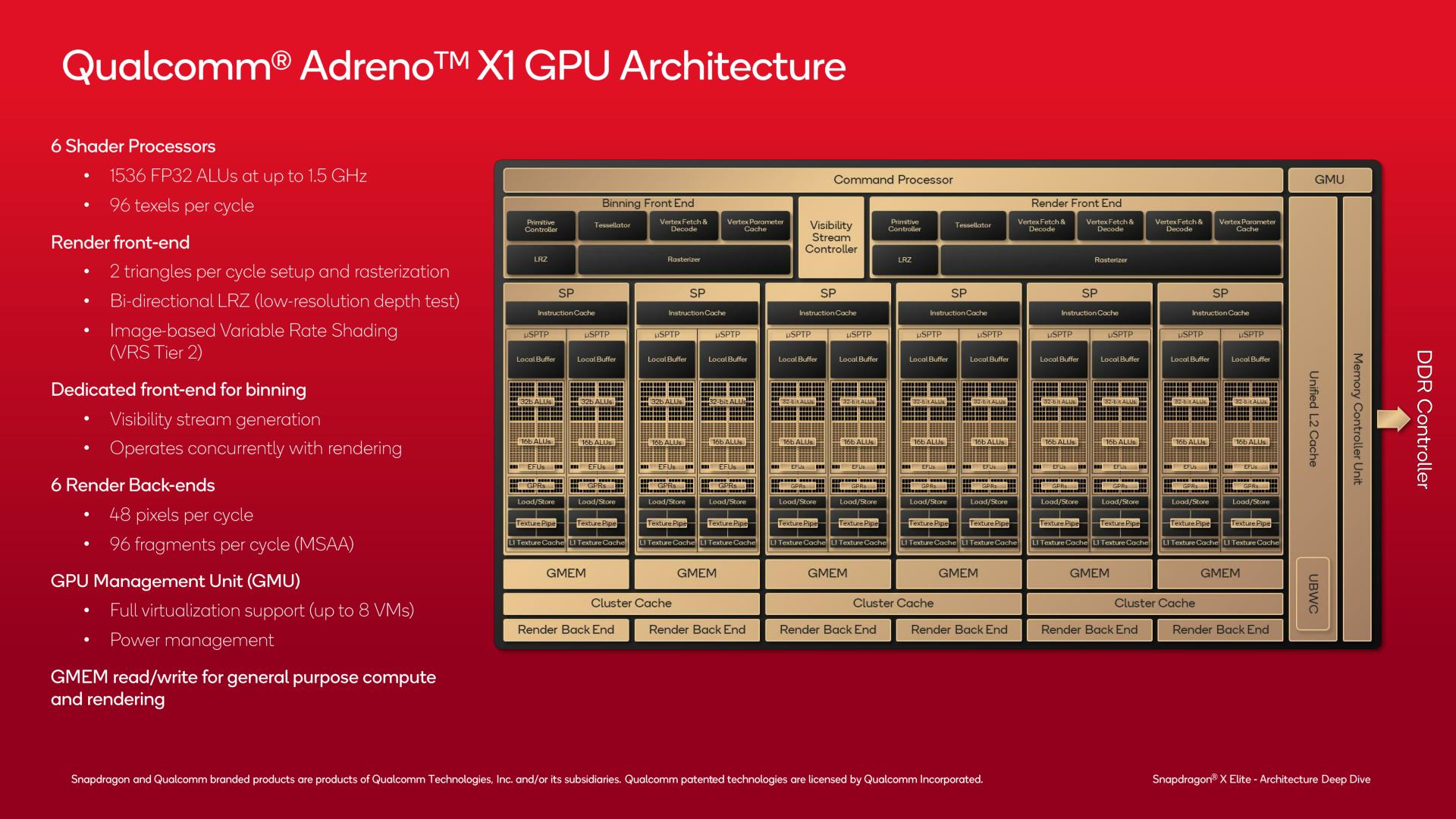

The Adreno X1 GPU is split up into 6 shader processor (SP) blocks, each offering 256 FP32 ALUs for a total of 1536 ALUs. With a peak clockspeed of 1.5GHz, this gives the integrated GPU on Snapdragon X a maximum throughput of 4.6 TFLOPS (with lesser amounts for lower-end SKUs).
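The 4.6 TFLOPS figure follows directly from the ALU count and clock speed. The sketch below reproduces that arithmetic, assuming (as is the usual convention for peak-FLOPS figures) that each FP32 ALU retires one fused multiply-add per clock, counted as 2 floating-point operations. The function name is purely illustrative.

```python
def peak_fp32_tflops(sp_blocks: int, alus_per_sp: int, clock_ghz: float) -> float:
    """Peak FP32 throughput in TFLOPS.

    Assumes every ALU retires one fused multiply-add per clock,
    counted as 2 floating-point operations (the usual convention).
    """
    total_alus = sp_blocks * alus_per_sp
    gflops = total_alus * 2 * clock_ghz  # ALUs * FLOPs/clock * GHz = GFLOPS
    return gflops / 1000.0


tflops = peak_fp32_tflops(sp_blocks=6, alus_per_sp=256, clock_ghz=1.5)
print(f"{tflops:.2f} TFLOPS")  # prints 4.61 TFLOPS
```

Lower-end SKUs with fewer active SP blocks or lower peak clocks would plug different values into the same formula.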

Following the traditional front-end/SP/back-end split we see with other GPUs, the front-end of the GPU handles triangle setup and rasterization, as well as binning for the GPU’s tile-based rendering mode. Of note, the GPU front-end can set up and rasterize 2 triangles per clock, which is not going to turn any heads in the PC space in 2024, but is respectable for an integrated GPU. To boost its performance, the front-end can also do early depth testing to reject polygons that will never be visible before they are even rasterized.
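Qualcomm doesn't detail how its early depth test works, but a common way to reject whole polygons before rasterization is a coarse, hierarchical-Z-style comparison against the farthest depth already stored in a screen region. The sketch below shows that generic technique, not Qualcomm's confirmed design; it assumes a LESS depth test (smaller values are closer), and the function names are hypothetical.

```python
from typing import Sequence


def tile_max_depth(depth_tile: Sequence[Sequence[float]]) -> float:
    """Farthest depth currently stored in a tile of the depth buffer."""
    return max(max(row) for row in depth_tile)


def can_reject_before_raster(vertex_depths: Sequence[float],
                             depth_tile: Sequence[Sequence[float]]) -> bool:
    """Coarse test for a LESS depth comparison (smaller = closer).

    Depth varies linearly across a triangle in screen space, so its nearest
    point is at a vertex. If even that point is no closer than the farthest
    stored depth in the tile, no fragment of the triangle can pass there.
    """
    return min(vertex_depths) >= tile_max_depth(depth_tile)


depth_tile = [[0.20, 0.30],
              [0.25, 0.40]]
print(can_reject_before_raster([0.5, 0.6, 0.7], depth_tile))  # True: fully hidden
print(can_reject_before_raster([0.1, 0.9, 0.5], depth_tile))  # False: may be visible
```

The test is conservative: it never rejects a visible triangle, but it may let through triangles that a per-pixel test would later discard.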

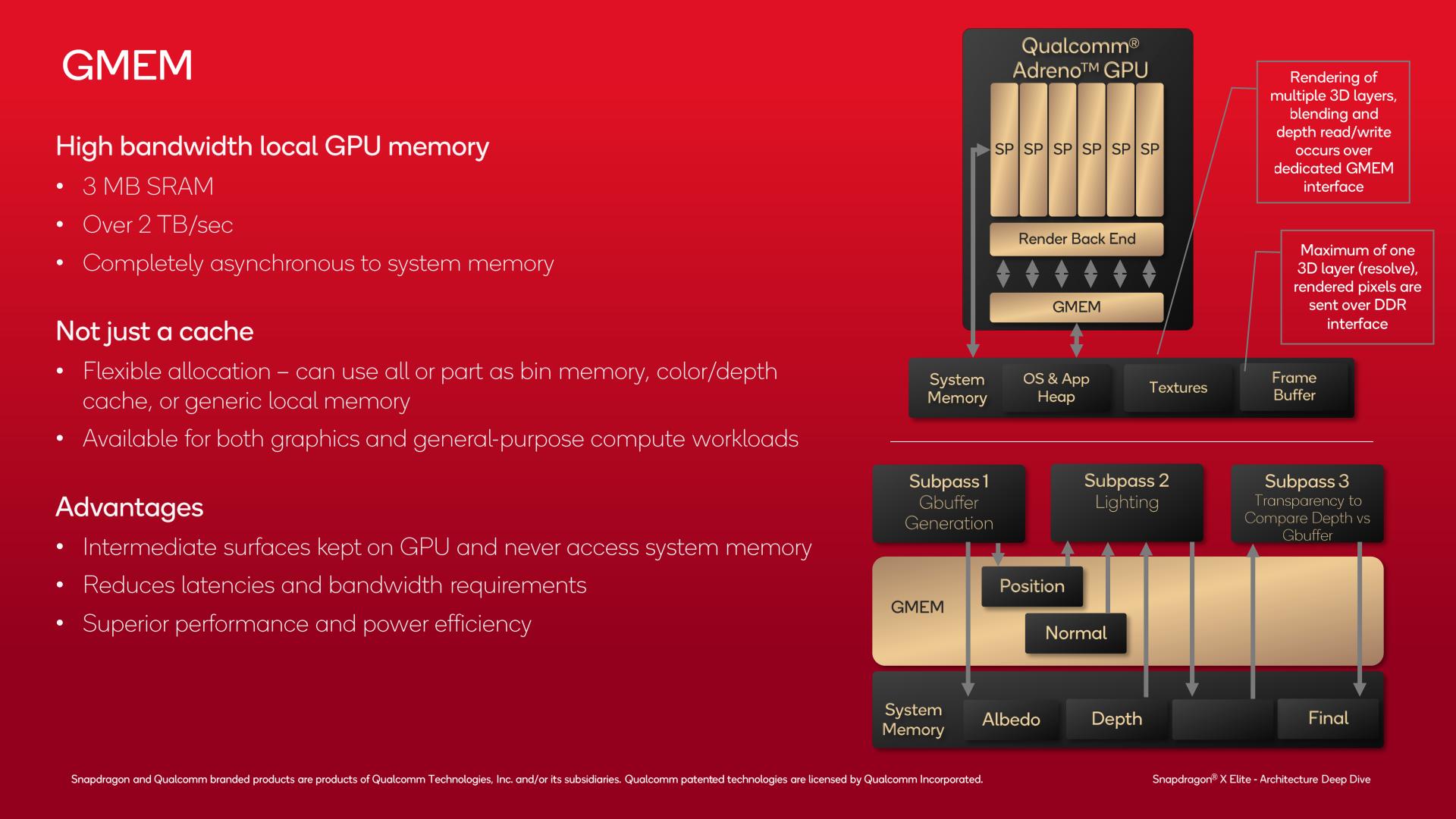

Meanwhile, the back-end is made from 6 render output units (ROPs), which can process 8 pixels per cycle each, for a total of 48 pixels/clock rendered. The render back-ends are plugged into a local cache, as well as an important scratchpad memory that Qualcomm calls GMEM (more on this in a bit).
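As a rough sketch of what 48 pixels/clock means in practice, the arithmetic below converts it into a peak pixel fill rate. It assumes, without confirmation from Qualcomm, that the ROPs run at the same 1.5GHz peak clock quoted for the shader array; the function name is illustrative.

```python
from typing import Tuple


def peak_fillrate(rops: int, pixels_per_rop_clock: int,
                  clock_ghz: float) -> Tuple[int, float]:
    """Return (pixels per clock, peak Gpixels/s) for a ROP configuration.

    Assumes the ROPs are clocked at `clock_ghz`, which is not confirmed.
    """
    pixels_per_clock = rops * pixels_per_rop_clock
    gpixels_per_s = pixels_per_clock * clock_ghz
    return pixels_per_clock, gpixels_per_s


per_clock, gpix = peak_fillrate(rops=6, pixels_per_rop_clock=8, clock_ghz=1.5)
print(f"{per_clock} pixels/clock, {gpix:.0f} Gpixels/s")  # 48 pixels/clock, 72 Gpixels/s
```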

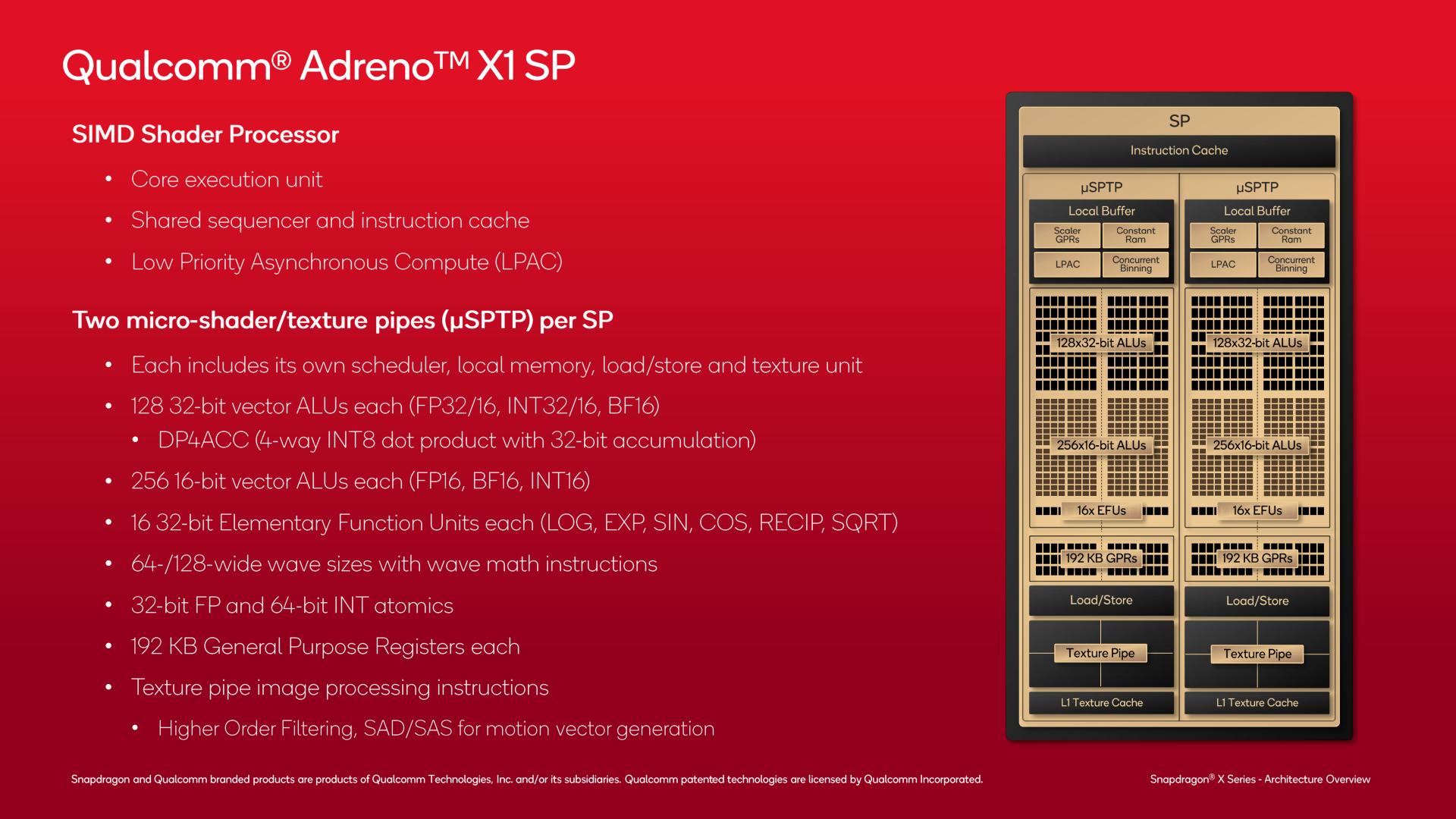

The individual shader processor blocks themselves are fairly conventional, especially if you’ve seen an NVIDIA GPU architecture diagram. Each SP is further subdivided into two micro-pipes (micro shader pipe texture pipe, or uSPTP), each of which is helmed by its own dedicated scheduler and has its own resources such as local memory, load/store units, and texture units.

Each uSPTP offers 128 FP32 ALUs. And, in a bit of a surprise, there is also a separate set of 256 FP16 ALUs, meaning that Adreno X1 doesn’t have to share resources when processing FP16 and FP32 data, unlike architectures which execute FP16 operations on FP32 ALUs. Though for good measure, the FP32 units can be used for FP16 operations as well, if the GPU scheduler determines it’s needed.

Finally, there are 16 elementary functional units (EFUs), which handle transcendental functions such as LOG, SQRT, and other rare (but important) mathematical functions.

Surprisingly, the Adreno X1 uses a rather large wavefront size. Depending on the mode, Qualcomm uses either 64- or 128-lane-wide waves, with Qualcomm telling us that they typically use 128-wide wavefronts for 16-bit operations such as fragment shaders, while 64-wide wavefronts are used for 32-bit operations (e.g. pixel shaders).

Comparatively, AMD’s RDNA architectures use 32- or 64-wide wavefronts, and NVIDIA’s wavefronts (warps) are always 32 wide. Wide designs have fallen out of favor in the PC space due to the difficulty in keeping them fed (too much divergence), so this is interesting to see. Ultimately, despite the usual wavefront size concerns, it seems to be working well for Qualcomm given the high GPU performance of their smartphone SoCs, which is no small feat given the high resolution of phone screens.
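Why do wide wavefronts raise divergence concerns? When lanes in one wave disagree on a branch, SIMD hardware has to run both paths with some lanes masked off. The toy model below assumes each lane takes a branch independently with probability `p_taken`; real shaders are far more spatially coherent, so this exaggerates divergence, but it shows how the odds of a wave splitting grow with width. The model and its names are illustrative assumptions, not anything Qualcomm has described.

```python
def divergence_probability(wave_width: int, p_taken: float) -> float:
    """Probability that a wave has lanes on both sides of a branch.

    Toy model: each lane independently takes the branch with probability
    `p_taken`. The wave stays uniform only if all lanes agree.
    """
    all_taken = p_taken ** wave_width
    none_taken = (1.0 - p_taken) ** wave_width
    return 1.0 - all_taken - none_taken


for width in (32, 64, 128):
    print(width, round(divergence_probability(width, 0.02), 3))
```

Even with a branch taken by only 2% of lanes, a 128-wide wave in this model is far more likely to execute both paths than a 32-wide one.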

ALUs aside, each uSPTP includes its own texture units, which are capable of spitting out 8 texels per clock. There’s also limited image processing functionality here, including texture filtering, and even SAD/SAS instructions for generating motion vectors.
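SAD stands for sum of absolute differences, the standard cost function for block-matching motion estimation: for each candidate offset, compare a block of the current frame against the reference frame and keep the offset with the lowest SAD. The plain-Python sketch below shows that generic search; it is not Qualcomm's instruction or API, and all names are hypothetical. Hardware SAD instructions accelerate the inner loop.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]


def sad(cur: Frame, ref: Frame, bx: int, by: int, dx: int, dy: int, size: int) -> int:
    """Sum of absolute differences between the size x size block at (bx, by)
    in `cur` and the block offset by (dx, dy) in `ref`."""
    total = 0
    for y in range(size):
        for x in range(size):
            total += abs(cur[by + y][bx + x] - ref[by + y + dy][bx + x + dx])
    return total


def best_motion_vector(cur: Frame, ref: Frame, bx: int, by: int,
                       size: int, search: int) -> Tuple[int, int]:
    """Exhaustive block matching: return the (dx, dy) in [-search, search]
    that minimizes SAD, skipping offsets that fall outside `ref`."""
    height, width = len(ref), len(ref[0])
    best_cost: Optional[int] = None
    best_mv = (0, 0)
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            in_bounds = (0 <= bx + dx and bx + dx + size <= width
                         and 0 <= by + dy and by + dy + size <= height)
            if not in_bounds:
                continue
            cost = sad(cur, ref, bx, by, dx, dy, size)
            if best_cost is None or cost < best_cost:
                best_cost, best_mv = cost, (dx, dy)
    return best_mv


# An 8x8 frame with a bright 2x2 square that moves one pixel to the right.
ref = [[0] * 8 for _ in range(8)]
cur = [[0] * 8 for _ in range(8)]
for y in (2, 3):
    for x in (2, 3):
        ref[y][x] = 255
        cur[y][x + 1] = 255

print(best_motion_vector(cur, ref, bx=3, by=2, size=2, search=2))  # (-1, 0)
```

The result says the block now at (3, 2) came from one pixel to the left in the reference frame.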

Finally, there’s quite a bit of register space within each uSPTP. Along with the L1 texture cache, there’s a total of 192KB of general purpose registers to keep the various blocks fed and to try to hide latency bubbles in the wavefronts.
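A large register file matters because it caps how many wavefronts can be resident at once, and more resident waves give the scheduler more work to switch to while others wait on memory. The sketch below estimates that cap under simplifying assumptions that are not Qualcomm's: the full 192KB per uSPTP is treated as register file, every lane gets the same number of 32-bit registers, and allocation granularity, FP16 register packing, and other occupancy limits are ignored.

```python
def resident_waves(regs_per_thread: int, wave_width: int,
                   register_file_bytes: int = 192 * 1024,
                   bytes_per_reg: int = 4) -> int:
    """Upper bound on waves that fit in the register file at once.

    Hypothetical model: every lane of a wave gets `regs_per_thread`
    32-bit registers, with no allocation granularity or other limits.
    """
    bytes_per_wave = regs_per_thread * bytes_per_reg * wave_width
    return register_file_bytes // bytes_per_wave


for width in (64, 128):
    for regs in (16, 32, 64):
        print(f"wave{width}, {regs} regs/thread -> {resident_waves(regs, width)} waves")
```

Under this model, doubling either the wave width or the per-thread register count halves the number of waves available to hide latency.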

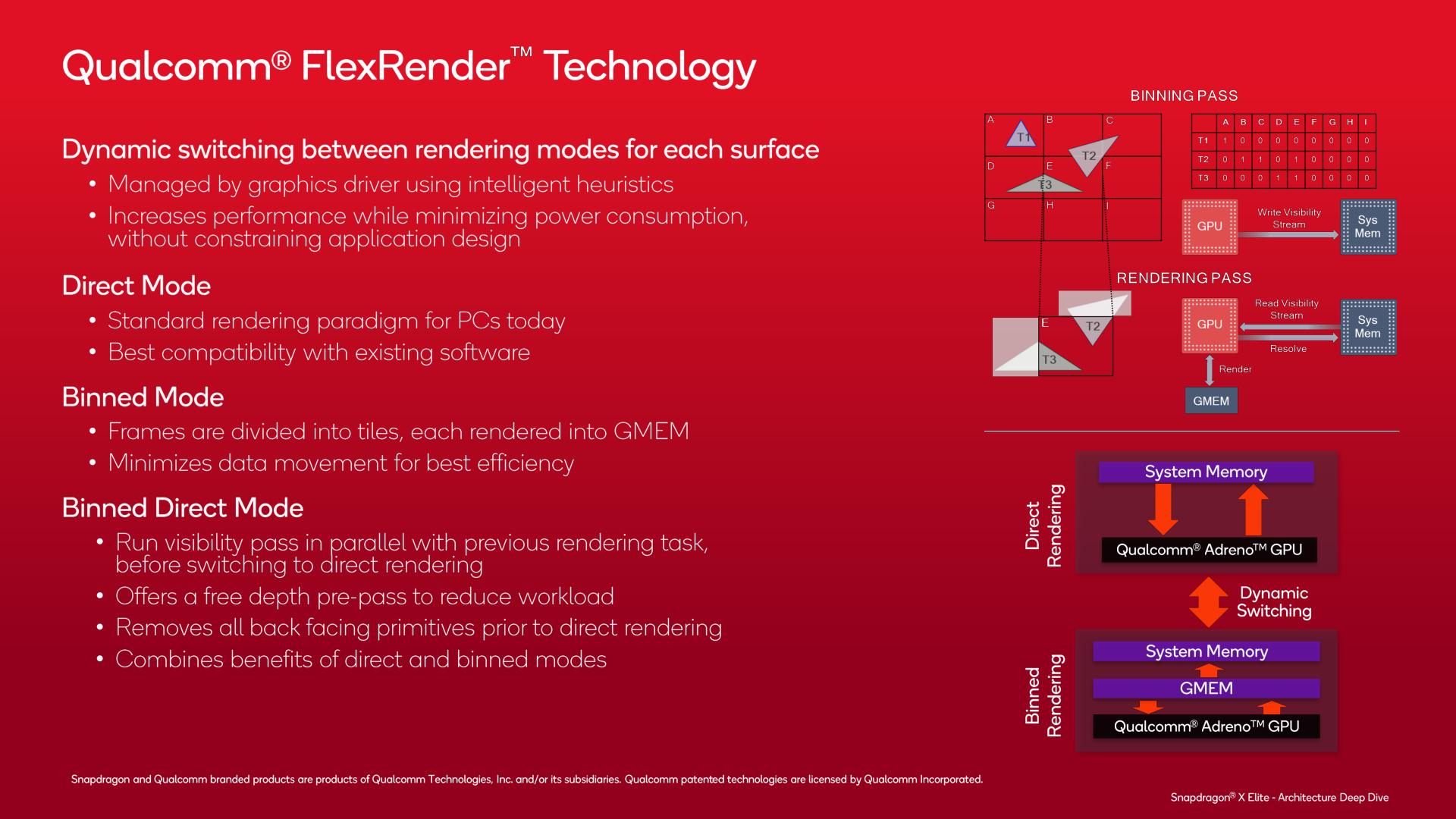

As noted earlier, the Adreno X1 supports multiple rendering modes in order to get the best performance possible, which the company calls their FlexRender technology. This is a subject that doesn’t come up too often with PC GPU designs, but is of greater importance in the mobile space for historical and efficiency reasons.

Besides the traditional direct/immediate mode rendering method (the typical mode for most PC GPUs), Qualcomm also supports tile-based rendering, which they call binned mode. As with other tile-based renderers, binned mode splits a screen up into multiple tiles, and then renders each one separately. This allows the GPU to only work on a subset of data at once, keeping most of that data in its local caches and minimizing the amount of traffic that goes to DRAM, which is both power-expensive and performance-constricting.
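To make "splits a screen up into multiple tiles" concrete, the sketch below shows a simple binning step: each triangle's screen-space bounding box is used to append it to the list of every tile it might touch, and the GPU would then render each tile using only its own list. Bounding-box binning is a common generic approach; Qualcomm hasn't said whether Adreno bins this way, and the function and parameter names are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vertex = Tuple[float, float]
Triangle = Tuple[Vertex, Vertex, Vertex]


def bin_triangles(triangles: Sequence[Triangle], screen_w: int, screen_h: int,
                  tile_w: int, tile_h: int) -> Dict[Tuple[int, int], List[int]]:
    """Map each screen tile (tx, ty) to the indices of triangles that may touch it.

    Uses the triangle's screen-space bounding box, which is conservative: a tile
    may receive a triangle that only its bounding box (not the triangle) overlaps.
    """
    tiles_x = math.ceil(screen_w / tile_w)
    tiles_y = math.ceil(screen_h / tile_h)
    bins: Dict[Tuple[int, int], List[int]] = {}
    for index, tri in enumerate(triangles):
        xs = [v[0] for v in tri]
        ys = [v[1] for v in tri]
        # Clamp the bounding box to the range of valid tile coordinates.
        tx0 = max(0, math.floor(min(xs)) // tile_w)
        tx1 = min(tiles_x - 1, math.floor(max(xs)) // tile_w)
        ty0 = max(0, math.floor(min(ys)) // tile_h)
        ty1 = min(tiles_y - 1, math.floor(max(ys)) // tile_h)
        for ty in range(ty0, ty1 + 1):
            for tx in range(tx0, tx1 + 1):
                bins.setdefault((tx, ty), []).append(index)
    return bins


triangles = [((2, 2), (10, 2), (2, 10)),      # small, inside tile (0, 0)
             ((20, 20), (40, 20), (20, 40))]  # straddles all four tiles
bins = bin_triangles(triangles, screen_w=64, screen_h=64, tile_w=32, tile_h=32)
for tile in sorted(bins):
    print(tile, bins[tile])
```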

And finally, Adreno X1 has a third mode that combines the best of binned and direct rendering, which they call binned direct mode. This mode runs a binned visibility pass before switching to direct rendering, as a means to further cull non-visible triangles (such as back-facing ones) so that they don’t get rasterized. Only after that data is culled does the GPU switch over to direct rendering mode, now with a reduced workload.

Key to making the binned rendering modes work is the GPU’s GMEM, a 3MB SRAM block that serves as a very high-bandwidth scratchpad for the GPU. Architecturally, GMEM is more than a cache, as it’s decoupled from the system memory hierarchy, and the GPU can do virtually anything it wants with the memory (including using it as a cache, if need be).

At 3MB in size, the GMEM block is not very large overall. But that’s big enough to store a tile – and thus prevent a whole lot of traffic from hitting the system memory. And it’s fast, too, with 2.3TB/second of bandwidth, which is enough bandwidth to allow the ROPs to run at full-tilt without being constrained by memory bandwidth.
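A back-of-the-envelope calculation shows how a 3MB GMEM translates into tile counts. The sketch assumes, hypothetically, that "3MB" means 3 MiB, that all of it holds tile data, and that each sample needs 4 bytes of color (RGBA8) plus 4 bytes of depth/stencil (D24S8). Real drivers also pick tile dimensions aligned to hardware granularity, so this is only a lower bound.

```python
GMEM_BYTES = 3 * 1024 * 1024  # assumption: 3 MiB, all of it used for tile storage


def min_tile_count(width: int, height: int, bytes_per_pixel: int,
                   gmem_bytes: int = GMEM_BYTES) -> int:
    """Smallest number of tiles such that one tile's attachments fit in GMEM."""
    pixels_per_tile = gmem_bytes // bytes_per_pixel
    total_pixels = width * height
    return (total_pixels + pixels_per_tile - 1) // pixels_per_tile  # ceiling division


# 4 bytes color (RGBA8) + 4 bytes depth/stencil (D24S8) per sample
for (w, h), samples in [((1920, 1080), 1), ((2560, 1600), 1), ((1920, 1080), 4)]:
    print(w, h, f"{samples}x MSAA:", min_tile_count(w, h, bytes_per_pixel=8 * samples))
```

Under these assumptions a 1080p frame needs at least 6 tiles, while 4x MSAA quadruples the per-pixel footprint and pushes that to 22.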

With the GMEM block in place, in an ideal scenario, the GPU only needs to write out to DRAM once per tile, when it finishes rendering said tile. In practice, of course, there ends up being more DRAM traffic than that, but this is one of Qualcomm’s key features for avoiding chewing up memory bandwidth and power with GPU writes to DRAM.
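The toy model below illustrates why writing each tile out once saves so much DRAM traffic. It contrasts a deliberately pessimistic immediate-mode case, where every shaded fragment reads and writes depth and writes color in DRAM with no caching or compression, against an ideal binned case, where depth and overdraw stay in GMEM and only final colors are written. The byte sizes and overdraw factor are hypothetical, and the binning pass and per-tile geometry reprocessing that binned mode pays for are not modeled.

```python
from typing import Tuple

COLOR_BYTES = 4  # hypothetical RGBA8 color
DEPTH_BYTES = 4  # hypothetical D24S8 depth/stencil


def immediate_mode_bytes(width: int, height: int, overdraw: int) -> int:
    """Worst case: each fragment reads and writes depth and writes color in DRAM."""
    fragments = width * height * overdraw
    return fragments * (2 * DEPTH_BYTES + COLOR_BYTES)


def binned_mode_bytes(width: int, height: int) -> int:
    """Ideal case: depth and overdraw stay in GMEM; each final color is written once."""
    return width * height * COLOR_BYTES


def compare(width: int, height: int, overdraw: int) -> Tuple[int, int]:
    return immediate_mode_bytes(width, height, overdraw), binned_mode_bytes(width, height)


imm, binned = compare(1920, 1080, overdraw=3)
print(f"immediate: {imm / 1e6:.1f} MB, binned: {binned / 1e6:.1f} MB per frame")
```

Real immediate-mode GPUs rely on caches and compression to close much of this gap, which is why the ratio here should be read as an upper bound rather than a measured result.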

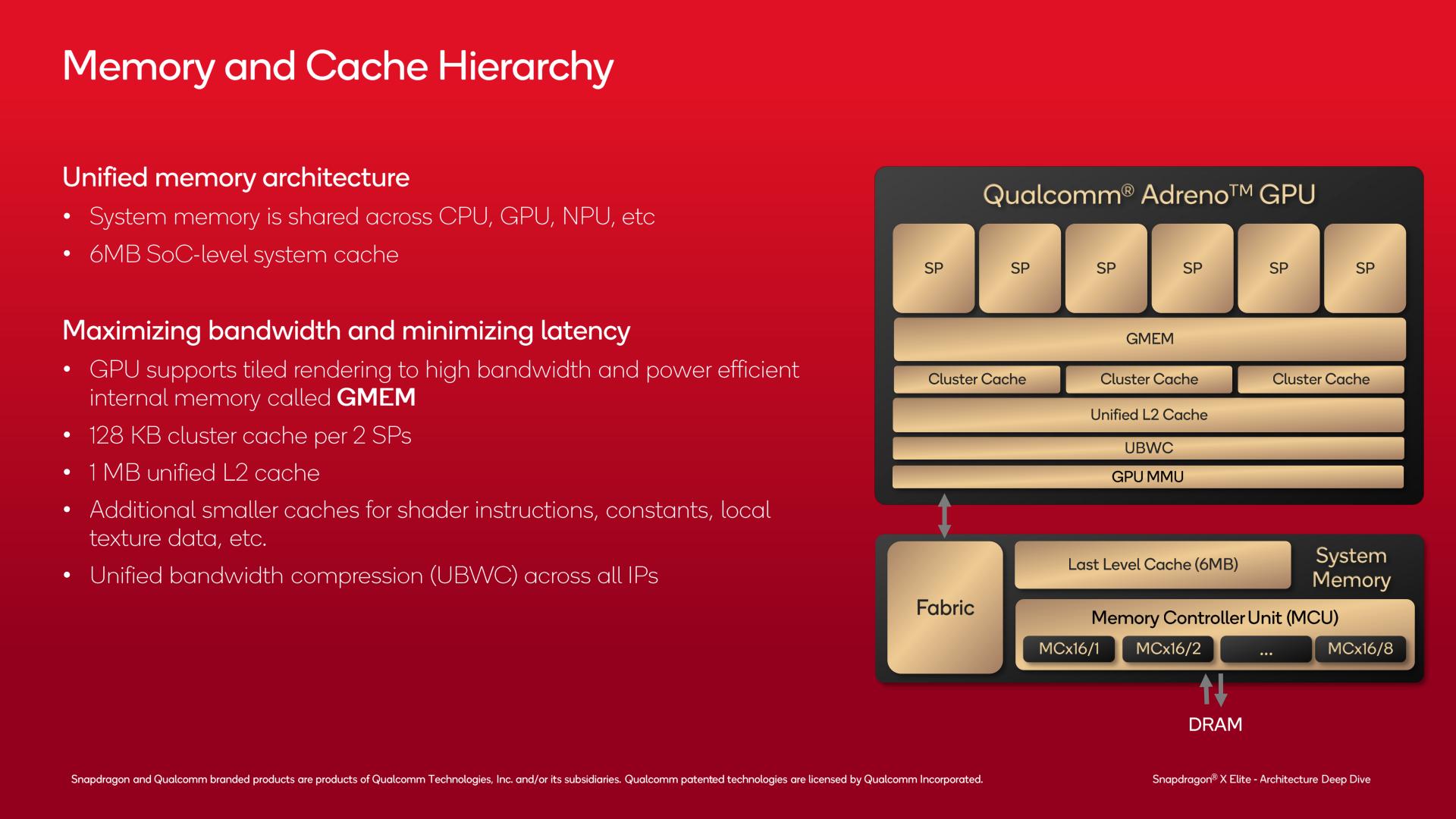

And when the Adreno X1 does need to go to system memory, it will go through its own remaining caches, before finally reaching the Snapdragon X’s shared memory controller.

Above the GMEM, there is a 128KB cluster cache for each pair of SPs (for 384KB in total for a full Snapdragon X). And above that still is a 1MB unified L2 cache for the GPU.

Finally, this leaves the system level cache (L3/SLC), which serves all of the processing blocks on the SoC. And when all else fails, there is the DRAM.

On a concluding note, while Qualcomm doesn’t have a dedicated slide for this, it’s interesting that the Adreno X1 GPU also includes a dedicated RISC controller which serves as a GPU Management Unit (GMU). The GMU provides several features, the most important of which is power management within the GPU. It works in concert with power management requests elsewhere in the SoC, allowing the chip to reallocate power between the different blocks depending on what the SoC decides is the most performant allocation.
