Gigabyte GA-7PESH1 Review: A Dual Processor Motherboard through a Scientist’s Eyes

by Ian Cutress on January 5, 2013 10:00 AM EST - Posted in Motherboards, Gigabyte, C602

Explicit Finite Difference

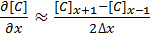

For a grid of points (nodes) over a simulation space, each point can describe a number of quantities relating to the simulation, such as concentration, electric field and temperature. For concentration, the gradients in the material balance are approximated by the differences between the concentrations at neighbouring points. Consider the concentration gradient of species C at point x in one dimension, where [C]_x is the concentration of C at x:

[1]

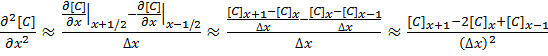

The second derivative at point x is obtained by combining the first differences at the two adjacent half-points, x − ½ and x + ½:

[2]
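For reference, the textbook central-difference forms on a regular grid with spacing Δx are shown below, where x ± 1 denotes the neighbouring nodes. These are the standard expressions, not a reproduction of the article's own equation images, so the notation may differ.

$$
\left.\frac{\partial [C]}{\partial x}\right|_{x} \approx \frac{[C]_{x+1} - [C]_{x-1}}{2\,\Delta x}
$$

$$
\left.\frac{\partial^{2} [C]}{\partial x^{2}}\right|_{x} \approx \frac{1}{\Delta x}\left(\frac{[C]_{x+1} - [C]_{x}}{\Delta x} - \frac{[C]_{x} - [C]_{x-1}}{\Delta x}\right) = \frac{[C]_{x+1} - 2[C]_{x} + [C]_{x-1}}{\Delta x^{2}}
$$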

Substituting equations [1] and [2] into the partial differential equation [3] below yields a set of linear equations that can be solved.

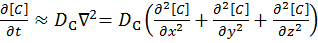

Fick’s second law, which governs the rate of diffusional mass transport, can be written in three dimensions as:

[3]

where D is the diffusion coefficient of the chemical species and t is time. For the multi-dimensional simulations used in this work, the Laplacian is expanded over the Cartesian dimensions x, y and z.
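Written out in Cartesian coordinates, before any dimensional transformation, the standard form of this diffusion equation is shown below. In the 2D simulations the z term is simply dropped.

$$
\frac{\partial [C]}{\partial t} = D\,\nabla^{2}[C] = D\left(\frac{\partial^{2}[C]}{\partial x^{2}} + \frac{\partial^{2}[C]}{\partial y^{2}} + \frac{\partial^{2}[C]}{\partial z^{2}}\right)
$$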

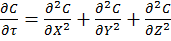

Dimensional transformations are often applied in these simulations to remove the dependence on scaling factors. Expanding equation [3] under specific transformations (not detailed here) gives equation [4], which is the equation to be solved.

[4]

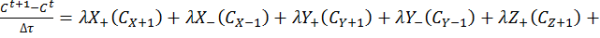

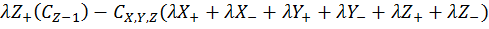

Expanding equation [4] with the finite differences of equations [1] and [2] and collating coefficients leads to equation [5].

Equation [5] describes a set of concentrations that can each be calculated independently of one another: every concentration at time step t+1 is computed by an explicit algorithm from the concentrations at time step t.
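To make the explicit update concrete, here is a minimal sketch for the simplest case, the untransformed 1D form of equation [3]. It assumes a regular grid, fixed boundary nodes and r = DΔt/Δx² (our notation, not the article's); it is not the article's equation [5], which uses transformed variables. Each new value reads only values from time t, which is why every node can be computed independently.

```cpp
#include <cstddef>
#include <vector>

// One explicit (forward-time, centred-space) step of 1D diffusion.
// r = D * dt / (dx * dx); the first and last nodes are held fixed.
std::vector<double> explicit_step_1d(const std::vector<double>& c, double r) {
    std::vector<double> next(c);  // copy, so boundary values carry over unchanged
    for (std::size_t i = 1; i + 1 < c.size(); ++i) {
        // The new value depends only on the old values c[i-1], c[i], c[i+1].
        next[i] = c[i] + r * (c[i + 1] - 2.0 * c[i] + c[i - 1]);
    }
    return next;
}
```

For example, `explicit_step_1d({0.0, 1.0, 1.0, 1.0, 0.0}, 0.25)` gives `{0.0, 0.75, 1.0, 0.75, 0.0}`: the nodes next to the zero-concentration boundaries lose material first.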

The explicit algorithm is rarely used in electrochemical simulation, usually because of its stability constraints and the time taken to simulate. However, it offers complete parallelisation and a low workload per thread, which makes it ideal for multi-processor systems and graphics cards and should help overcome these issues.

The explicit algorithm is stable when, for equation [4], the Courant–Friedrichs–Lewy condition [6] holds.

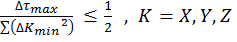

[6]

Thus the upper bound on the time step is set by the smallest grid spacing used.
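For the untransformed diffusion equation [3] on a regular grid, the standard von Neumann stability limit of the explicit scheme is D Δt (1/Δx² + 1/Δy² + 1/Δz²) ≤ ½. The article's condition [6] applies to the transformed equation [4] and may be written differently, so the helper below is a hypothetical sketch of the untransformed limit only.

```cpp
#include <initializer_list>

// Largest stable explicit time step for diffusion coefficient D on a regular
// grid with one spacing per dimension, from D * dt * sum(1 / h^2) <= 1/2.
double max_stable_dt(double D, std::initializer_list<double> spacings) {
    double inv_sq_sum = 0.0;
    for (double h : spacings) {
        inv_sq_sum += 1.0 / (h * h);  // the smallest spacing dominates this sum
    }
    return 0.5 / (D * inv_sq_sum);
}
```

With D = 1 and unit spacing, `max_stable_dt(1.0, {1.0})` returns 0.5 and `max_stable_dt(1.0, {1.0, 1.0, 1.0})` returns 1/6. Halving the spacing, `max_stable_dt(1.0, {0.5})`, returns 0.125: the allowed step shrinks with the square of the smallest spacing, which is what makes fine grids expensive for the explicit method.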

There are variations of the explicit algorithm that improve its stability, such as the DuFort–Frankel method, which treats the concentration at each point as a linear function of time, such that:

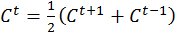

[7]

However, this method requires the concentrations from two time steps (t and t−1) to calculate the next step, which doubles memory usage.
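As a sketch of the idea, assuming the standard 1D DuFort–Frankel scheme on the untransformed equation (the article's form [7] may use its transformed variables) and r = DΔt/Δx² in our notation: replacing [C]_x at time t in the second difference by the average of its values at t+1 and t−1 gives the update below. Both earlier time levels are inputs, which is where the extra memory goes.

```cpp
#include <cstddef>
#include <vector>

// One DuFort-Frankel step for 1D diffusion with r = D*dt/(dx*dx).
// prev holds time t-1, curr holds time t; the end nodes are held fixed.
std::vector<double> dufort_frankel_step_1d(const std::vector<double>& prev,
                                           const std::vector<double>& curr,
                                           double r) {
    std::vector<double> next(curr);  // keeps the fixed boundary values
    for (std::size_t i = 1; i + 1 < curr.size(); ++i) {
        // Solved from (next - prev) / (2 dt) = D (curr[i+1] - next - prev + curr[i-1]) / dx^2
        next[i] = ((1.0 - 2.0 * r) * prev[i] + 2.0 * r * (curr[i + 1] + curr[i - 1]))
                  / (1.0 + 2.0 * r);
    }
    return next;
}
```

The scheme is unconditionally stable for the diffusion equation, although accuracy still degrades for large time steps. Because it needs two starting levels, the very first step is commonly taken with a single ordinary explicit step.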

Application to this Review

For the purposes of this review, we generate an x-by-x (2D) or x-by-x-by-x (3D) grid of points at a relative concentration of 1, with the boundaries fixed at a relative concentration of 0. The grid is regular, which simplifies the calculations. As each node in the grid can be calculated independently of the others, it would be ideal to spawn as many threads as there are nodes. However, each node has to load the previous-time-step values of the nodes around it, so we parallelise over only one dimension and use an iterative loop over the rest to limit memory loads and increase simulation throughput.
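The strategy above, with OpenMP parallelising one dimension and a serial loop over the other, can be sketched for 2D as follows. This is a hypothetical illustration, not the author's listing: it assumes an n-by-n grid stored row-major in a `std::vector<double>`, a uniform r = DΔt/Δx² (r ≤ 0.25 for stability with equal spacings) and boundary nodes that are never written. Without OpenMP enabled, the guarded pragma is skipped and the loop runs serially.

```cpp
#include <vector>

// One explicit time step on an n-by-n grid stored row-major (index i*n + j).
// r = D*dt/(dx*dx). Boundary nodes are never written, so they keep their fixed
// values; `next` must be empty or already hold those boundary values.
void explicit_step_2d(const std::vector<double>& c, std::vector<double>& next,
                      int n, double r) {
    if (next.size() != c.size()) {
        next = c;  // first call: size the buffer and copy the fixed boundaries
    }
#ifdef _OPENMP
#pragma omp parallel for  // parallelise over rows (one dimension) only
#endif
    for (int i = 1; i < n - 1; ++i) {
        // Serial inner loop: walks one row contiguously through memory.
        for (int j = 1; j < n - 1; ++j) {
            const int k = i * n + j;
            next[k] = c[k] + r * (c[k - n] + c[k + n] + c[k - 1] + c[k + 1] - 4.0 * c[k]);
        }
    }
}
```

Between steps the two buffers can be exchanged with `std::swap(c, next)`, so each step reads time t and writes time t+1 without reallocating. Parallelising only the outer loop gives each thread whole rows, which keeps its memory accesses contiguous.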

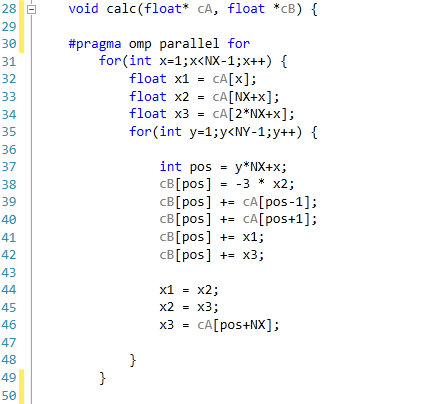

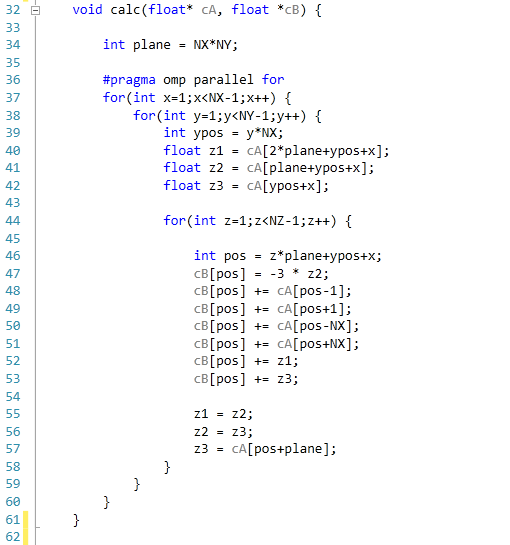

The code was written in C++ with Visual Studio 2012, using OpenMP for multithreading. The main functions that perform the calculations are as follows.

For 2D:

For 3D:
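The original listings for these functions are not reproduced in this copy. As a hypothetical illustration only, not the author's code, a 3D step following the same strategy might look like this. It parallelises the outermost dimension with OpenMP and loops serially over the other two, assuming an n×n×n grid in one `std::vector<double>` (index (i·n + j)·n + k) and r = DΔt/Δx².

```cpp
#include <vector>

// One explicit time step on an n*n*n grid stored as index i*n*n + j*n + k.
// r = D*dt/(dx*dx). Boundary nodes are never written; `next` must be empty
// or already hold the fixed boundary values.
void explicit_step_3d(const std::vector<double>& c, std::vector<double>& next,
                      int n, double r) {
    if (next.size() != c.size()) {
        next = c;  // first call: size the buffer and copy the fixed boundaries
    }
    const int plane = n * n;
#ifdef _OPENMP
#pragma omp parallel for  // parallelise over the outermost (i) dimension only
#endif
    for (int i = 1; i < n - 1; ++i) {
        for (int j = 1; j < n - 1; ++j) {
            for (int k = 1; k < n - 1; ++k) {
                const int idx = i * plane + j * n + k;
                // Six face neighbours: +/- one plane, one row, one element.
                const double sum = c[idx - plane] + c[idx + plane]
                                 + c[idx - n] + c[idx + n]
                                 + c[idx - 1] + c[idx + 1];
                next[idx] = c[idx] + r * (sum - 6.0 * c[idx]);
            }
        }
    }
}
```

Each interior node now touches values one whole plane away in memory, so the 3D kernel leans harder on the caches than the 2D one.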

For our scores, we increase the grid size from 2x2 (or 2x2x2) until memory usage reaches 2 GB. At each size, the time taken to process the grid repeatedly over many time steps is converted into a throughput in ‘million nodes per second’, and the peak value is used for our results.
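The scoring procedure can be sketched with the two hypothetical helpers below. They assume two double-precision grids (time t and t+1), take 2 GB as 2^31 bytes and define throughput as nodes processed per second divided by one million. The article does not state its data type or exact formula.

```cpp
#include <cstdint>

// Largest grid side n such that `grids` copies of an n^dims grid of
// `bytes_per_value`-byte values fit within `budget_bytes`.
// Assumes the budget holds at least a 2x2 (or 2x2x2) grid, where the sweep starts.
std::uint64_t max_grid_side(int dims, std::uint64_t budget_bytes,
                            std::uint64_t bytes_per_value, std::uint64_t grids) {
    auto bytes_for = [&](std::uint64_t side) {
        std::uint64_t nodes = 1;
        for (int d = 0; d < dims; ++d) nodes *= side;
        return nodes * bytes_per_value * grids;
    };
    std::uint64_t n = 2;
    while (bytes_for(n + 1) <= budget_bytes) ++n;
    return n;
}

// Throughput in million nodes per second for `steps` time steps over
// `nodes` grid nodes that took `seconds` to process.
double million_nodes_per_second(std::uint64_t nodes, std::uint64_t steps, double seconds) {
    return static_cast<double>(nodes) * static_cast<double>(steps) / seconds / 1e6;
}
```

Under these assumptions the sweep tops out at a 512x512x512 grid in 3D and an 11585x11585 grid in 2D. A 1000x1000 grid run for 100 steps in 2 seconds would score 50 million nodes per second.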

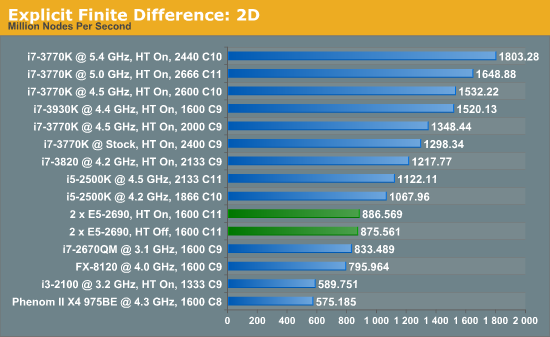

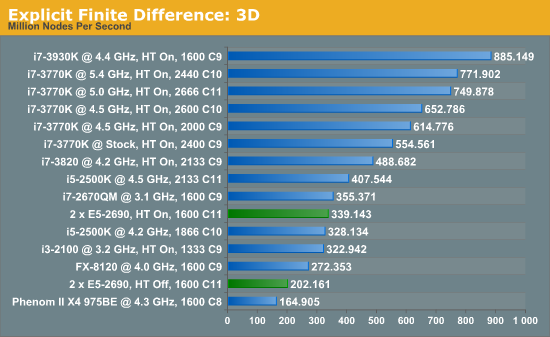

Firstly, the results for our 2D and 3D explicit finite difference simulations are shocking. In 3D, the dual-processor system is beaten by a mobile i7-2670QM (!). In both 2D and 3D, fast access to main memory and the L3 cache appears to be the top priority. Each processor in the DP machine has to go out to memory whenever it needs a value that is not in its cache, whereas a single-processor machine can serve those values from its L3 cache if the simulation fits wholly inside it and the processor can predict which values are required next.

It should also be noted that enabling HyperThreading gave a better than 50% increase in 3D throughput on the dual-processor machine.


64 Comments


Hulk - Saturday, January 5, 2013

I had no idea you were so adept with mathematics. "Consider a point in space..." Reading this brought me back to Finite Element Analysis in college! I am very impressed. Being an ME I would have preferred some flow models using the Navier-Stokes equations, but hey, I like chemistry as well.

IanCutress - Saturday, January 5, 2013

I never did any FEM so wouldn't know where to start. The next angle of testing would have been using a C++ AMP Fluid Dynamics Simulation and adjusting the code from the SDK example like with the n-Body testing. If there is enough interest, I could spend a few days organising it for the normal motherboard reviews :)

Ian

mayankleoboy1 - Saturday, January 5, 2013 - link

How the frick did you get the i7-3770K to *5.4GHZ* ? :shock:

How the frick did you get the i7-3770K to *5.0GHZ* ? :shock:

IanCutress - Saturday, January 5, 2013

A few members of the Overclock.net HWBot team helped testing by running my benchmark while they were using DICE/LN2/Phase Change for overclocking contests (i.e. not 24/7 runs). The i7-3770K will go over 7 GHz if (a) you get a good chip, (b) cool it down enough, and (c) know what you are doing. If you're interested in competitive overclocking, head over to HWBot, Xtreme Systems or Overclock.net - there are plenty of people with info to help you get started.

Ian

JlHADJOE - Tuesday, January 8, 2013

The incredible performance of those overclocked Ivy Bridge systems here really hammers home the importance of raw IPC. You can spend a lot of time optimizing code, but IPC is free speed when it's available.

jd_tiger - Saturday, January 5, 2013

http://www.youtube.com/watch?v=Ccoj5lhLmSQ

smonsees - Saturday, January 5, 2013

You might try modifying your algorithm to pin the data to a specific core (therefore cache) to keep the thrashing as low as possible. Google "processor affinity c++". I will admit this adds complexity to your straightforward algorithm. In C#, I would use a parallel loop with a range partition to do it as a starting point: http://msdn.microsoft.com/en-us/library/dd560853.a...

nickgully - Saturday, January 5, 2013

Mr. Cutress,

Do you think that, with all the virtualized CPUs available, researchers will still build their own systems, as they are something concrete to put into a grant application, versus the power-by-the-hour of cloud computing?

Thanks.

IanCutress - Saturday, January 5, 2013

We examined both scenarios. Our university had cluster time to buy, and there is always the Amazon cloud. In our calculation, getting a 16 thread machine from Dell paid for itself in under six months of continuous running, and would not require a large adjustment in the way people were currently coding (i.e. staying in Windows rather than moving to Linux), and could also be passed down the research group when newer hardware is released.

If you are using production level code and manipulating it each time to get results, and you can guarantee the results will be good each time, then power-by-the-hour could work. As we were constantly writing and testing new code for different scenarios, the build/buy your own workstation won out. Having your own system also helps in building GPU codes, if you want to buy a better GPU card it is easier to swap out rather than relying on a cloud computing upgrade.

Ian

jtv - Sunday, January 6, 2013

One big consideration is who the researchers are. I work in x-ray spectroscopy (as a computational theorist). Experimentalists in this field use some of our codes without wanting to bother with having big computational resources. We have looked at trying to provide some of our codes through some cloud-based service so that it can be used on demand.

Otherwise I would agree with Ian's reply. When I'm improving code, debugging code, or trying to implement new theoretical approaches I absolutely want my own hardware to do it on.