Gigabyte GA-7PESH1 Review: A Dual Processor Motherboard through a Scientist’s Eyes

by Ian Cutress on January 5, 2013 10:00 AM EST - Posted in: Motherboards, Gigabyte, C602

For the purpose of this review, I delved into C++ AMP as a natural extension of my GPU programming experience. For users wanting to go down the GPU programming route, C++ AMP is a great way to get involved. As a high-level language it is easy enough to learn, and the book on sale as well as the MSDN blogs online are very helpful, perhaps more so than for CUDA.

Part of the available code online for C++ AMP revolves around n-body simulations, as the basis of an n-body simulation maps nicely to parallel processors such as multi-CPU platforms and GPUs. For this review, I was able to strip out the code from the n-body example provided and run some numbers. Many thanks to Boby George and Jonathan Emmett from Microsoft for their help.

The n-Body Simulation

When a number of massive bodies are in space, they interact with each other through the force of gravity. Thus when a star cluster forms, the interaction of every large mass with every other large mass defines the speed at which these bodies approach each other. When dealing with millions or billions of stars on such a large scale, the movement of each star can be simulated using the physical laws that describe these interactions.

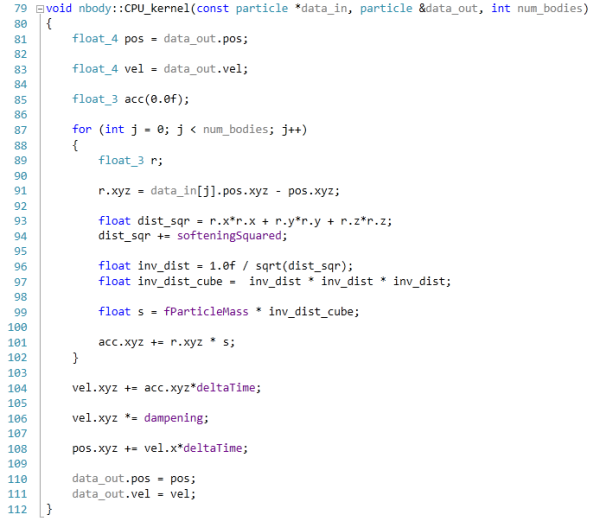

n-Body simulation is a large field of calculation with many different computational methods optimized for speed, memory usage or bus transfer, on top of the different algorithms that can be used to represent such a scenario. Typically one might expect the running time of a simulation to be O(n^2), as each particle in the simulation has to interact gravitationally with every other particle. Some computational methods reduce this: because the effect of gravity is inversely proportional to the square of the distance, distant particles contribute little and only the localized area needs to be known in detail. Other, more complex solutions deal with general relativity. I am an expert in neither gravity simulations nor relativity, but the solution used today is the full O(n^2) solution.

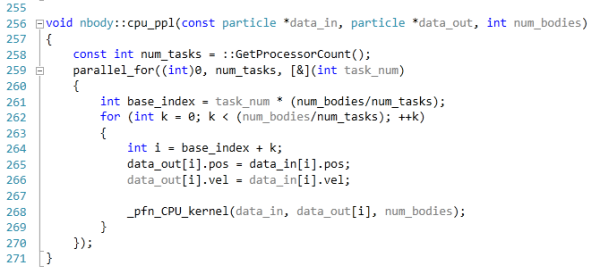
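As a rough illustration of why the full solution costs O(n^2), here is a minimal sketch of a direct-sum acceleration pass. It is not the code from the Microsoft sample: the `Particle` and `Vec3` types, the `accelerations` function and the `softening` parameter (a small length that keeps the result finite when two particles nearly coincide) are all assumptions made for this example.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical particle layout for illustration; not the article's data structure.
struct Particle {
    float x, y, z;  // position
    float mass;
};

struct Vec3 {
    float x, y, z;
};

// Direct-sum gravitational acceleration: every particle visits every other
// particle, so the work grows as O(n^2).
// `g` is the gravitational constant in the caller's units. `softening` is an
// assumed small length that avoids a division by zero at tiny separations.
std::vector<Vec3> accelerations(const std::vector<Particle>& p, float g, float softening)
{
    assert(softening > 0.0f);
    std::vector<Vec3> acc(p.size(), Vec3{0.0f, 0.0f, 0.0f});
    const float eps2 = softening * softening;

    for (std::size_t i = 0; i < p.size(); ++i) {
        for (std::size_t j = 0; j < p.size(); ++j) {
            if (i == j) continue;  // a particle does not attract itself
            const float dx = p[j].x - p[i].x;
            const float dy = p[j].y - p[i].y;
            const float dz = p[j].z - p[i].z;
            const float r2 = dx * dx + dy * dy + dz * dz + eps2;
            const float inv_r = 1.0f / std::sqrt(r2);
            // a_i += g * m_j * (r_j - r_i) / |r|^3
            const float s = g * p[j].mass * inv_r * inv_r * inv_r;
            acc[i].x += s * dx;
            acc[i].y += s * dy;
            acc[i].z += s * dz;
        }
    }
    return acc;
}
```

Calling `accelerations(particles, 1.0f, 1e-3f)` on two unit masses one unit apart gives equal and opposite accelerations of magnitude close to 1, which is a quick sanity check on the inverse-square behaviour.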

The code provided detects whether the processor is SSE2 or SSE4 capable, and runs the corresponding code path. Here is an example of the multi-CPU code, using PPL (the Parallel Patterns Library), and the non-SSE enabled function:

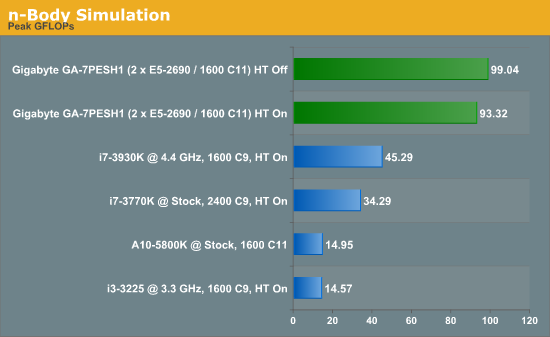
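The original listing is only available as an image. As a stand-in, here is a minimal sketch of the same idea, splitting the outer particle loop across CPU threads. It uses standard `std::thread` rather than PPL so that it builds outside Visual Studio; in PPL the equivalent partitioning would typically be expressed with `concurrency::parallel_for`. The names `Body`, `Accel`, `accumulate_range` and `accelerations_parallel` are hypothetical, the units assume G = 1, and the softening term is an assumption. None of this is the code from the Microsoft sample.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <thread>
#include <vector>

// Hypothetical types for illustration only.
struct Body  { float x, y, z, mass; };
struct Accel { float x, y, z; };

// Compute accelerations for bodies in [begin, end). Every thread reads the
// whole `bodies` array but writes only its own slice of `out`, so no locking
// is needed. With eps2 > 0 the j == i term contributes exactly zero.
void accumulate_range(const std::vector<Body>& bodies, std::vector<Accel>& out,
                      std::size_t begin, std::size_t end, float eps2)
{
    for (std::size_t i = begin; i < end; ++i) {
        float ax = 0.0f, ay = 0.0f, az = 0.0f;
        for (std::size_t j = 0; j < bodies.size(); ++j) {
            const float dx = bodies[j].x - bodies[i].x;
            const float dy = bodies[j].y - bodies[i].y;
            const float dz = bodies[j].z - bodies[i].z;
            const float r2 = dx * dx + dy * dy + dz * dz + eps2;
            const float inv_r = 1.0f / std::sqrt(r2);
            const float s = bodies[j].mass * inv_r * inv_r * inv_r;  // G = 1 assumed
            ax += s * dx;
            ay += s * dy;
            az += s * dz;
        }
        out[i] = Accel{ax, ay, az};
    }
}

// Split the outer loop into contiguous chunks, one per worker thread.
std::vector<Accel> accelerations_parallel(const std::vector<Body>& bodies,
                                          float softening, unsigned thread_count)
{
    assert(softening > 0.0f && thread_count > 0);
    const std::size_t n = bodies.size();
    const float eps2 = softening * softening;
    std::vector<Accel> out(n, Accel{0.0f, 0.0f, 0.0f});
    const std::size_t chunk = (n + thread_count - 1) / thread_count;

    std::vector<std::thread> workers;
    for (unsigned t = 0; t < thread_count; ++t) {
        const std::size_t begin = std::min(n, static_cast<std::size_t>(t) * chunk);
        const std::size_t end = std::min(n, begin + chunk);
        if (begin == end) break;  // more threads than work
        workers.emplace_back([&bodies, &out, begin, end, eps2] {
            accumulate_range(bodies, out, begin, end, eps2);
        });
    }
    for (std::thread& w : workers) w.join();
    return out;
}
```

Because each output element is produced by exactly one thread in a fixed order, the result does not depend on `thread_count`, which makes the sketch easy to check against a single-threaded run.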

This code is run using a simulation of 10240 particles of equal mass. The output for this code is in terms of GFLOPs, and the result recorded was the peak GFLOPs value.
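To give a feel for how a GFLOPs figure can be derived from such a run, here is a small sketch. It assumes the common convention of counting a fixed number of floating-point operations per pairwise interaction; the function name `gflops` and the figure of 20 operations per interaction used below are assumptions for illustration, not values taken from the benchmark code.

```cpp
#include <cassert>
#include <cstdint>

// Estimated throughput for one O(n^2) simulation step.
// `flops_per_interaction` is an assumed accounting convention, and the
// interaction count is taken as n * n (self-interactions included).
double gflops(std::uint64_t particles, double flops_per_interaction, double seconds_per_step)
{
    assert(seconds_per_step > 0.0);
    const double interactions =
        static_cast<double>(particles) * static_cast<double>(particles);
    return interactions * flops_per_interaction / seconds_per_step / 1e9;
}
```

With 10240 particles there are 10240 × 10240 = 104,857,600 interactions per step. Under the assumed 20 operations per interaction that is roughly 2.1 billion operations, so a machine completing one step per second would report about 2.1 GFLOPs from `gflops(10240, 20.0, 1.0)`.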

In the case of our dual processor system, disabling HyperThreading gives a modest 6% boost, suggesting that the cache sizes of the processors used are slightly too small. Note that for this simulation, the data of every particle is stored in the lowest level of cache possible and read by each particle, and the main write is pushed out to main memory. For the next step, a copy of main memory is again made to the L3 cache of each processor and the process is repeated. For this type of task, dual processor systems are ideal, but as with the Brownian motion simulation, moving the work onto a GPU gets an even better result (700 GFLOPs on a GTX 560).

64 Comments

Hulk - Saturday, January 5, 2013 - link

I had no idea you were so adept with mathematics. "Consider a point in space..." Reading this brought me back to Finite Element Analysis in college! I am very impressed. Being a ME I would have preferred some flow models using the Navier-Stokes equations, but hey I like chemistry as well.

IanCutress - Saturday, January 5, 2013 - link

I never did any FEM so wouldn't know where to start. The next angle of testing would have been using a C++ AMP Fluid Dynamics Simulation and adjusting the code from the SDK example like with the n-Body testing. If there is enough interest, I could spend a few days organising it for the normal motherboard reviews :)

Ian

mayankleoboy1 - Saturday, January 5, 2013 - link

How the frick did you get the i7-3770K to *5.4GHZ* ? :shock:

IanCutress - Saturday, January 5, 2013 - link

A few members of the Overclock.net HWBot team helped testing by running my benchmark while they were using DICE/LN2/Phase Change for overclocking contests (i.e. not 24/7 runs). The i7-3770K will go over 7 GHz if (a) you get a good chip, (b) cool it down enough, and (c) know what you are doing. If you're interested in competitive overclocking, head over to HWBot, Xtreme Systems or Overclock.net - there are plenty of people with info to help you get started.

Ian

JlHADJOE - Tuesday, January 8, 2013 - link

The incredible performance of those overclocked Ivy bridge systems here really hammers home the importance of raw IPC. You can spend a lot of time optimizing code, but IPC is free speed when it's available.

jd_tiger - Saturday, January 5, 2013 - link

http://www.youtube.com/watch?v=Ccoj5lhLmSQ

smonsees - Saturday, January 5, 2013 - link

You might try modifying your algorithm to pin the data to a specific core (therefore cache) to keep the thrashing as low as possible. Google "processor affinity c++". I will admit this adds complexity to your straightforward algorithm. In C#, I would use a parallel loop with a range partition to do it as a starting point: http://msdn.microsoft.com/en-us/library/dd560853.a...

nickgully - Saturday, January 5, 2013 - link

Mr. Cutress,

Do you think with all the virtualized CPU available, researchers will still build their own system as it is something concrete to put into a grant application, versus the power-by-the-hour of cloud computing?

Thanks.

IanCutress - Saturday, January 5, 2013 - link

We examined both scenarios. Our university had cluster time to buy, and there is always the Amazon cloud. In our calculation, getting a 16 thread machine from Dell paid for itself in under six months of continuous running, and would not require a large adjustment in the way people were currently coding (i.e. staying in Windows rather than moving to Linux), and could also be passed down the research group when newer hardware is released.

If you are using production level code and manipulating it each time to get results, and you can guarantee the results will be good each time, then power-by-the-hour could work. As we were constantly writing and testing new code for different scenarios, the build/buy your own workstation won out. Having your own system also helps in building GPU codes, if you want to buy a better GPU card it is easier to swap out rather than relying on a cloud computing upgrade.

Ian

jtv - Sunday, January 6, 2013 - link

One big consideration is who the researchers are. I work in x-ray spectroscopy (as a computational theorist). Experimentalists in this field use some of our codes without wanting to bother with having big computational resources. We have looked at trying to provide some of our codes through some cloud-based service so that it can be used on demand.

Otherwise I would agree with Ian's reply. When I'm improving code, debugging code, or trying to implement new theoretical approaches I absolutely want my own hardware to do it on.