The OCZ Vertex 3 Review (120GB)

by Anand Lal Shimpi on April 6, 2011 6:32 PM EST

The NAND Matrix

It's not common for SSD manufacturers to give you a full list of all of the different NAND configurations they ship. Regardless of how much we appreciate transparency, it's rarely offered in this industry. Manufacturers love to package all information into nice marketable nuggets, and the truth doesn't always have the right PR tone to it. Despite what I just said, below is a table of every NAND device OCZ ships in its Vertex 2 and Vertex 3 products:

OCZ Vertex 2 & Vertex 3 NAND Usage

| NAND | Process Node | Capacities |
|---|---|---|
| Intel L63B | 34nm | Up to 240GB |
| Micron L63B | 34nm | Up to 480GB |
| Spectek L63B | 34nm | 240GB to 360GB |
| Hynix | 32nm | Up to 120GB |
| Micron L73A | 25nm | Up to 120GB |
| Micron L74A | 25nm | 160GB to 480GB |
| Intel L74A | 25nm | 160GB to 480GB |

The data came from OCZ and I didn't have to sneak around to get it; it was given to me by Alex Mei, Executive Vice President of OCZ.

You've seen the end result, now let me explain how we got here.

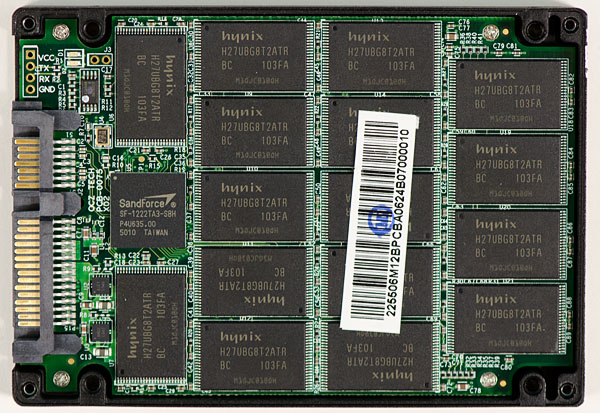

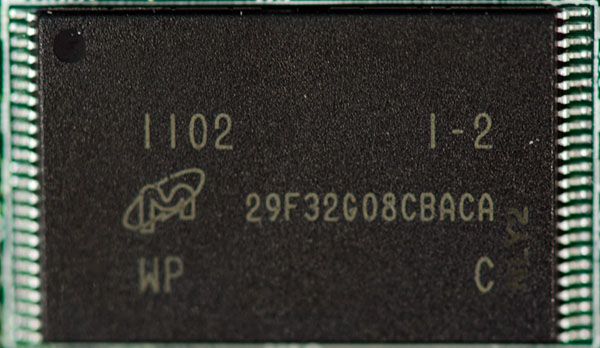

OCZ accidentally sent me a 120GB Vertex 2 built with 32nm Hynix NAND. I say it was an accident because the drive was supposed to be one of the new 25nm Vertex 2s, but there was a screwup in ordering and I ended up with this one. Here's a shot of its internals:

You'll see that there are a ton of NAND devices on the board. Thirty-two to be exact. That's four per channel. Do the math and you'll see we've got 32 x 4GB 32nm MLC NAND die on the PCB. This drive has the same number of NAND die per package as the new 25nm 120GB Vertex 2, so in theory performance should be the same. It isn't, however:

Vertex 2 NAND Performance Comparison

| NAND | AT Storage Bench Heavy 2011 | AT Storage Bench Light 2011 |
|---|---|---|
| 34nm IMFT | 120.1 MB/s | 155.9 MB/s |
| 25nm IMFT | 110.9 MB/s | 145.8 MB/s |
| 32nm Hynix | 92.1 MB/s | 125.6 MB/s |

Performance is measurably worse. You'll notice that I also threw in some 34nm IMFT numbers to show just how far performance has fallen since the old launch NAND.
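To put "measurably worse" into numbers, here is a minimal Python sketch that expresses each drive's result as a percentage change against the 34nm IMFT drive. The throughput figures are copied from the comparison table; the names `results`, `percent_change` and `deltas_vs_baseline` are made up for this illustration.

```python
# Throughput figures (MB/s) copied from the Vertex 2 comparison table.
results = {
    "34nm IMFT": {"heavy": 120.1, "light": 155.9},
    "25nm IMFT": {"heavy": 110.9, "light": 145.8},
    "32nm Hynix": {"heavy": 92.1, "light": 125.6},
}

BASELINE = "34nm IMFT"


def percent_change(value, baseline):
    """Return the change of value relative to baseline, in percent."""
    return (value - baseline) / baseline * 100.0


def deltas_vs_baseline(data, baseline_name):
    """Compute the per-workload percentage change of every drive vs. the baseline."""
    base = data[baseline_name]
    return {
        name: {workload: percent_change(score, base[workload])
               for workload, score in scores.items()}
        for name, scores in data.items()
    }


deltas = deltas_vs_baseline(results, BASELINE)
for name, d in deltas.items():
    print(f"{name:>10}: heavy {d['heavy']:+.1f}%  light {d['light']:+.1f}%")
```

By this measure the 25nm IMFT drive gives up roughly 7 to 8 percent against the launch NAND, while the 32nm Hynix drive gives up roughly 19 to 23 percent, depending on the workload.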

Why not just keep using 34nm IMFT NAND? Ultimately that product won't be available. It's like asking for 90nm CPUs today. The whole point of Moore's Law is to transition to smaller manufacturing processes as quickly as possible.

Why is the Hynix 32nm NAND so much slower? That part is a little less clear to me. For starters we're only dealing with one die per package, which we've established can have a negative performance impact. On top of that, SandForce's firmware may only be optimized for a couple of NAND devices. OCZ admitted that around 90% of all Vertex 2 shipments use Intel or Micron NAND, and as a result SandForce's firmware optimization is likely targeted at those NAND types first and foremost. There are differences in NAND interfaces as well as signaling speeds, which could contribute to performance differences unless a controller takes these things into account.

The 25nm NAND is slower than the 34nm offerings for a number of reasons. For starters page size increased from 4KB to 8KB with the transition to 25nm. Intel used this transition as a way to extract more performance out of the SSD 320, however that may have actually impeded SF-1200 performance as the firmware architecture wasn't designed around 8KB page sizes. I suspect SandForce just focused on compatibility here and not performance.

Secondly, 25nm NAND is physically slower than 34nm NAND:

NAND Performance Comparison

| Operation | Intel 34nm NAND | Intel 25nm NAND |
|---|---|---|
| Read | 50 µs | 50 µs |
| Program | 900 µs | 1200 µs |
| Block Erase | 2 ms | 3 ms |

Program and erase latency are both higher, although admittedly you're working with much larger page sizes (it's unclear whether Intel's 1200 µs figure is for a full page program or a partial program).
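The page size caveat matters more than it might seem. The Python sketch below converts program latency into per-die program throughput under two assumptions: that the 1200 µs figure covers a full 8KB page, and, as a purely hypothetical worst case, that a controller not designed around 8KB pages only places 4KB of useful data in each page. It ignores bus transfer time, multi-plane operations and interleaving, and the function and variable names are illustrative only.

```python
def program_throughput_mb_s(bytes_programmed, program_us):
    """Per-die program throughput in MB/s (1 MB = 10**6 bytes)."""
    seconds = program_us * 1e-6
    return bytes_programmed / seconds / 1e6


KB = 1024

# Program latencies come from the NAND comparison table; page sizes from the
# text (4KB at 34nm, 8KB at 25nm). Assumes each latency covers a full page.
full_page_34nm = program_throughput_mb_s(4 * KB, 900)
full_page_25nm = program_throughput_mb_s(8 * KB, 1200)

# Hypothetical worst case: only 4KB of useful data lands in each 8KB page.
half_filled_25nm = program_throughput_mb_s(4 * KB, 1200)

print(f"34nm, full 4KB page : {full_page_34nm:.2f} MB/s per die")
print(f"25nm, full 8KB page : {full_page_25nm:.2f} MB/s per die")
print(f"25nm, 4KB of 8KB    : {half_filled_25nm:.2f} MB/s per die")
```

Under the full-page assumption the 25nm die actually programs more data per second (about 6.8 MB/s versus about 4.6 MB/s). It only falls behind (about 3.4 MB/s) if half of every page goes unused, which is one way a firmware not built around 8KB pages could end up slower.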

The bad news is that eventually all of the 34nm IMFT drives will dry up. The worse news is that the 25nm IMFT drives, even with the same number of NAND devices on board, are lower in performance. And the worst news is that the drives that use 32nm Hynix NAND are the slowest of them all.

I have to mention here that this issue isn't exclusive to OCZ. All other SF drive manufacturers are faced with the same potential problem as they too must shop around for NAND and can't guarantee that they will always ship the same NAND in every single drive.

The Problem With Ratings

You'll notice that although the three NAND types I've tested perform differently in our Heavy 2011 workload, a quick run through Iometer reveals that they perform nearly identically:

Vertex 2 NAND Performance Comparison

| NAND | AT Storage Bench Heavy 2011 | Iometer 128KB Sequential Write |
|---|---|---|
| 34nm IMFT | 120.1 MB/s | 214.8 MB/s |
| 25nm IMFT | 110.9 MB/s | 221.8 MB/s |
| 32nm Hynix | 92.1 MB/s | 221.3 MB/s |

SandForce's architecture works by reducing the amount of data that actually has to be written to the NAND. When writing highly compressible data, not all NAND devices are active and we're not bound by the performance of the NAND itself since most of it is actually idle. SandForce is able to hide even significant performance differences between NAND implementations. This is likely why SandForce is more focused on NAND compatibility than performance across devices from all vendors.
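As a rough illustration of why compressible data takes pressure off the NAND, the Python sketch below compresses a repetitive 128KB-class buffer and a pseudo-random one, then compares how many bytes would remain to be stored. It uses `zlib` purely as a stand-in: SandForce's actual data reduction technique is proprietary and is not what this code implements, and `stored_fraction` is a helper invented for the example.

```python
import random
import zlib

BLOCK = 128 * 1024  # matches the 128KB transfer size used in the Iometer test


def stored_fraction(payload):
    """Fraction of the original bytes left after zlib compression (stand-in only)."""
    return len(zlib.compress(payload)) / len(payload)


rng = random.Random(0)  # fixed seed so repeated runs use the same data

# Highly repetitive data, similar in spirit to a compressible benchmark pattern.
compressible = b"AnandTech " * (BLOCK // 10)

# Pseudo-random bytes, which a general-purpose compressor cannot shrink.
incompressible = bytes(rng.getrandbits(8) for _ in range(BLOCK))

print(f"compressible   : {stored_fraction(compressible):.3f} of original size")
print(f"incompressible : {stored_fraction(incompressible):.3f} of original size")
```

The repetitive buffer shrinks to a small fraction of its size, while the random buffer does not shrink at all. A controller that only has to commit the reduced data can leave much of the NAND idle in the first case, but has to drive every device in the second.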

Let's see what happens, however, if we write incompressible data to these three drives:

Vertex 2 NAND Performance Comparison

| NAND | Iometer 128KB Sequential Write (Incompressible Data) | Iometer 128KB Sequential Write |
|---|---|---|
| 34nm IMFT | 136.6 MB/s | 214.8 MB/s |
| 25nm IMFT | 118.5 MB/s | 221.8 MB/s |
| 32nm Hynix | 95.8 MB/s | 221.3 MB/s |

It's only when you force SandForce's controller to write as much data in parallel as possible that you see the performance differences between NAND vendors. As a result, the label on the back of your Vertex 2 box isn't lying - whether you have 34nm IMFT, 25nm IMFT or 32nm Hynix the drive will actually hit the same peak performance numbers. The problem is that the metrics depicted on the spec sheets aren't adequate to be considered fully honest.
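One way to see how much the compressible-data rating hides is to compute what share of that rated-style number each drive keeps once the data is incompressible. The short Python sketch below does this with the Iometer figures from the two tables; the `writes` and `retained` names are illustrative only.

```python
# (incompressible MB/s, compressible MB/s) for the Iometer 128KB sequential
# write test, copied from the comparison tables.
writes = {
    "34nm IMFT": (136.6, 214.8),
    "25nm IMFT": (118.5, 221.8),
    "32nm Hynix": (95.8, 221.3),
}

# Share of the compressible-data throughput that survives incompressible data.
retained = {
    name: incompressible / compressible
    for name, (incompressible, compressible) in writes.items()
}

for name, share in retained.items():
    print(f"{name:>10}: keeps {share:.1%} of its compressible write speed")
```

The 34nm IMFT drive keeps about 64 percent of its compressible write speed, the 25nm IMFT drive about 53 percent and the 32nm Hynix drive about 43 percent, even though all three post essentially the same headline number.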

A quick survey of all SF-1200 based drives shows the same problem. Everyone rates according to maximum performance specifications and no one provides any hint of what you're actually getting inside the drive.

SF-1200 Drive Rating Comparison

| 120GB Drive | Rated Sequential Read Speed | Rated Sequential Write Speed |
|---|---|---|
| Corsair Force F120 | 285 MB/s | 275 MB/s |
| G.Skill Phoenix Pro | 285 MB/s | 275 MB/s |
| OCZ Vertex 2 | Up to 280 MB/s | Up to 270 MB/s |

I should stop right here and mention that specs are rarely all that honest on the back of any box. Whether we're talking about battery life or SSD performance, if specs told the complete truth then I'd probably be out of a job. If one manufacturer is totally honest, its competitors will just capitalize on the aforementioned honesty by advertising better looking specs. And thus all companies are forced to bend the truth because if they don't, someone else will.

153 Comments

Xcellere - Wednesday, April 6, 2011

It's too bad the lower capacity drives aren't performing as well as the 240 GB version. I don't have a need for a single high capacity drive so the expenditure in added space is unnecessary for me. Oh well, that's what you get for wanting bleeding-edge tech all the time.

Kepe - Wednesday, April 6, 2011

If I've understood correctly, they're using 1/2 of the NAND devices to cut drive capacity from 240 GB to 120 GB.

My question is: why don't they use the same amount of NAND devices with 1/2 the capacity instead? Again, if I have understood correctly, that way the performance would be identical compared to the higher capacity model.

Is NAND produced in only one capacity packages or is there some other reason not to use NAND devices of differing capacities?

dagamer34 - Wednesday, April 6, 2011

Because price scaling makes it more cost-effective to use fewer, more dense chips than separate smaller, less dense chips as the more chips made, the cheaper they eventually become.

Like Anand said, this is why you can't just ask for a 90nm CPU today, it's just too old and not worth making anymore. This is also why older memory gets more expensive when it's not massively produced anymore.

Kepe - Wednesday, April 6, 2011

But couldn't they just make smaller dies? Just like there are different sized CPU/GPU dies for different amounts of performance. Cut the die size in half, fit 2x the dies per wafer, sell for 50% less per die than the large dies (i.e. get the same amount of money per wafer).

A5 - Wednesday, April 6, 2011

No reason for IMFT to make smaller dies - they sell all of the large dies coming out of the fab (whether to themselves or 3rd parties), so why bother making a smaller one?

vol7ron - Wednesday, April 6, 2011

You're missing the point on economies of scale.

Having one size means you don't have leftover parts, or have to pay for a completely different process (which includes quality control).

These things are already expensive, adding the logistical complexity would only drive the prices up. Especially, since there are noticeable differences in the manufacturing process.

I guess they could take the poorer performing silicon and re-market it. Like how Anand mentioned that they take poorer performing GPUs and just sell them at a lower clockrate/memory capacity, but it could be that the NAND production is more refined and doesn't have that large of a difference.

Regardless, I think you mentioned the big point: inner RAIDs improve performance. Why 8 chips, why not more? Perhaps heat has something to do with it, and (of course) power would be the other reason, but it would be nice to see higher performing, more power-hungry SSDs. There may also be a performance benefit in larger chips too, though, sort of like DRAM where 1x2GB may perform better than 2x1GB (not interlaced).

I'm still waiting for the manufacturers to get fancy, perhaps with multiple controllers and speedier DRAM. Where's the Vertex3 Colossus.

marraco - Tuesday, April 12, 2011

Smaller dies would improve yields, and since they could enable full speed, it would be more competitive.

A bigger chip with a flaw may invalidate the die, but if divided in two smaller chips it would recover part of it.

On the other side, yields are probably not as big a problem, since bad sectors can be replaced with good ones by the controller.

Kepe - Wednesday, April 6, 2011

Anand, I'd like to thank you on behalf of pretty much every single person on the planet. You're doing an amazing job with making companies actually care about their customers and do what is right.

Thank you so much, and keep up the amazing work.

- Kepe

dustofnations - Wednesday, April 6, 2011

Thank God for a consumer advocate with enough clout for someone important to listen to them.

All too often valid and important complaints fall at the first hurdle due to dumb PR/CS people who filter out useful information. Maybe this is because they assume their customers are idiots, or that it is too much hassle, or perhaps don't have the requisite technical knowledge to act sensibly upon complex complaints.

Kepe - Wednesday, April 6, 2011

I'd say the reason is usually that when a company has sold you its product, they suddenly lose all interest in you until they come up with a new product to sell. Apple used to be a very good example with its battery policy. "So, your battery died? We don't sell new or replace dead batteries, but you can always buy the new, better iPod."

It's this kind of ignorance towards the consumers that is absolutely appalling, and Anand is doing a great job at fighting for the consumer's rights. He should get some sort of an award for all he has done.