Intel Architecture Day 2021: Alder Lake, Golden Cove, and Gracemont Detailed

by Dr. Ian Cutress & Andrei Frumusanu on August 19, 2021 9:00 AM EST

Gracemont Microarchitecture (E-Core) Examined

The smaller core as part of Intel’s hybrid Alder Lake design is called an E-core, and is built on the Gracemont microarchitecture. It forms part of Intel’s Atom family of processors, and is a significant microarchitectural jump over the previous Atom core design called Tremont.

- 2008: Bonnell

- 2011: Saltwell

- 2013: Silvermont

- 2015: Airmont

- 2016: Goldmont

- 2017: Goldmont Plus

- 2020: Tremont

- 2021: Gracemont

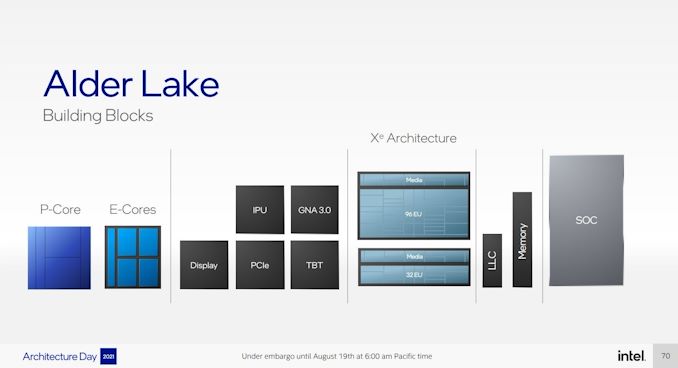

Based on Intel’s diagrams, the company is pitching that in the space it would normally take to fit one Golden Cove P-core, it can instead fit a four-core cluster of E-cores along with a 4 MB L2 cache shared between them.

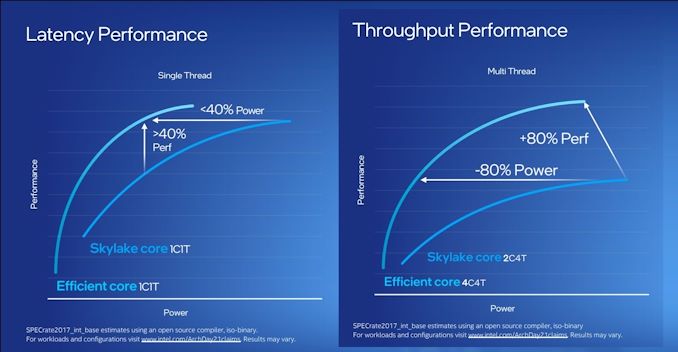

For performance, Intel has some pretty wild claims. It splits them up into single thread and multi-thread comparisons using SPECrate2017_int.

When comparing 1C1T of Gracemont against 1C1T of Skylake, Intel’s numbers suggest:

- +40% performance at iso-power (using a middling frequency)

- 40% less power* at iso-performance (peak Skylake performance)

*'<40%' is now understood to mean 'below 40% power'

When comparing 4C4T of Gracemont against 2C4T of Skylake, Intel’s numbers suggest:

- +80% performance peak vs peak

- 80% less power at iso-performance (peak Skylake performance)

We pushed the two Intel slides together to show how they presented this data.

Going by these graphics, at peak single-thread performance we should see around 8% better than Skylake while consuming just over half the power. Look for Cinebench R20 scores for one Gracemont thread of around 478, then (the Skylake 6700K scored 443). With +8% for single thread in mind, the +80% in MT comparing four cores of Gracemont to two fully loaded Skylake cores seems a little low, given that we’ve got double the physical cores with Gracemont compared to Skylake here. But there’s likely some additional performance regression with the cache structure on the new Atom core, which we’ll get to later on this page.
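The arithmetic behind those estimates can be sketched quickly. This is only a back-of-the-envelope check using the figures quoted above; the perfect-scaling step is an assumption made here for illustration, not something Intel has stated.

```python
# Figures quoted in the text above.
skylake_r20_1t = 443   # Cinebench R20 single-thread score cited for the 6700K
st_uplift = 0.08       # ~+8% peak single thread, as read off Intel's slide
mt_uplift = 0.80       # +80% for 4C4T Gracemont vs 2C4T Skylake

# Projected single-thread score for one Gracemont thread.
gracemont_r20_1t = skylake_r20_1t * (1 + st_uplift)

# ASSUMPTION (illustrative only): four Gracemont cores scale perfectly,
# measured in units of "one Skylake thread at peak".
gracemont_4c_ideal = 4 * (1 + st_uplift)

# Under that assumption, the +80% claim implies this much throughput
# for the 2C4T Skylake baseline, in the same units.
implied_skylake_2c4t = gracemont_4c_ideal / (1 + mt_uplift)

print(f"Projected Gracemont 1T score: {gracemont_r20_1t:.0f}")
print(f"Ideal 4C Gracemont throughput: {gracemont_4c_ideal:.2f}x one Skylake thread")
print(f"Implied 2C4T Skylake throughput: {implied_skylake_2c4t:.2f}x one Skylake thread")
```

Any shortfall in four-core scaling on Gracemont, such as contention in the shared L2, would pull the ideal figure down, which is one way to read the gap discussed above.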

These claims are substantial. Intel hasn’t compared the new Atom core generation on generation, because it felt that having AVX2 support would put the new Atom at a significant advantage. But what Intel is saying with these graphs is that we should expect better-than-Skylake performance at much less power. We saw Skylake processors with up to 28 cores in HEDT, which makes me wonder whether Intel might enable its new Atom core for that market. If that’s the case, where is our 64-core Atom version for HEDT? I’ll take one.

Front End

The big item about the Tremont front end of the core was the move to dual three-wide decode paths, enabling two concurrent streams of decode that could each support three instructions per cycle. That still remains in Gracemont, but is backed by a double-size 64 KB L1 instruction cache. This ties into the branch predictor, which enables prefetchers at all cache levels, along with a 5000-entry branch target cache which Intel says is improved over the previous generation.

Back on the decoder, Intel supports on-demand decode, which stores a history of previous decodes in the instruction cache. If recent misses are recalled at the decode stage, the on-demand stream will pull directly from the instruction cache instead, saving time. If the prefetch/decode works, the content in the instruction cache is updated; if it is doing poorly, the scope is ‘re-enabled for general fetches’ to get a better understanding of the instruction flow. This almost sounds like a micro-op cache without having a physical cache, but it has more to do with instruction streaming. Either way, the decoders can push up to six uops into the second half of the front end.

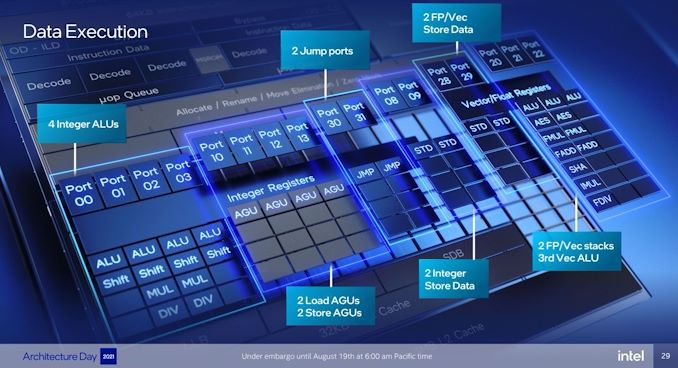

For Gracemont, the reorder buffer size has increased from 208 in Tremont to 256, which is an important aspect given that Gracemont now has a total of 17 (!) execution ports, compared to eight in Tremont. This is also significantly wider than the execution capabilities of Golden Cove's 12 ports, which is related to the disaggregated integer and FP/vector pipeline design. However, despite that width, the allocation stage feeding into the reservation stations can only process five instructions per cycle. On the return path, each core can retire eight instructions per cycle.
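One rough way to read the widths quoted so far is that the sustained rate through the pipeline can be no higher than its narrowest stage. The sketch below rests on that simplification alone. The stage names are illustrative labels rather than Intel terminology, it mixes uops and instructions as the text does, and real throughput depends on far more than these three numbers.

```python
# Per-cycle widths quoted in the text (decode = two clusters, three-wide each).
stage_width = {
    "decode": 2 * 3,
    "allocate": 5,
    "retire": 8,
}

# ASSUMPTION: steady-state throughput is capped by the narrowest stage.
narrowest_stage = min(stage_width, key=stage_width.get)
sustained_cap = stage_width[narrowest_stage]

print(f"Narrowest stage: {narrowest_stage} ({sustained_cap} per cycle)")
```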

Back End

So it’s a bit insane to have 17 execution ports here. There are a lot of repeated units as well, which means that Gracemont is expecting to see repeated instruction use and requires the parallelism to do more per cycle and then perhaps sit idle waiting for the next instructions to come down the pipe. Overall we have:

- 4 Integer ALUs (ALU/Shift), two of which can do MUL/DIV

- 4 Address Generator Units, 2 Load + 2 Store

- 2 Branch Ports

- 2 Extra Integer Store ports

- 2 Floating Point/Vector store ports

- 3 Floating Point/Vector ALUs: 3x ALUs, 2x AES/FMUL/FADD, 1x SHA/IMUL/FDIV
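As a quick sanity check, the groups in the list above can be tallied to confirm they add up to the 17 ports quoted. The dictionary keys are shorthand labels chosen here, not Intel's port names.

```python
# Port counts taken from the list above; labels are informal shorthand.
execution_ports = {
    "integer ALU (ALU/shift)": 4,
    "address generation (2 load + 2 store)": 4,
    "branch": 2,
    "integer store": 2,
    "FP/vector store": 2,
    "FP/vector ALU": 3,
}

total_ports = sum(execution_ports.values())
print(f"Total execution ports: {total_ports}")
```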

It will be interesting to see exactly how many of these can be accessed simultaneously. In previous core designs a lot of this functionality would be enabled through the same port – even Alder Lake’s P-core only has 12 execution ports, with some ports doing double duty on Vector and Integer workloads. In the P-core there is a single scheduler for both types of workloads, whereas in the E-core there are two separate schedulers, which is more akin to what we see on non-x86 core designs. It’s a tradeoff in complexity and ease-of-use.

The back-end is supported by a 32 KiB L1-Data cache, which supports a 3-cycle pointer chasing latency and 64 outstanding cache misses. There are two load and two store ports, which means 2 x 16-byte loads and 2 x 16-byte stores to the L1-D per cycle.

There is also a shared 4 MB L2 cache across all four E-cores in a cluster with 17-cycle latency. The shared L2 cache can support 64 bytes per cycle read/write per core, which Intel states is sufficient for all four cores. The new L2 supports up to 64 outstanding misses to the deeper memory subsystem – which seems fair, but has to be shared amongst the 4 cores.
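To put the cache figures above side by side, here is a small sketch of the per-cycle bandwidth and miss budget they imply. The even split of outstanding misses across cores is an assumption for illustration; the text only says the 64 entries are shared.

```python
# Figures quoted in the text above.
l1d_port_bytes = 16
load_ports, store_ports = 2, 2
cores_per_cluster = 4
l2_bytes_per_cycle_per_core = 64
l2_outstanding_misses = 64

# L1-D bandwidth per core, per cycle.
l1d_load_bytes = load_ports * l1d_port_bytes
l1d_store_bytes = store_ports * l1d_port_bytes

# Aggregate L2 traffic if all four cores hit their per-core rate at once.
l2_peak_demand_bytes = cores_per_cluster * l2_bytes_per_cycle_per_core

# ASSUMPTION: outstanding L2 misses divided evenly between the cores.
l2_misses_per_core = l2_outstanding_misses // cores_per_cluster

print(f"L1-D per core: {l1d_load_bytes} B/cycle load, {l1d_store_bytes} B/cycle store")
print(f"L2 demand with all cores at peak: {l2_peak_demand_bytes} B/cycle")
print(f"L2 outstanding misses per core (even share): {l2_misses_per_core}")
```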

Intel states that it has a Resource Director that will arbitrate cache accesses between the four cores in a cluster to ensure fairness, confirming that Intel is building these E-cores for multi-threaded performance rather than latency-sensitive scenarios where one thread might have priority.

Other Highlights

As the first Atom core to have AVX2 enabled, there are two vector ports that support FMUL and FADD (port 20 and port 21), which means that we should expect peak performance compared to previous Atoms to be substantial. The addition of VNNI-INT8 over the AVX unit means that Intel wants these E-cores to enable situations where high inference throughput is needed, such as perhaps video analysis.

Intel was keen to point out that Gracemont has all of its latest security features including Control-flow Enforcement Technology (CET), and virtualization redirects under its VT-rp feature.

Overall, Intel stated that the E-cores are tuned for voltage more than anything else (such as performance or area). This means that the E-cores are set to use a lot less power, which may help in mobile scenarios. But as mentioned before on the first page, it will depend on how much power the ring has to consume in that environment. It is worth noting that each four-core Atom cluster only has a single stop on the full ring in Alder Lake, which Intel says should not cause congestion, but it is a possibility. If each core is fully loaded, there is only 512 KB of L2 cache per core before making the jump to main memory, which suggests that the L2 might be a bottleneck in a fully loaded scenario.

223 Comments

blanarahul - Thursday, August 19, 2021 - link

> That’s Intel Thread Director. Not Intel Threat Detector, which is what I keep calling it all day, or Intel Threadripper, which I have also heard.

Never thought my nerdy interest would make me laugh.

Hifihedgehog - Thursday, August 19, 2021 - link

One takeaway as far as the rumors we have been operating on are concerned is that Raichu (who has a 90% accuracy rating for his rumors/leaks) would now appear to have been wrong with his recent leak. Sad panda face, I know. I did some analysis elsewhere and here is what I had shared:

===

The information released from Intel seems to invalidate this previous rumor above that I shared some weeks ago.

The Core i9 11900K operates at a 5.3 GHz single-core boost and gets a score of 623 in Cinebench R20.

Intel claims a 19% IPC improvement with Golden Cove over Cypress Cove (i.e. Rocket Lake's core microarchitecture). If we see the same single-core boost clock speeds of 5.3 GHz, that would equate to 741. Let's take a huge moment to stare at this astounding achievement. This is nothing to be sneezed at! This puts AMD in a very distant position as far as single-threaded performance is concerned and puts the onus on them to deliver a similar gain with Zen 4. However, switching hats from performance analyst to fact checker, this is in no wise close to the ">810" claim as stated above. To achieve a score of >810, they would need a clock speed of roughly 5.8 GHz (623 points * 1.19 IPC improvement / 5.3 GHz * 5.8 GHz). That, quite frankly, I highly doubt.

===

Link:

http://forum.tabletpcreview.com/threads/intel-news...

That said, though, getting roughly 2/3s of the way there to the rumored performance is still a colossal jump for Intel and is minimally going to have AMD in a rather painful position until Zen 4 comes around.
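The numbers in this comment can be reproduced with a few lines. The sketch below follows the commenter's own assumptions, namely that the score scales linearly with both the IPC gain and clock speed, and does not add any measured data.

```python
# Figures quoted in the comment above.
r20_11900k = 623          # Cinebench R20 1T score at 5.3 GHz boost
ipc_gain = 0.19           # Intel's claimed average IPC improvement
boost_clock_ghz = 5.3
rumored_score = 810       # the ">810" leak being checked

# ASSUMPTION (the commenter's): score scales linearly with IPC and clock.
projected_score = r20_11900k * (1 + ipc_gain)
required_clock_ghz = boost_clock_ghz * rumored_score / projected_score
score_at_5_8_ghz = projected_score / boost_clock_ghz * 5.8

# How far the projected gain gets toward the rumored gain.
fraction_of_rumor = (projected_score - r20_11900k) / (rumored_score - r20_11900k)

print(f"Projected score at 5.3 GHz: {projected_score:.0f}")
print(f"Clock needed for {rumored_score}: {required_clock_ghz:.2f} GHz")
print(f"Score at 5.8 GHz: {score_at_5_8_ghz:.0f}")
print(f"Share of the rumored gain: {fraction_of_rumor:.0%}")
```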

arayoflight - Thursday, August 19, 2021 - link

The IPC improvement won't be the same across the board, as you can see clearly in the Intel-provided chart. Some workloads will run slower than Cypress Cove and some can go as high as 60%. The 19% is an average, indicative figure and is not going to be the same in every benchmark. The >810 might well be true.
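To illustrate why a single headline figure says little about any one benchmark, here is a sketch with made-up per-workload speedups. These are hypothetical values chosen for illustration, not Intel's data. Intel's chart labels the 19% as a geometric mean, as is pointed out further down the thread, so that is what the sketch computes.

```python
from statistics import geometric_mean, median

# HYPOTHETICAL per-workload speedups (1.00 = no change); not Intel's data.
speedups = [0.97, 1.05, 1.10, 1.15, 1.20, 1.30, 1.60]

geomean_speedup = geometric_mean(speedups)
median_speedup = median(speedups)

print(f"Geometric mean: {geomean_speedup - 1:+.1%}")
print(f"Median:         {median_speedup - 1:+.1%}")
print(f"Range:          {min(speedups) - 1:+.0%} to {max(speedups) - 1:+.0%}")
```

A spread like this yields a headline gain in the high teens while individual workloads land anywhere from a slight regression to +60%, so where Cinebench sits in the range matters more than the average.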

Wrs - Thursday, August 19, 2021 - link

The 19% is a median figure. Based on the graphic from Intel, there are tasks that go over 50% faster and tasks that do about the same if not slower than Cypress Cove. How do we know for certain Cinebench is anywhere close to the median?

mattbe - Thursday, August 19, 2021 - link

Where did you see median? It says right on the graph that the 19% is a geometric mean.

mode_13h - Friday, August 20, 2021 - link

Aside from that, the same point applies. We don't know where Cinebench sits, in the range.

Hifihedgehog - Friday, August 20, 2021 - link

As a very standard rule, though, Cinebench (especially the latest R20 and R23 iterations) has tracked very closely to the mean IPC scores that Intel and AMD have advertised for the last few years.

mode_13h - Saturday, August 21, 2021 - link

Does R20 use AVX-512, though?

Spunjji - Monday, August 23, 2021 - link

@mode_13h: Yes, Cinebench R20 makes use of AVX-512. It's why some of the more AMD-flavoured commentators around the interwebs insisted that R15 was the /correct/ Cinebench to use for comparisons.

Personally I think it was a good example of the AVX-512 benefit in the "real world", i.e. it's there but not as substantial as Intel's pet benchmarks imply.

That aside, the lack of AVX-512 on ADL is another data point that suggests Raichu's R20 leak may have been highly optimistic. I always thought it sounded a bit much.

mode_13h - Tuesday, August 24, 2021 - link

> Yes, Cinebench R20 makes use of AVX-512.

Good to know. Thanks, as always!