A Timely Discovery: Examining Our AMD 2nd Gen Ryzen Results

by Ian Cutress & Ryan Smith on April 25, 2018 11:15 AM EST

Forcing HPET On, Plus Spectre and Meltdown Patches

Based on my extreme overclocking roots back in the day, my automated benchmark scripts for the past year or so have forced HPET through the OS. Given that AMD’s guidance is now that it doesn’t matter for performance, and Intel hasn’t even mentioned the issue in relation to CPU reviews, having HPET enabled was the most direct way to ensure that every benchmark result was consistent, and would not be interfered with by clock drift or by special in-OS tweaks from motherboard manufacturers. This was a fundamental part of my overclocking roots: if I want to test a CPU, I want to make absolutely sure that the motherboard is not causing any issues. It really gets up my nose when, after a series of CPU tests, it turns out that the motherboard had an issue. Keeping HPET on was designed to stop any timing issues should they arise.

From our results over that time, if HPET was having any effect, it went unnoticed: our results were broadly similar to others, and each of the products fell in line with where they were expected. Over the several review cycles we had, a couple of issues cropped up that we couldn’t explain, such as our Skylake-X gaming numbers that were low, or the first batch of Ryzen gaming tests, where the data was thrown out for being obviously wrong. However, we never managed to narrow down the issue.

Enter our Ryzen 2000 series numbers in the review last week, and what had changed was the order of results. The way that forcing HPET affected results seemingly changed once we bundled in the Spectre and Meltdown patches, which also come with their own performance decrease on some systems. One set of results being pulled down further than expected set off some alarm bells and needed closer examination.

HPET, by the way it is invoked, is programmed by a memory-mapped IO window, via ACPI, into the circuit found on the chipset. Accessing it is very much an IO command, and one of the types of commands that fall under the realm of those affected by the Spectre and Meltdown patches. This would imply that any software that requires HPET access (or all timing software, if HPET is forced) would have its performance reduced even further when these patches are applied, compounding the issue.
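One rough way to see what a single timer query costs on a given system is to time a tight loop of them. The sketch below is only an illustration and not our benchmark methodology: `mean_timer_call_ns` is a hypothetical helper, and it relies on Python's `time.perf_counter_ns`, which on Windows is backed by QueryPerformanceCounter, so the figure should reflect whichever timer source the OS has been told to use.

```python
import time


def mean_timer_call_ns(samples: int = 200_000) -> float:
    """Return the average wall-clock cost of one timer query, in nanoseconds.

    The figure includes Python loop overhead, so it is only meaningful when
    comparing two runs on the same machine (for example, with the platform
    timer forced and un-forced in the OS).
    """
    read = time.perf_counter_ns  # local alias avoids repeated attribute lookups
    start = read()
    for _ in range(samples):
        read()  # the query being measured
    end = read()
    return (end - start) / samples


if __name__ == "__main__":
    cost = mean_timer_call_ns()
    print(f"about {cost:.0f} ns per timer query (loop overhead included)")
```

Running this once with HPET forced and once with the OS default should show whether each query becomes more expensive, without involving any game or benchmark suite.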

It Affects AMD and Intel Differently: Productivity

So far we have done some quick initial re-testing on the two key processors in this debate, the Ryzen 7 2700X and Intel Core i7-8700K. These are the two most talked about processors at this time, due to the fact that they are closely matched in performance and price, with each one having benefits in certain areas over the other. For our new tests, we have enabled the Spectre/Meltdown patches on both systems – HPET is ‘on’ in the BIOS, but left as ‘default’ in the operating system.

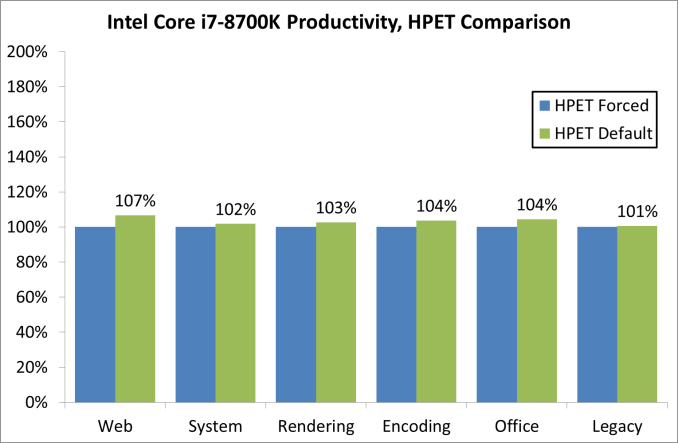

For our productivity tests, on the Intel system, there was an overall +3.3% gain when un-forcing HPET in the OS.

The biggest gains here were in the web tests, a couple of the renderers, WinRAR (memory bound), and PCMark 10. Everything else was pretty much identical. Our compile tests gave us three very odd consecutive numbers, so we are looking at those results separately.

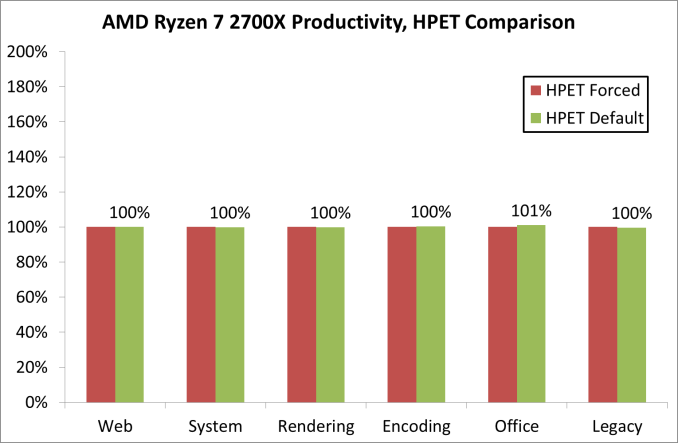

On the AMD system, the difference in the productivity tests was an overall +0.3% gain when un-forcing HPET in the OS.

This is a lower gain, with the biggest rise coming from PCMark 10’s video conference test to the tune of +16%. The compile test results were identical, and a lot of tests were within 1-2%.

It Affects AMD and Intel Differently: Gaming

The bigger changes happen with the gaming results, which is the reason why we embarked on this audit to decipher our initial results. Games rely on timers to ensure data and pacing and tick rates are all sufficient for frames to be delivered in the correct manner – the balance here is between waiting on timers to make sure everything is correct, or merely processing the data and hoping it comes out in more or less the right order: having too fine a control might cause performance delays. In fact, this is what we observe.

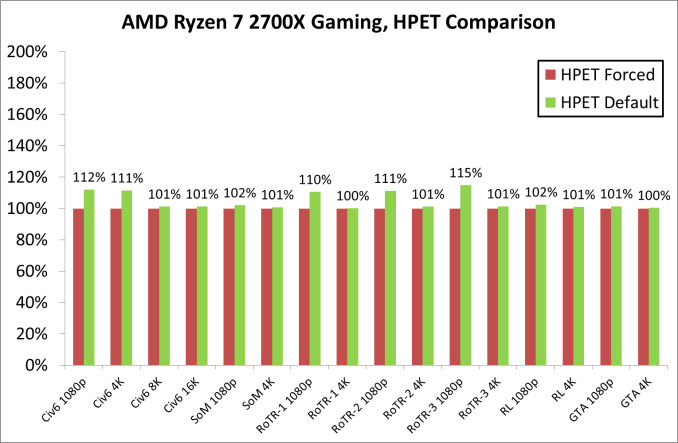

With our GTX 1080 and AMD’s Ryzen 7 2700X, we saw minor gains across the board, although it was clear that 1080p was the main beneficiary over 4K. Adjustments of 10% or more came only in Civilization 6 and Rise of the Tomb Raider.

Including the 99th percentile data, removing HPET gave an overall boost of around 4%, although most of the gains were limited to specific titles at the smaller resolutions, which would be important for any user relying on fast frame rates at lower resolutions.

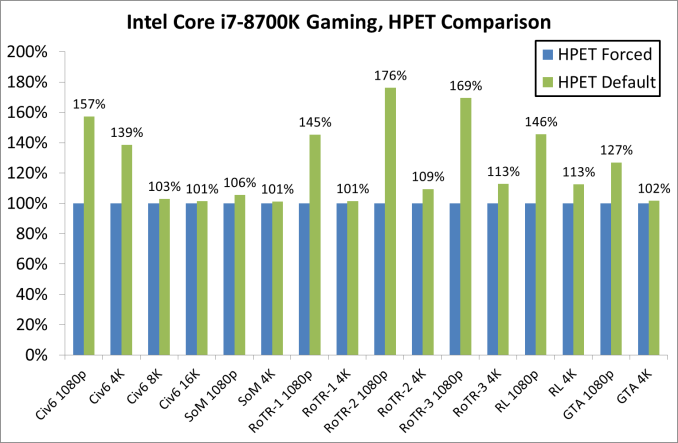

The Intel side of the equation is where it gets particularly messy. We rechecked these results several times, but the data was quite clear.

As with the AMD results, the biggest beneficiaries of disabling HPET were the 1080p tests. Civilization 6 and Rise of the Tomb Raider had substantial performance boosts (also in 4K testing), with Grand Theft Auto seeing an additional +27%. By comparison, Shadow of Mordor was ‘only’ +6%.

Given that the difference between the two sets of data is related to the timer, one could postulate that the more granular the timer, the greater the effect it can have: on both of our systems, the QPC timer is set for 3.61 MHz as a baseline, but the HPET frequencies are quite different. The AMD system has an HPET timer at 14.32 MHz (~4x), while the Intel system has an HPET timer at 24.00 MHz (~6.6x). It appears that the higher granularity of the Intel timer is causing substantially more pipeline delays. Moving from a tick-to-tick delay of 277 nanoseconds to 70 nanoseconds to 41.7 nanoseconds crosses the boundary from being slower than a CPU-to-DRAM access to almost encroaching on a CPU-to-L3 cache access, which could be one of the reasons for the results we are seeing, along with the nature of how the HPET timer works.
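The tick-to-tick delays quoted above follow directly from the timer frequencies, since the period in nanoseconds is 1000 divided by the frequency in MHz. The short sketch below reproduces that arithmetic; the frequencies are the ones quoted above, while the function and variable names are ours for illustration.

```python
# Timer frequencies in MHz, as quoted in the text.
TIMERS_MHZ = {
    "QPC baseline": 3.61,
    "AMD HPET": 14.32,
    "Intel HPET": 24.00,
}


def tick_period_ns(freq_mhz: float) -> float:
    """Convert a timer frequency in MHz to its tick period in nanoseconds."""
    return 1_000.0 / freq_mhz


def summarize(timers: dict[str, float]) -> dict[str, tuple[float, float]]:
    """Map each timer to (tick period in ns, frequency relative to baseline)."""
    base = timers["QPC baseline"]
    return {name: (tick_period_ns(f), f / base) for name, f in timers.items()}


if __name__ == "__main__":
    for name, (period, ratio) in summarize(TIMERS_MHZ).items():
        print(f"{name:13s} {period:6.1f} ns per tick, {ratio:.2f}x baseline")
```

The ratios come out just under 4x for the AMD system and roughly 6.6x for the Intel system, matching the approximate multipliers given above.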

There is also another aspect to gaming that does not appear with standard CPU tests: depending on how the engine is programmed, some game developers like to keep track of a lot of the functions in flight in order to either adjust features on the fly, or for internal metrics. For anyone that has worked extensively on a debug mode and had to churn through the output, it is basically this. If a title had shipped with a number of those internal metrics still running in the background, this is exactly the sort of issue that having HPET enabled could stumble upon - if there is a timing mismatch (based on the way HPET works) and delays are introduced due to these mismatches, it could easily slow down the system and reduce the frame rate.
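To illustrate why leftover instrumentation could matter, here is a deliberately simple model, assuming every timer query adds its full cost serially to the frame time. The function name and all of the input figures are hypothetical and chosen only to show the shape of the effect; they are not measurements from any title we tested.

```python
def fps_with_timer_overhead(
    base_fps: float, queries_per_frame: int, cost_per_query_ns: float
) -> float:
    """Estimate frame rate after adding serial timer-query overhead per frame.

    Assumes the queries are not overlapped with other work, which is a
    worst-case simplification.
    """
    base_frame_ns = 1e9 / base_fps
    frame_ns = base_frame_ns + queries_per_frame * cost_per_query_ns
    return 1e9 / frame_ns


if __name__ == "__main__":
    # Hypothetical inputs: a 100 FPS title making 2,000 timer queries per frame.
    for cost_ns in (50, 500, 1000):
        fps = fps_with_timer_overhead(100.0, 2_000, cost_ns)
        print(f"{cost_ns:5d} ns per query -> {fps:.1f} FPS")
```

Under these made-up inputs, a cheap query barely moves the frame rate, while a query costing around a microsecond takes a visible bite out of it, which is the kind of title-specific sensitivity described above.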

242 Comments


Dr. Swag - Wednesday, April 25, 2018 - link

It looks like you guys are re running all the benchmarks in the original review then, right? I see that the results look to be changed and less CPUs are on the lists (since you haven't rerun them all, I assume)

Ryan Smith - Wednesday, April 25, 2018 - link

Correct. We knew at the start of the Ryzen 2 review what benchmarks and what products we wanted to include; this timer issue hasn't changed that.

freaqiedude - Wednesday, April 25, 2018 - link

So would it be fair to say that Intel’s HPET implementation is potentially buggy? It seems to cause a disproportionate performance hit.

chrcoluk - Wednesday, April 25, 2018 - link

no its just that TSC + lapic is the way to go, There is a reason thats the default in windows and other modern OS's.

DanNeely - Wednesday, April 25, 2018 - link

It suggests that their implementation could probably be made less impactful than it currently is; but that high precision timers have had a performance impact has been known for a long time. In its guise as the multi-media timer in Windows over a decade ago the official MS docs recommended using lesser timing sources in lieu of it whenever possible because it would affect your system.

What's new to the general tech site reading public is that there are apparently significant differences in the size of the impact between different CPU families.

Tamz_msc - Wednesday, April 25, 2018 - link

But is there a 'real' performance impact or does default HPET behavior simply introduce a fudge factor that alters how the tools report the numbers? Is there a way to verify the results externally?

eddman - Wednesday, April 25, 2018 - link

I'm wondering about the same thing. Do the games' frame rate really change (they get smoother or vice versa) or the timer just messes up the numbers reported by benchmarks and the games' actual frame rate that reaches the display doesn't change?

rahvin - Wednesday, April 25, 2018 - link

I'd be more concerned that Intel has found a way to make the timer report false benchmarks that are higher than they actually are. I'd also be curious if the graphics card/cpu combination is potentially at fault.

Nvidia has been shown to cheat in the past on benchmarks by turning off features in certain games that are used for benchmarking to boost the score. Is Intel doing something similar?

Rob_T - Wednesday, April 25, 2018 - link

I came across a similar issue on VMware, where a virtual machine's clock would drift out of time synchronisation. The cause of this was that VMware uses a software based clock and when a host was under heavy CPU load the VM's clock wouldn't get enough CPU resource to keep it updated accurately. This resulted in time running 'slowly' on the virtual machine.

Under normal circumstances this kind of time drift issue would be handled by the Network Time Protocol daemon slewing the time back to accuracy; the problem is the maximum slew rate possible is limited to 500 parts-per-million (PPM). Under peak loads we were observing the VM's clock running slow by anywhere up to a third. This far outweighed the ability of the NTP slew mechanism to bring the time back to accuracy.

If this issue has the same root cause, the software based timers would start to run slowly when the system is under heavy load. Therefore more work could be completed in a 'second' due to its increased duration. It would be interesting to know if the highest discrepancies were also the ones with the largest CPU loads. Looking at the gaming graphs on page 4 the biggest differences are at 1080p, which suggests this might be the case.

oleyska - Wednesday, April 25, 2018 - link

You also had an idle issue with Windows servers where time would drift. The high load issue I've never heard of in our company, we have thousands upon thousands of VMs using VMware though.