HiSilicon Kirin 970 - Android SoC Power & Performance Overview

by Andrei Frumusanu on January 22, 2018 9:15 AM EST

NPU Performance Tested

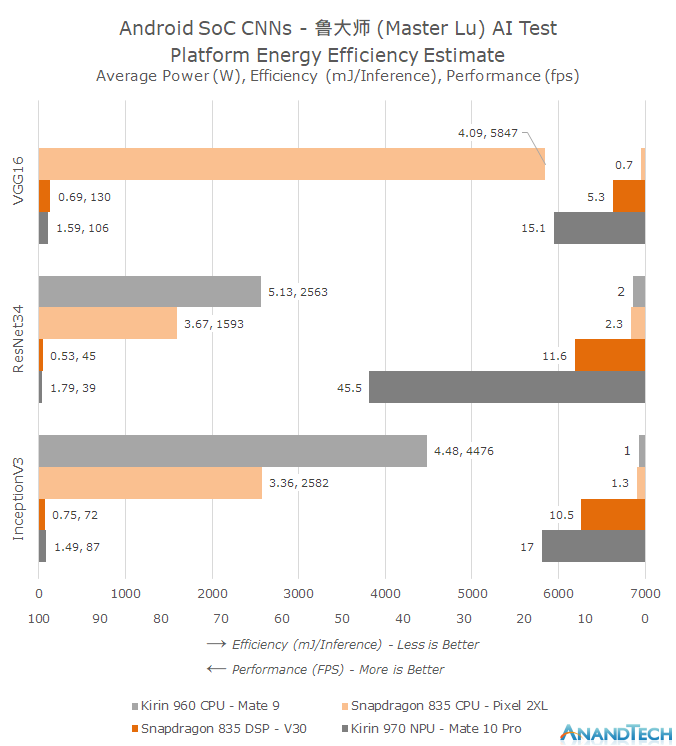

To test the performance of the NPU we need a benchmark that targets the various vendor APIs. Unfortunately, at this stage, short of developing our own implementation the choices are scarce, but luckily there is one: popular Chinese benchmark suite Master Lu recently introduced an AI benchmark implementing both HiSilicon’s HiAI and Qualcomm’s SNPE frameworks. The benchmark implements three different neural network models: VGG16, InceptionV3 and ResNet34. The input dataset consists of 100 images which are a subset of the ImageNet reference database. As a fall-back the app implements the TensorFlow inferencing library to run on the CPU. I ran the performance figures on the Mate 10 Pro, the Mate 9, and two Snapdragon 835 devices (Pixel 2 XL & V30), the latter two running on the CPU and the Hexagon DSP respectively.

Similarly to the SPEC2006 results I chose to use a more complex graph to better showcase the three dimensions of average power (W), efficiency (mJ/inference) as well as absolute performance (fps / inferences per second).
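The three dimensions are tied together by simple arithmetic: energy per inference is average power divided by throughput. The sketch below is only an illustration of that relationship. The function name and the input values are hypothetical placeholders, not figures measured for this article.

```python
def energy_per_inference_mj(avg_power_w: float, inferences_per_s: float) -> float:
    """Energy per inference in millijoules: (J/s) / (inferences/s) * 1000."""
    if inferences_per_s <= 0:
        raise ValueError("inferences_per_s must be positive")
    return avg_power_w / inferences_per_s * 1000.0


# Hypothetical placeholder values, NOT measurements from the article.
cpu_mj = energy_per_inference_mj(avg_power_w=4.0, inferences_per_s=2.0)
accel_mj = energy_per_inference_mj(avg_power_w=1.0, inferences_per_s=20.0)

# Efficiency gain is the ratio of the two energy-per-inference figures.
efficiency_gain = cpu_mj / accel_mj

print(f"CPU: {cpu_mj:.0f} mJ/inference, accelerator: {accel_mj:.0f} mJ/inference")
print(f"Efficiency gain: {efficiency_gain:.0f}x")
```

Note that an accelerator can come out ahead on efficiency either by drawing less power, by finishing more inferences per second, or both, which is why the graph shows all three dimensions together.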

The first thing we notice from the graph is an order-of-magnitude difference in performance between the NPU and CPU implementations. Running the networks as they are on the CPUs we’re not able to exceed 1-2fps, and we do so at very heavy CPU power consumption. Both the Snapdragon 835 and the Kirin 960 CPUs struggle with the workloads, with average power exceeding sustainable levels.

Qualcomm’s Hexagon DSP is able to improve on the CPU performance by a factor of 5-8x. But Huawei’s NPU performance figures are again several factors above that, showcasing up to a 4x lead in ResNet34. The reason the performance ratios differ between the models is their design. Convolutional layers are heavily parallelisable whilst the pooling and fully connected layers of the models must use more serial processing steps. ResNet in particular makes use of a larger percentage of convolution processing for a single inference and thus is able to achieve a higher utilization rate of the Kirin NPU.
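One way to reason about why the share of convolution work matters is an Amdahl's-law style estimate, sketched below. This is my own simplification and not how the benchmark or the NPU actually schedules work: it assumes only the convolutional fraction of an inference is sped up by the accelerator while the rest runs at the original speed. The fractions and the speedup factor are hypothetical, not measured properties of VGG16, InceptionV3 or ResNet34.

```python
def overall_speedup(accelerated_fraction: float, accelerator_speedup: float) -> float:
    """Amdahl's law: total speedup when only part of the work is accelerated."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if accelerator_speedup <= 0:
        raise ValueError("accelerator_speedup must be positive")
    serial_part = 1.0 - accelerated_fraction
    parallel_part = accelerated_fraction / accelerator_speedup
    return 1.0 / (serial_part + parallel_part)


# Hypothetical convolution shares and accelerator speedup, for illustration only.
for conv_share in (0.90, 0.99):
    gain = overall_speedup(conv_share, accelerator_speedup=50.0)
    print(f"convolution share {conv_share:.0%}: overall speedup {gain:.1f}x")
```

Under these assumptions even a small increase in the convolution share makes a large difference to the end result, which is consistent with a convolution-heavy network getting more out of the same hardware.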

In terms of power efficiency we’re very near to Huawei’s claims of up to a 50x improvement. This is the key characteristic that will enable CNNs to be used in real-world use-cases. I was quite surprised to see Qualcomm’s DSP reach similar efficiency levels as Huawei’s NPU – albeit at 1/3rd to 1/4th of the performance. This should bode quite well in terms of the Snapdragon 845’s Hexagon 685 which promises up to a 3x increase in performance.

I wanted to take the opportunity to make a rant about Google’s Pixel 2: I was only able to run the benchmark on the Snapdragon 835’s CPU because the Pixel 2 devices lacked support for the SNPE framework. This was in a sense maybe both expected as well as unexpected. With the introduction of the NN API in Android 8.1, which the Pixel 2 phones support with acceleration through the dedicated Pixel Visual Core SoC, it’s natural that Google would want to push usage of Android’s standard APIs. But on the other hand this is also a limitation on the capabilities of the phone by the OEM vendor, which I can’t help but compare to the decision by Google to omit OpenCL in Android by default. This is a decision which in my eyes has heavily stifled the ecosystem and is why we don’t see more GPU accelerated compute workloads, out of which CNNs could have been one.

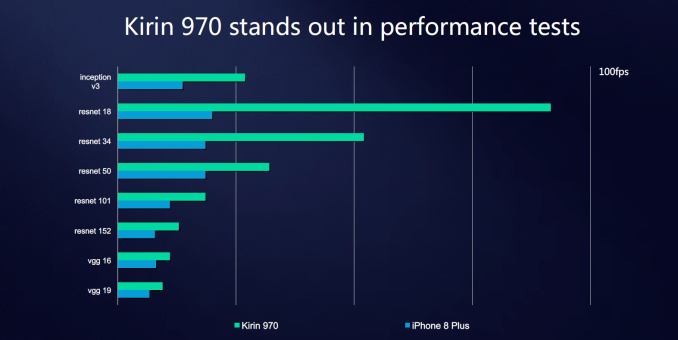

While we can’t run the Master Lu AI test on an iPhone, HiSilicon did publish some slides with internally reported numbers we can try to correlate. Based on the models included in the slide, the Apple A11 neural network IP’s performance should land somewhere slightly ahead of the Snapdragon 835’s DSP but still far behind the Kirin NPU. Again, we can’t independently verify these figures due to the lack of a fitting iOS benchmark we can run ourselves.

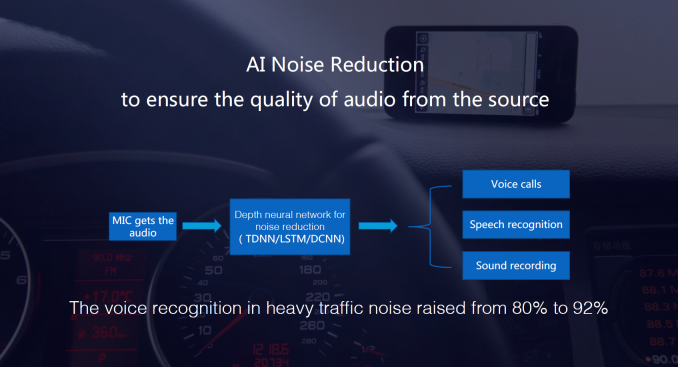

Of course the important question is, what is this all good for? HiSilicon discloses that one use-case is noise reduction via CNN processing, which it says increases the voice recognition rate in heavy traffic from 80% to 92%.

The other most publicised use-case is the implementation in the camera app. The Mate 10’s camera makes use of the NPU to run inferencing to recognize different scenarios and optimize the camera settings based on pre-sets for those scenarios. The Mate 10 comes with a translation app which was developed with Microsoft, which is able to use the NPU for accelerated offline translation, and this was definitely the single most impressive usage for me. Inside the built-in gallery application we also see the use of image classification to create a new section where pictures are organized by content type. Scenarios where the SoC is doing live inferencing on a media stream, such as the camera feed, are also where HiSilicon has an advantage over Qualcomm, as it employs both a DSP and the NPU whereas Snapdragon SoCs have to share the DSP resources between vision processing and neural network inferencing workloads.

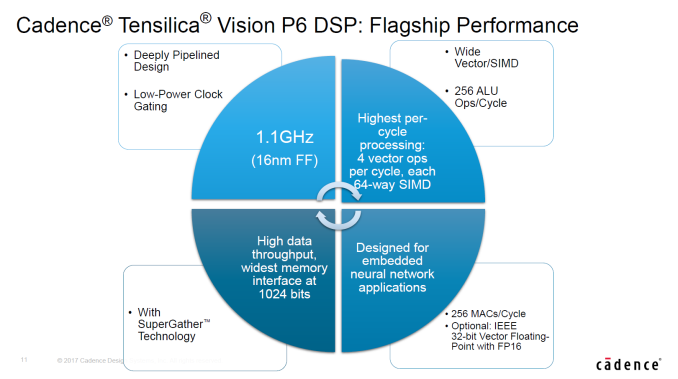

Oddly enough the Kirin 970 has sort of double the silicon IP capable of running neural networks efficiently, as its vision pipeline also includes a Cadence Tensilica Vision P6 DSP which should be in the same performance class as Qualcomm’s Hexagon 680 DSP, but which is currently not exposed to user applications.

While the Mate 10 does make some use of the NPU it’s hard to argue that it’s a definitive differentiating factor for the end-user. Currently neural network usage in mobile doesn’t seem to have the same killer-applications that it has in the automotive and security camera sectors. Again this is due to the ecosystem being in its early days and the Mate 10 being among the first devices to actually offer such a dedicated acceleration block. It’s arguable whether it was worth it for the Kirin 970 to have implemented such a piece, and Huawei is very open about the fact that it’s reaching out to developers to try and find more use-cases for the silicon. At the least, Huawei should be lauded for innovating with something new.

Huawei/Microsoft's translation app seemed to be the most distinguished experience on the Mate 10, so maybe there are more non-image based use-cases that can be explored in the future. Currently the app allows the traditional snapshot of a foreign language text and then shows a translated overlay, but imagine a future implementation where it’s able to do it live from the camera feed and allow for an AR experience. MediaTek at CES also showed a distinguishing use-case for CNNs: for video conferencing the video encoder is fed metadata on scene composition by a CNN layer doing image recognition and telling the encoder to use finer-grained block sizes where a user’s face would be, thus increasing video quality. It’s more likely that neural network use-cases will slowly creep up with time rather than there being a single revolutionary application: as more devices incorporate such IPs and they become more widespread, developers will be more enticed to find uses for them.

116 Comments

zorxd - Monday, January 22, 2018 - link

Samsung isn't vertically integrated? They also have their own SoC and even fabs (which Huawei and Apple don't)

Myrandex - Monday, January 22, 2018 - link

Agreed, but they don't even always use their own components like Huawei and Apple do, I think I've yet to own a Samsung Smartphone that uses a Samsung SoC. I think they will eventually get there though although I can't say I have any idea why they aren't doing it today.

jospoortvliet - Saturday, January 27, 2018 - link

Samsung always uses their own SOCs unless they are legally forced not to, like in the US...

levizx - Saturday, January 27, 2018 - link

They are DEFINITELY NOT legally forced to use Qualcomm chips in China, especially for the world's biggest carrier China Mobile. As for the US, if Huawei is not forced to use Qualcomm, I can't imagine why Samsung would unless they signed a deal with Qualcomm - then again that's just by choice.

Andrei Frumusanu - Monday, January 22, 2018 - link

Samsung's mobile division (which makes the phones) still makes key use of Snapdragon SoCs for certain markets. Whatever the reason for this and we can argue a lot about it, fact is that the end product more often than not ends up being as the lowest common denominator in terms of features and performance between the two SoC's capabilities. In that sense, Samsung is not vertically integrated and does not control the full stack in the same way Apple and Huawei do.

Someguyperson - Monday, January 22, 2018 - link

No, Samsung simply isn't so vain as to use its own solutions when they are inferior. Samsung skipped the Snapdragon 810 because their chip was much better. Samsung used the 835 instead of their chip last year because the 835 performed nearly exactly the same as the Samsung chip, but was smaller, so they could get more chips out of an early 10 nm process. Huawei chooses their chips so they don't look stupid by making an inferior chip that costs more compared to the competition.

Samus - Monday, January 22, 2018 - link

Someguyperson, that isn't the case at all. Samsung simply doesn't use Exynos in various markets for legal reasons. Qualcomm, for example, wouldn't license Exynos for mobile phones as early as the Galaxy S III, which is why a (surprise) Qualcomm SoC was used instead. Samsung licenses Qualcomm's modem IP, much like virtually every SoC designer, for use in their Exynos. The only other option has historically been Intel, who until recently, made inferior LTE modems.

I think it's pretty obvious to anybody that if Samsung could, they would, sell their SoC's in all their devices. They might even sell them to competitors, but again, Qualcomm won't let them do that.

lilmoe - Monday, January 22, 2018 - link

Since their Shannon modem integration in the Exynos platform, I struggled to understand why...

My best guess would be a bulk deal they made with Qualcomm in order for them to build Snapdragons on both their 14nm and 10nm. Samsung offered a fab deal, Qualcomm agreed to build using Samsung fabs and provide a generous discount in Snapdragon resale for Galaxies, but in the condition to buy a big minimum amount of SoCs. That minimum quantity was more than what was needed for the US market. Samsung did the math, and figured that it was more profitable to keep their fabs ramped up, and save money on LTE volume licensing. So Samsung made a bigger order and included Chinese variants in the bulk.

I believe this is all a bean counter decision, not technical or legal.

KarlKastor - Thursday, January 25, 2018 - link

That's easy to answer. Samus is right, it's a legal problem. The reason is named CDMA2000.

Qualcomm owns all IP concerning CDMA2000.

Look at the regions where a Galaxy S is shipped with a Snapdragon and look at the countries using CDMA2000. That's North America, China and Japan.

Samsung has two choices: Using a Snapdragon SoC with integrated QC Modem or plant a dedicated QC Modem alongside their own SoC.

The latter is a bad choice concerning space and i think it's more expensive to buy an extra chip instead of just using a Snapdragon.

I bet all this will end when Verizon quits CDMA2000 in late 2019 and Samsung will use their Exynos SoCs only. CDMA2000 is useless since LTE and is just maintained for compatibility reasons.

In all regions not using this crappy network, Samsung uses Exynos SoCs in every phone from low cost to high end.

So of course Samsung IS vertically integrated. Telling something else is pretty ridiculous.

They have their own fabs, produce and develop their own SoC, modem, DRAM and NAND Flash and have their own CPU and modem IP. They only lack their own GPU IP.

So who is more vertically integrated?

KarlKastor - Thursday, January 25, 2018 - link

I forgot their own displays and cameras. Especially the first is very important. The fact, that they make their own displays enabled more options in design.

Think of their Edge-Displays, you may like them or not, but with them the whole design differed much from their competitors.