Examining Soft Machines' Architecture: An Element of VISC to Improving IPC

by Ian Cutress on February 12, 2016 8:00 AM EST- Posted in

- CPUs

- Arm

- x86

- Architecture

- Soft Machines

- IPC

But 16FF+ Silicon Exists

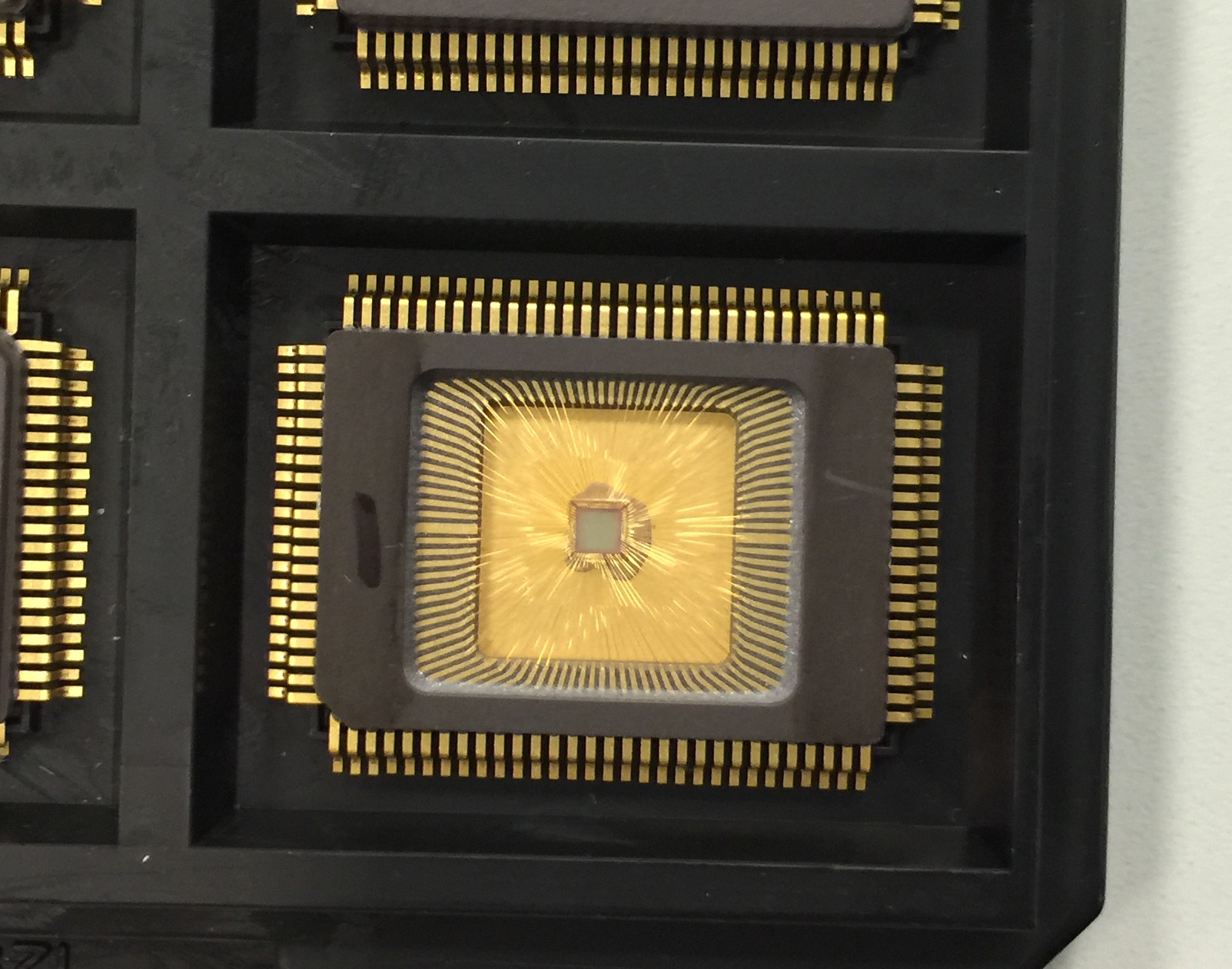

One of the salient points of our talk with Soft Machines was the fact that silicon talks louder than simulations. Their CTO was very honest and said this before I even had the chance to. The 28nm design was shown in 2014 and data was provided, but no 16FF+ design had since been made public. Soft Machines were happy enough to share with us that they do have the core design for 16nm at HQ being examined:

16nm Silicon of a Shasta design

This is literally a test chip of cores rather than a full SoC, and they are currently running the correlation data between simulation and silicon. We were told that the design errors that the 28nm silicon had, such as cache flushing properly, were fixed. The new silicon also includes power plane management, although customers are welcome to use their own power plane adjustments.

The goal, according to Soft Machines' numbers, is to provide a Shasta core on an optimized 16nm FF+ process at 2GHz at around 2W. Their goal includes scaling the design from SoC to server, meaning that there is the goal to reach a range of 0.5W per core up to 5W per core. Because there’s only one 16FF+ part-SoC early run currently at their headquarters it remains to be seen if that is possible, and requires a partner or investor to get their hands dirty with the technology first.

Before someone jumps up and says "is platform XYZ going to use VISC?", it should be fairly obvious from most public roadmaps covering the next 1-2 years that major platforms will not be using VISC. What we see on public roadmaps is a mix of ARM and x86, and the fact that VISC is a different ISA under the hood (which can run native VISC code without translation) means that there has to be an ecosystem change. Soft Machines, with their announcement last week, is at this time principally fishing for clients, investors, and potentially something more.

The big thing about why this design has got a lot of attention in the media and between analysts is because of the potential. Being able to have many light-weight cores that can share resources between threads would be a major milestone in semiconductor design and the next point in the CISC/RISC lineage. It epitomizes the idea of having all the hardware working on a task no matter what it is, such that you can have many slower power efficient cores working on a single task or one inefficient high power but fast core. If you can spare the die area and have a good ISA translation layer, this opens up some of the power budget in a power limited device. A lot of discussion on laptops or smartphones is all about the power, although Soft Machines believes this can impact servers just as easily.

Arguably one could state that future processors will have to do something like VISC in order to get better IPC – when a thread needs a large wide core, then a VISC design can be one when needed. Technically we already have semiconductor designs that work very well on prepared data – vector calculations and graphics are handled by lots of small, simple cores in their thousands. But these only work with consistent data and when the same calculation on all the data points is needed; with a VISC design, the code can be complex with dependencies and the virtual cores will shrink/expand as needed. A lot of questions surrounding the translation layer are to be expected, and if it can be as water-tight as possible when other ISAs are passed through (ARM to VISC, x86 to VISC) and also take advantage of compiler benefits as to SMI’s claims.

As it stands the design promises a lot, but because we really need to see the proper silicon implementation, it might be hard to visualize until a company in the technology ecosystem decides to make that step. It would be an interesting differentiation point for sure, but it requires investment to reach utility in mass production. That makes a number of analysts wary and conservative with good reasons, especially with the assumptions made on that data graph.

Soft Machines has invited us to their offices next time I’m in the Bay Area, which I will probably take them up on.

Sources:

Soft Machines

Microprocessor Report

2014 Linley Conference Video

2015 Linley Conference Video

97 Comments

View All Comments

tipoo - Friday, February 12, 2016 - link

Pretty interesting stuff. As they open up a bit more and provide more data, I'm cautiously letting myself believe there's a possibility of this taking off, but I'm not budging my optimism meter past 1 until this ships and is positively reviewed.I wonder if it's more likely they'll be bought out first. If Intel sees a credible competitor, that's certainly possible.

willis936 - Friday, February 12, 2016 - link

The prospect of actual single threaded performance increases is exactly what the future of computing needs. I'm not as concerned with the existence of the technology as I am the adoption. Competing with intel is more than just making a good processor. This company will have to convince other companies to integrate a Lot of the controllers and interfaces that intel does for them.easp - Friday, February 12, 2016 - link

If they can actually deliver real single-threaded performance increases, the world will beat a path to their door. On-chip peripherals and off-chip interfaces are cookie-cutter in comparison.Xenonite - Wednesday, February 17, 2016 - link

"If they can actually deliver real single-threaded performance increases, the world will beat a path to their door."Sadly, this is not how the semiconductor industry works. AMD could, for instance, DOUBLE their single threaded integer performance by simply tweaking their ZEN design to utilize 4x~5x the current planned TDP of 95W, using a larger die to spread the increased current load over multiple transistors, double their L1 and L2 cache sizes and to add a low-latency Last Level Cache.

If done before tape-out, AMD can work with the foundry to optimize the transistors' characteristics and operating points, which would easily allow for a doubling in single-threaded throughput.

Even if the raw clock-rate couldn't simply be doubled, they could use the additional power budget to run MUCH more aggressive speculative execution, and to widen their superscalar pipeline to be at least as wide the average instruction pipeline length is long.

Since IPC does not need to be tied to instruction latency, each core could easily complete around 5~6 instructions per clock by having, say, 10~12 fully functional superscalar pipelines (each pipeline can complete any instruction indapendently, without having to rely on shared logic blocks) .

Xenonite - Wednesday, February 17, 2016 - link

Sorry, I submitted the post before I was finished. Basically, it boils down to the fact that no one (other than myself XD) would be willing to pay for such a processor. Even if AMD managed to totally thrash Intel in absolute performance, no one will care. And with no mass consumer support, their shareholders would never approve such a project in the first place.The main reason why VISC is doomed to fail, is quite similar: you simply can not attract investors with raw performance in 2016.

Even if they actually had a ~5x single-threaded performance lead over Intel's fastest consumer desktop chips, they STILL wouldn't get the billions of dollars that they need to do a mass market rollout of their arch.

The whole situation is making me really morbid and depressed; what I wouldn't give to go back to the Pentium 3 days.

Demiurge - Friday, February 12, 2016 - link

16-wide ILP isn't going to be a mass-market solution... most designs are barely using 1.5 instructions per cycle, let alone 4. Given the stellar shift to CPU and then GPU based vector processing... I might be missing something here, but I would say that there are already 16-wide ILP on certain specialized operation that actually benefit, such as video processing for example.Incidentally, does anyone remember Transmeta Code-Morphing Software? If not, look it up...

sonicmerlin - Saturday, February 13, 2016 - link

You didn't even read the fracking article.name99 - Saturday, February 13, 2016 - link

What he's saying (perfectly legitimately) is that- there is a LONG history of companies praising to the skies superficially good ideas which actually turned out not to matter much

- VISC's unwillingness to provide SPECInt numbers, even after being so strongly excoriated about this by the entire tech press and academic world, STRONGLY suggests that what they're peddling does not work the way they claim. It likely provides a great speedup for much FP code (speedup which you can also get by using a GPU, the preferred path of traditional companies), and very little speedup for standard integer code.

The speed at which they claim they can execute also makes one wonder. Even Apple (likely right now the best funded CPU design-house out there, with the simplest target in their sights, working on more or less traditional designs) aims for a major core every two years, with a minor upgrade in between. These guys, with vastly fewer engineers and money, and trying to do something more innovative, believe they can spin a major upgrade every year...

That seems extremely unlikely, so the only real question is: they bullsh*tting only the press/their investors, or are they also bullsh&tting themselves?

Samus - Monday, February 15, 2016 - link

The parallels with Transmetta ring a bell with me, too, and yes, I did read the article. I'm inclined to have a immature capitalist response to things like this, specifically: if a company as big as Intel, with some of the best engineers in the world who are often open to radical ideas, haven't bothered trying an instruction decoder, it is likely because the pros did not outweigh the cons. After all, Jackson technology (hyper threading) is some form of what's going on here, just not targeting specific requests.Azethoth - Wednesday, February 17, 2016 - link

Agreed, and reading the list of unanswered questions it sounds a lot like they are trying to look good in very specific circumstances, rather than being naturally best in class. The competition is GPU + CPU cores. Unless you prove superiority despite all the tricks the competition has available you cannot succeed. What they propose sounds like it needs to break down the normal inter core separation that lets them operate independently so that they can realize single threaded speedup. I am not an EE, so I assume it is at least possible. I am not sure it can be done practically though.