NVIDIA Tegra X1 Preview & Architecture Analysis

by Joshua Ho & Ryan Smith on January 5, 2015 1:00 AM EST

Posted in: SoCs, Arm, Project Denver, Mobile, 20nm, GPUs, Tablets, NVIDIA, Cortex A57, Tegra X1

GPU Performance Benchmarks

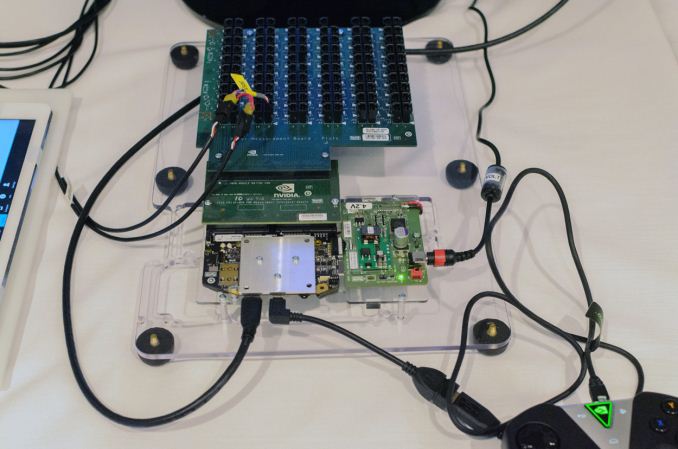

As part of today’s announcement of the Tegra X1, NVIDIA also gave us a short opportunity to benchmark the X1 reference platform under controlled circumstances. In this case NVIDIA had several reference platforms plugged in and running, pre-loaded with various benchmark applications. The reference platforms themselves had a simple heatspreader mounted on them, intended to replicate the ~5W heat dissipation capabilities of a tablet.

The purpose of this demonstration was two-fold: first, to show that X1 was up and running and capable of NVIDIA’s promised features, and second, to showcase the strong GPU performance of the platform. NVIDIA also had an iPad Air 2 on hand for power testing, running Apple’s latest and greatest SoC, the A8X. NVIDIA has made it clear that they consider Apple the SoC manufacturer to beat right now, as A8X’s PowerVR GX6850 GPU is the fastest among the currently shipping SoCs.

It goes without saying that the results should be taken with an appropriate grain of salt until we can get Tegra X1 back to our labs. However we have seen all of the testing first-hand and as best as we can tell NVIDIA’s tests were sincere.

NVIDIA Tegra X1 Controlled Benchmarks

| Benchmark | A8X (AT) | K1 (AT) | X1 (NV) |
|---|---|---|---|
| BaseMark X 1.1 Dunes (Offscreen) | 40.2fps | 36.3fps | 56.9fps |
| 3DMark 1.2 Unlimited (Graphics Score) | 31781 | 36688 | 58448 |
| GFXBench 3.0 Manhattan 1080p (Offscreen) | 32.6fps | 31.7fps | 63.6fps |

For benchmarking, NVIDIA had BaseMark X 1.1, 3DMark Unlimited 1.2 and GFXBench 3.0 up and running. Our X1 numbers come from the benchmarks we ran as part of NVIDIA’s controlled test, while the A8X and K1 numbers come from our Mobile Bench.

NVIDIA’s stated goal with X1 is to (roughly) double K1’s GPU performance, and while these controlled benchmarks for the most part don’t make it quite that far, X1 is still a significant improvement over K1. NVIDIA does meet their goal under Manhattan, where performance is almost exactly doubled. Meanwhile 3DMark and BaseMark X increased by 59% and 56% respectively.
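The gains quoted above can be reproduced from the table. The following is a minimal Python sketch using the rounded K1 and X1 scores as published; the names `results`, `percent_gain` and `gains` are illustrative only. Because the table values are rounded, a result may differ from the quoted percentage by about a point.

```python
# K1 and X1 scores copied from the controlled-benchmark table: (K1, X1).
results = {
    "BaseMark X 1.1 Dunes": (36.3, 56.9),        # fps
    "3DMark 1.2 Unlimited": (36688, 58448),      # graphics score
    "GFXBench 3.0 Manhattan": (31.7, 63.6),      # fps
}


def percent_gain(k1, x1):
    """Return X1's improvement over K1 as a percentage."""
    return (x1 / k1 - 1.0) * 100.0


# Compute the gain for each benchmark and print it with one decimal place.
gains = {name: percent_gain(k1, x1) for name, (k1, x1) in results.items()}
for name, gain in gains.items():
    print(f"{name}: +{gain:.1f}%")
```

A gain of 100% corresponds to the doubling NVIDIA is targeting, which only Manhattan reaches in this set.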

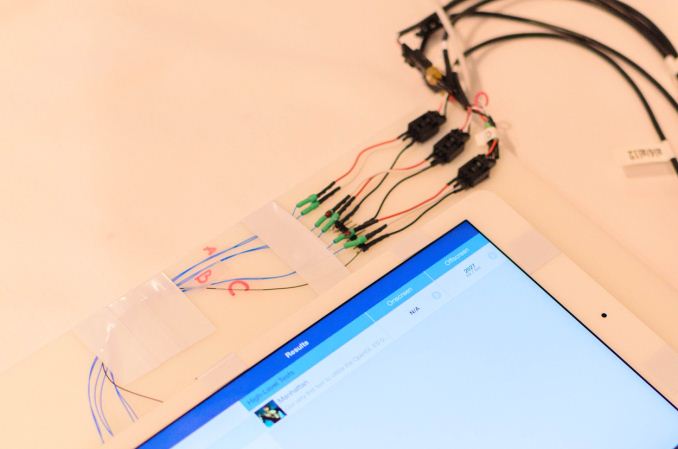

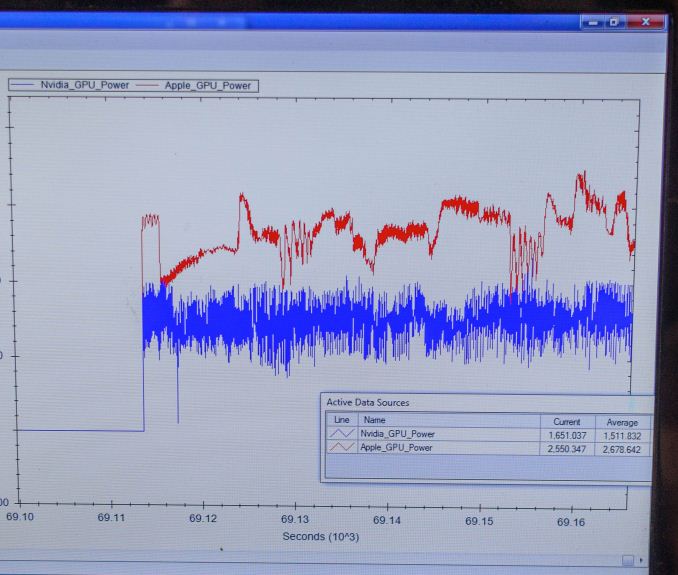

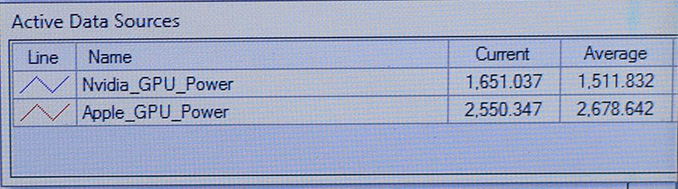

Finally, for power testing NVIDIA had an X1 reference platform and an iPad Air 2 rigged to measure the power consumption from the devices’ respective GPU power rails. The purpose of this test was to showcase that, thanks to X1’s energy optimizations, X1 is capable of delivering the same GPU performance as the A8X GPU while drawing significantly less power; in other words, that X1’s GPU is more efficient than A8X’s GX6850. To be clear, these are just GPU power measurements and not total platform power measurements, so this won’t account for CPU differences (e.g. A57 versus Enhanced Cyclone) or the power impact of LPDDR4.

Top: Tegra X1 Reference Platform. Bottom: iPad Air 2

For power testing, NVIDIA ran Manhattan 1080p (offscreen) with X1’s GPU underclocked to match the performance of the A8X at roughly 33fps. Pictured below is the average power consumption (in watts) for the X1 and A8X respectively.

NVIDIA’s tools show the X1’s GPU averages 1.51W over the run of Manhattan. Meanwhile the A8X’s GPU averages 2.67W, over a watt more for otherwise equal performance. This test is especially notable since both SoCs are manufactured on the same TSMC 20nm SoC process, which means that the difference in power consumption between the two GPUs comes down to the efficiency of their designs rather than to a manufacturing advantage.
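To put the two power figures on a common scale, here is a rough Python sketch of the performance-per-watt arithmetic. It assumes both GPUs are held at exactly 33fps (the article only says "roughly 33fps"), and all names are illustrative.

```python
# Assumption: both GPUs run Manhattan 1080p offscreen at the same frame rate.
FPS = 33.0

# Average GPU rail power over the run, as reported by NVIDIA's tools.
X1_WATTS = 1.51
A8X_WATTS = 2.67


def fps_per_watt(fps, watts):
    """Frames per second delivered for each watt of GPU power."""
    return fps / watts


x1_eff = fps_per_watt(FPS, X1_WATTS)
a8x_eff = fps_per_watt(FPS, A8X_WATTS)

power_saved = A8X_WATTS - X1_WATTS        # absolute saving in watts
fraction_saved = power_saved / A8X_WATTS  # saving relative to the A8X
efficiency_ratio = x1_eff / a8x_eff       # X1 fps/W relative to A8X fps/W

print(f"X1:  {x1_eff:.1f} fps/W")
print(f"A8X: {a8x_eff:.1f} fps/W")
print(f"X1 draws {power_saved:.2f}W less ({fraction_saved:.0%} lower)")
print(f"X1 is {efficiency_ratio:.2f}x as efficient at this operating point")
```

Since the frame rate is the same on both sides, the efficiency ratio reduces to 2.67 / 1.51 and does not depend on the assumed 33fps. It describes only this underclocked operating point, not either GPU at full clocks.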

There are a number of other variables we’ll ultimately need to take into account here, including clockspeeds, relative die area of the GPU, and total platform power consumption. But assuming NVIDIA’s numbers hold up in final devices, X1’s GPU is looking very good out of the gate – at least when tuned for power over performance.

194 Comments


harrybadass - Monday, January 5, 2015 - link

Nvidia X1 is somehow already obsolete when compared to A8x.

GXA6850

Clusters 8

FP32 ALUs 256

FP32 FLOPs/Clock 512

FP16 FLOPs/Clock 1024

Pixels/Clock (ROPs) 16

Texels/Clock 16

psychobriggsy - Monday, January 5, 2015 - link

NVIDIA are claiming power savings compared to the A8X, at the same performance level. And additionally, they can run the X1 GPU at ~1GHz to achieve greater performance than the A8X. However the A8X's lower GPU clock is just a design decision by Apple so they can guarantee battery life isn't sucky when playing games.

But yet, hardware-wise the X1's GPU specification isn't that amazing when compared to the A8X's GPU.

Last up, how does a quad-A57 at 2+ GHz compare to a dual 1.5GHz Cyclone...

techconc - Monday, January 5, 2015 - link

Isn't it always amazing how company A's future products compete so well against company B's current products? The X1 won't be competing with the A8X, it will be competing against the A9X. If you're familiar with the PowerVR Rogue 7 series GPUs, you wouldn't be terribly impressed with this recent nVidia announcement. It keeps them in the game as a competitor, but they will not be on top. Further, I'm quite certain that Apple's custom A9 chip will compare well to the off the shelf reference designs in the A57 in terms of performance, efficiency or both. If there were no benefits to Apple's custom design, they would simply use the reference designs as nVidia has chosen to do.

Yojimbo - Monday, January 5, 2015 - link

Yes but how do you compare your product to something that isn't out yet? You can't test it against rumors. It must be compared with the best of what is out there and then one must judge if the margin of improvement over the existing product is impressive or not. The PowerVR Rogue 7 series is due to be in products when? I doubt it will be any time in 2015 (maybe I'm wrong). When I read the Anandtech article on the details of IMG's upcoming architecture a few months back I had a feeling they were trying to set themselves up as a takeover target. I don't remember exactly why but it just struck me that way. I wonder if anyone would want to risk taking them over while this NVIDIA patent suit is going on, however.

OreoCookie - Tuesday, January 6, 2015 - link

The Tegra X1 isn't out yet either! If you look at Apple's product cycle it's clear that in the summer Apple will release an A9 when they launch the new iPhone. And you can look at Apple's history to estimate the increase in CPU and GPU horsepower.

Yojimbo - Tuesday, January 6, 2015 - link

But NVIDIA HAS the Tegra X1. They are the ones making the comparisons and the Tegra X1 is the product which they are comparing! Apple seems to be releasing their phones in the fall recently, but neither NVIDIA nor the rest of the world outside Apple and their partners has any idea what the A9 is like and so it can't be used for a comparison! It's the same for everyone. When Qualcomm announced the Snapdragon 810 in April of 2014 they couldn't have compared it to the Tegra X1, even though that's what it will end up competing with for much of its life cycle.

Yojimbo - Monday, January 5, 2015 - link

Perhaps those are the raw max-throughput numbers, but if it were that simple there would be no reason for benchmarks. Now let's see how they actually perform.

edzieba - Monday, January 5, 2015 - link

12 cameras at 720p120?! VERY interested in DRIVE PX, even if it'd never end up near a car.

ihakh - Monday, January 5, 2015 - link

about the intel chip I have to say that it is a very good CPU (think about sse and avx) + a little GPU

but nvidia chip is a good GPU+ reasonable CPU

you can have windows x86 on intel chip and run something like MATLAB (also android)

and you can have a good gaming experience with nvidia's

each of them has its use for certain users

its not like that every program can use 1TFLOPS of tegra GPU

and its not like every user is "game crazy"

intel core M have its own users

and of course tegra chip is very hot for mobiles and it is a hard decision for engineers who design mobiles and tablet to migrate from a known chip like snapdragon to an unknown and new chip like tegra

I think both nvidia and intel are doing good and nor deserve blaming

but it is a good idea for nvidia to make a cooler chip for mobiles

Morawka - Monday, January 5, 2015 - link

So compared to the K1 it's twice as fast, And it also uses Twice as less energy.

So does that mean it will still be a 7w SOC? albeit twice as fast.