Intel Unveils Lunar Lake Architecture: New P and E cores, Xe2-LPG Graphics, New NPU 4 Brings More AI Performance

by Gavin Bonshor on June 3, 2024 11:00 PM EST

New NPU: Intel NPU 4, Up to 48 Peak TOPS

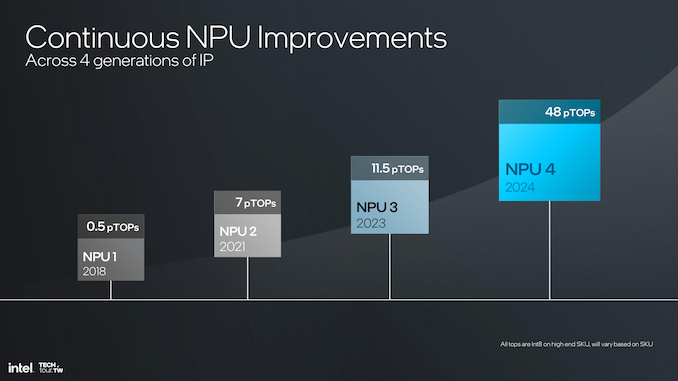

Perhaps Intel's main focal point, from a marketing point of view, is the latest generational change to its Neural Processing Unit, or NPU. Intel has made significant advances with its latest NPU, aptly called NPU 4. Although AMD disclosed a faster NPU during its Computex keynote, Intel claims up to 48 TOPS of peak AI performance. Compared with the previous model, NPU 3, NPU 4 is a large step forward in both performance and power efficiency. Intel attributes the gains to higher frequencies, an improved power architecture, and a higher number of engines.

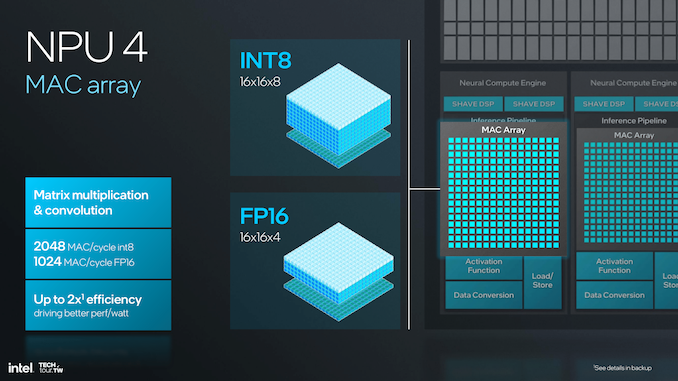

NPU 4 builds on these changes with an enhanced vector architecture, more compute tiles, and more efficient matrix computation. The result is a large increase in neural processing throughput, which matters for applications that demand high-speed data processing and real-time inference. The architecture supports INT8 and FP16 precisions, with a maximum of 2048 MAC (multiply-accumulate) operations per cycle for INT8 and 1024 MAC operations per cycle for FP16.
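As a rough sanity check on how a per-cycle MAC count relates to a peak TOPS figure, the sketch below applies the usual convention that one MAC counts as two operations (a multiply and an add). The engine count and clock frequency used in the example are assumptions rather than figures stated above, as is the reading that the 2048 MAC/cycle figure applies to each neural compute engine.

```python
def peak_tops(macs_per_cycle: int, engines: int, clock_ghz: float) -> float:
    """Peak tera-operations per second, counting each MAC as two operations."""
    ops_per_cycle = 2 * macs_per_cycle * engines
    return ops_per_cycle * clock_ghz * 1e9 / 1e12


# Hypothetical configuration: 6 engines at 1.95 GHz (assumed, not from the article).
int8_tops = peak_tops(macs_per_cycle=2048, engines=6, clock_ghz=1.95)
fp16_tops = peak_tops(macs_per_cycle=1024, engines=6, clock_ghz=1.95)
print(f"INT8: {int8_tops:.1f} TOPS, FP16: {fp16_tops:.1f} TOPS")
```

Under those assumed values the INT8 result lands just under the quoted 48 TOPS peak, and the FP16 figure is half of that because the MAC rate halves.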

A closer look at the architecture reveals more layering in NPU 4. Each neural compute engine has its own inference pipeline, comprising MAC arrays and dedicated DSPs for different types of computation. The pipeline is built for many parallel operations, improving both performance and efficiency. The new SHAVE DSP delivers four times the vector compute power of the previous generation, enabling more complex neural networks to be processed.

Two notable changes in NPU 4 are higher clock speeds and a move to a new process node, which together double performance at the same power level as NPU 3. Peak performance quadruples, making NPU 4 well suited to demanding AI applications. The new MAC array also features on-chip data conversion, which allows on-the-fly datatype conversion, fused operations, and output data laid out to keep data flowing with minimal latency.
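To make "fused operations" and "on-the-fly datatype conversion" more concrete, here is a minimal sketch of the general technique: an INT8 dot product accumulated at higher precision, with the activation and the conversion back to INT8 applied in the same pass instead of as separate steps. This is a generic illustration, not a description of Intel's actual pipeline; the function name, the ReLU activation, and the `scale` parameter are all hypothetical.

```python
def fused_int8_mac(activations, weights, scale, out_min=-128, out_max=127):
    """INT8 dot product with a fused ReLU and requantization back to INT8."""
    if len(activations) != len(weights):
        raise ValueError("activations and weights must have the same length")

    acc = 0  # stands in for a wide (e.g. 32-bit) hardware accumulator
    for a, w in zip(activations, weights):
        acc += a * w  # one multiply-accumulate per element pair

    acc = max(acc, 0)                       # fused activation (ReLU)
    quantized = round(acc * scale)          # datatype conversion on the fly
    return max(out_min, min(out_max, quantized))  # saturate to the INT8 range


result = fused_int8_mac([1, 2, 3], [4, 5, 6], scale=0.5)
```

Doing the activation and conversion while the accumulator value is still in hand avoids writing a wide intermediate result to memory and reading it back, which is the usual motivation for fusing these steps.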

The bandwidth improvements in NPU 4 are essential for handling bigger models and data sets, especially in transformer-based language model applications. The architecture supports higher data flow, reducing bottlenecks and keeping it running smoothly under heavy load. The DMA (Direct Memory Access) engine of NPU 4 doubles the DMA bandwidth, which improves network performance and helps with heavy neural network models. Additional functions, including embedding tokenization, are also supported, expanding what NPU 4 can do.

Matrix multiplication and convolution see the largest gains: the MAC array can process up to 2048 MAC operations in a single cycle for INT8 and 1024 for FP16. This lets the NPU process much more complex neural network calculations at higher speed and lower power. The vector register file has also changed; in NPU 4 it is 512 bits wide, so more vector operations can be completed in one clock cycle, which further improves computational efficiency.
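The effect of a 512-bit register width can be illustrated with simple arithmetic: the number of elements a single register holds is the register width divided by the element width. The helper below is a hypothetical illustration and assumes elements are packed with no padding.

```python
def lanes_per_register(register_bits: int, element_bits: int) -> int:
    """Number of elements that fit in one vector register, assuming tight packing."""
    if register_bits % element_bits != 0:
        raise ValueError("register width must be a multiple of the element width")
    return register_bits // element_bits


# Lane counts for a 512-bit register at the two precisions named above.
int8_lanes = lanes_per_register(512, 8)
fp16_lanes = lanes_per_register(512, 16)
print(f"INT8 lanes: {int8_lanes}, FP16 lanes: {fp16_lanes}")
```

A wider register means each vector instruction touches more elements, which is why register width is tied to vector operations per clock.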

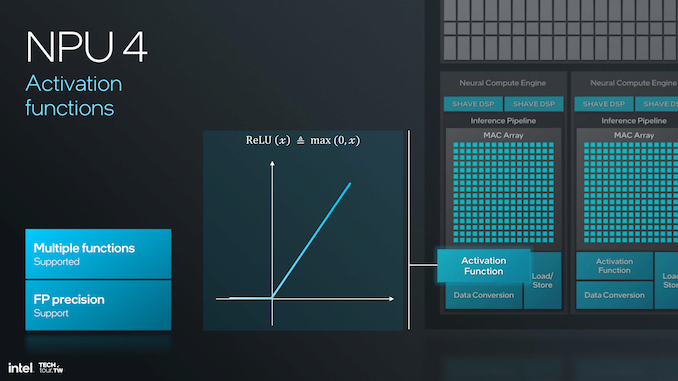

NPU 4 supports a wider variety of activation functions, covering a broader range of neural networks, along with a choice of precision for floating-point calculations, which should make computations more precise and reliable. The improved activation functions and optimized inference pipeline should let it run more complicated and nuanced neural network models with better speed and accuracy.

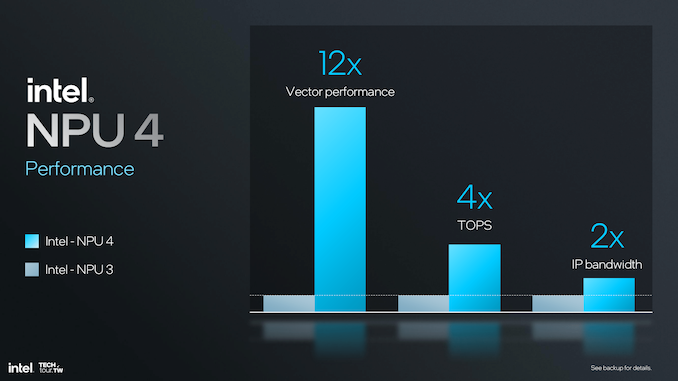

The upgraded SHAVE DSP in NPU 4 has four times the vector compute power of the one in NPU 3, which contributes to a 12x overall increase in vector performance. This should be most useful for transformer and large language model (LLM) workloads, making them more responsive and energy efficient. The larger vector register file allows more vector operations per clock cycle, which significantly boosts the compute capability of NPU 4.

Overall, NPU 4 is a big performance jump over NPU 3, with 12 times the vector performance, four times the TOPS, and two times the IP bandwidth. These improvements make NPU 4 a high-performing and efficient fit for current AI and machine learning applications where performance and latency are critical. Together with the data conversion and bandwidth improvements, these architectural changes position NPU 4 to handle very demanding AI workloads.
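The headline multipliers can be cross-checked against each other with a little arithmetic. The sketch below uses only figures quoted in this article (48 peak TOPS, 4x TOPS, 12x vector performance, 4x per SHAVE DSP); the helper names are hypothetical, and because the quoted figures are "up to" values the derived numbers are approximate.

```python
NPU4_PEAK_TOPS = 48        # "up to" figure claimed by Intel
TOPS_GAIN = 4              # NPU 4 vs. NPU 3
VECTOR_GAIN_TOTAL = 12     # overall vector performance gain
VECTOR_GAIN_PER_DSP = 4    # gain attributed to each SHAVE DSP


def implied_baseline(new_value: float, gain: float) -> float:
    """Previous-generation value implied by a new value and a gain factor."""
    return new_value / gain


def residual_gain(total_gain: float, known_gain: float) -> float:
    """Gain factor left over after removing one known contributor."""
    return total_gain / known_gain


npu3_tops = implied_baseline(NPU4_PEAK_TOPS, TOPS_GAIN)
other_vector_gain = residual_gain(VECTOR_GAIN_TOTAL, VECTOR_GAIN_PER_DSP)
print(f"Implied NPU 3 peak: ~{npu3_tops:.0f} TOPS")
print(f"Vector gain not explained by the per-DSP uplift: {other_vector_gain:.0f}x")
```

The leftover vector factor would have to come from something other than the per-DSP uplift, such as more DSPs or higher clocks; the article does not break that down, so treat it as an inference.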

91 Comments

mode_13h - Thursday, June 6, 2024

The way I see it, the only defense Intel has for comparing Skymont to the LP Crestmont cores is to defend their decision not to include a separate LP version of Skymont in Lunar Lake. In fact, I'll bet what happened is that someone internally made this pitch and the marketing goon who produced the public-facing slides for Lunar Lake opted to reuse that data, since it made Skymont look even better (it's already quite impressive)!

kwohlt - Tuesday, June 4, 2024

The MTL E cores shared a ringbus with the P cores. The LNL E cores are completely separated from the P cores and function much more similarly to current LP-E cores.

name99 - Wednesday, June 5, 2024

So how much faster are they than the MTL E-cores (as opposed to the LP-E cores)? Sure, it's nice that the dumbness of MTL is fixed, but the question is the one I'm interested in.

mode_13h - Tuesday, June 4, 2024

So is L0D just a new name for what they previously called L1D? The two seem virtually identical, at least in terms of the information they disclosed.

mode_13h - Tuesday, June 4, 2024

The thing they're *now* calling L1D is what seems to be the new part.

Dante Verizon - Tuesday, June 4, 2024

AMD will be swimming ahead, how pathetic.

lmcd - Monday, June 17, 2024

AMD doesn't build a package that competes with this product. If Intel delivers with Xe2 (and there's no reason to believe they will, to be clear), this product would win the entire handheld gaming category for the generation in about 30 seconds flat. Lunar Lake wouldn't actually be impossible to stuff into a phablet-style phone, though it obviously wouldn't be easy.

kkilobyte - Tuesday, June 4, 2024

what about the i9-14900KS test redo with Intel Default settings? You told us 20 days ago that you'd redo them:

Gavin Bonshor - Friday, May 10, 2024

Don't worry; I will be testing Intel Default settings, too. I'm testing over the weekend and adding them in.

So, will this promise ever be fulfilled?

kn00tcn - Tuesday, June 4, 2024

are you confirming that the chart images have not changed or were you waiting for an announcement?

kkilobyte - Tuesday, June 4, 2024

Unless I'm mistaken, the charts don't seem to have changed, and include only a single set of data (without the Intel Default Settings). The text doesn't suggest they were, though I didn't read the whole article again, I admit.