ATI's New High End and Mid Range: Radeon X1950 XTX & X1900 XT 256MB

by Derek Wilson on August 23, 2006 9:52 AM EST. Posted in GPUs

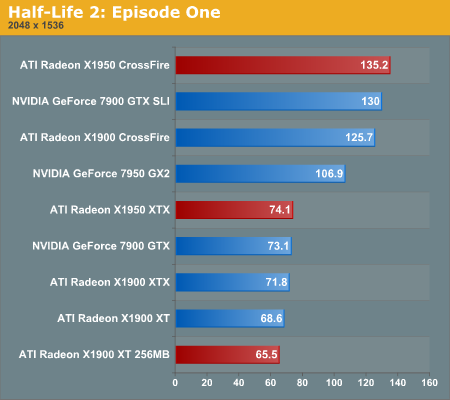

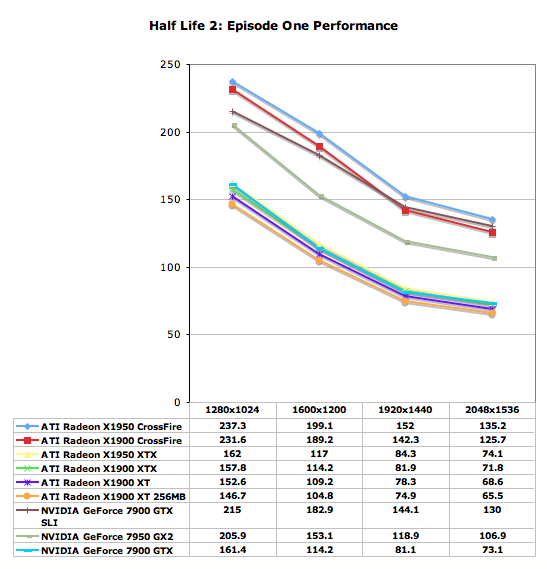

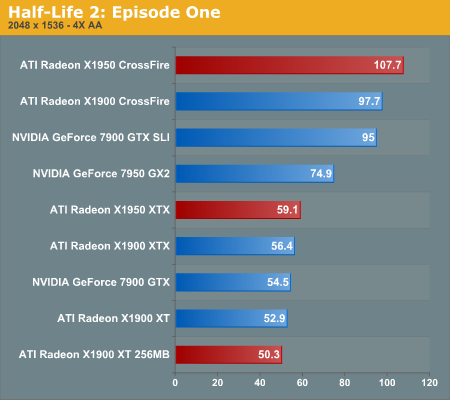

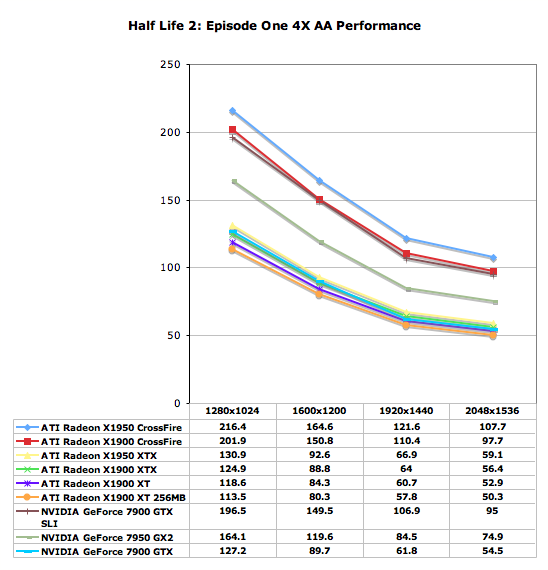

Half-Life 2: Episode One Performance

Episode One of the new Half-Life 2 series makes use of recent Source engine updates to include Valve's HDR technology. While some developers have shipped HDR implementations that don't allow antialiasing (even on ATI cards), Valve put a high value on building an HDR implementation that everyone can use with whatever settings they want. Consistency of experience is usually not important enough to developers who care about pushing the bleeding edge of technology, so we are very happy to see Valve going down this path.

We use the built-in timedemo feature to benchmark the game. Our timedemo consists of a protracted rocket launcher fight and features plenty of debris and pyrotechnics. The Source engine timedemo feature is more like the nettimedemo of id Software's Doom 3 engine, in that it plays back more than just the graphics. In fact, Valve includes some fairly intensive diagnostic tools that will reveal almost everything about every object in a scene. We haven't found a good use for this in the context of reviewing computer hardware, but our options are always open.

The highest visual quality settings possible were used, including the "reflect all" setting, which is not enabled by default. Antialiasing was left disabled for this test, and anisotropic filtering was set at 8x. While the Source engine is notorious for giving great framerates on almost any hardware setup, we find the game isn't as enjoyable if it isn't running at 30 fps or better. This is very attainable on most cards even at the highest resolution we tested, and thus our target framerate is a little higher in this game than in others.

Most of the solutions scale the same in Half-Life 2: Episode One, with the possible exception of the 7900 GTX SLI setup hitting a bit of an NVIDIA driver-inspired CPU limitation at 1280x1024. We can't really complain, as scoring over 200 fps is an accomplishment in itself. With scores like these across the board, there's no reason not to run with AA enabled.

With even the slowest tested solution offering over 50 FPS at 2048x1536 4xAA, gamers playing HL2 variants can run with any of the high-end GPU solutions without problem. ATI does manage to claim a ~10% performance victory with the X1950 CrossFire over the 7900 GTX SLI, so if the pattern holds in future episodes ATI will be a slightly faster solution. The X1900 CrossFire configuration was also slightly faster than the SLI setup, though for all practical purposes that matchup is a tie.
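For readers who want to reproduce this kind of comparison from their own benchmark runs, the relative lead quoted above is simply the framerate difference expressed as a percentage of the slower setup's framerate. The snippet below is a minimal sketch of that arithmetic; the function name `percent_lead` and the framerates passed to it are placeholders chosen for illustration, not our measured results.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """Return how much faster setup A is than setup B, as a percentage of B."""
    if fps_b <= 0:
        raise ValueError("baseline framerate must be positive")
    return (fps_a - fps_b) * 100.0 / fps_b


# Placeholder framerates for illustration only; these are NOT measured results.
crossfire_fps = 110.0
sli_fps = 100.0

lead = percent_lead(crossfire_fps, sli_fps)
print(f"Setup A leads setup B by {lead:.1f}%")
```

A negative result means the first setup is slower than the baseline, and results within a few percent of zero are best treated as a tie, as with the X1900 CrossFire and SLI matchup.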

74 Comments

JarredWalton - Wednesday, August 23, 2006 - link

We used factory overclocked 7900 GT cards that are widely available. These are basically guaranteed overclocks for about $20 more. There are no factory overclocked ATI cards around, but realistically don't expect overclocking to get more than 5% more performance on ATI hardware.

The X1900 XTX is clocked at 650 MHz, which isn't much higher than the 625 MHz of the XT cards. Given that ATI just released a lower power card but kept the clock speed at 650 MHz, it's pretty clear that their GPUs are close to topped out. The RAM might have a bit more headroom, but memory bandwidth already appears to be less of a concern, as the X1950 isn't tremendously faster than the X1900.

yyrkoon - Wednesday, August 23, 2006 - link

I think it's obvious why ATI is selling their cards for less now, and that reason is a lot of 'tech savvy' users are waiting for Direct3D 10 to be released, and want to buy a capable card. This is probably to try and entice some people into buying technology that will be 'obsolete' when Direct3D 10 is released.

Supposedly Vista will ship with DirectX 9L and DirectX 10 (Direct3D 10), but I've also read to the contrary, and that Direct3D 10 won't be released until after Vista ships (sometime). Personally, I couldn't think of a better time to buy hardware, but a lot of people think that waiting, and just paying through the nose for a video card later, is going to save them money. *shrug*

Broken - Wednesday, August 23, 2006 - link

In this review, the test bed was an Intel D975XBX (LGA-775). I thought this was an ATI Crossfire only board and could not run two Nvidia cards in SLI. Are there hacked drivers that allow this, and if so, is there any penalty? Also, I see that this board is dual 8x pci-e and not dual 16x... at high resolutions, could this be a limiting factor, or is that not for another year?

DerekWilson - Wednesday, August 23, 2006 - link

Sorry about the confusion there. We actually used an nForce4 Intel x16 board for the NVIDIA SLI tests. Unfortunately, it is still not possible to run SLI on an Intel motherboard. Our test section has been updated with the appropriate information.

Thanks for pointing this out.

Derek Wilson

ElFenix - Wednesday, August 23, 2006 - link

as we all should know by now, Nvidia's default driver quality setting is lower than ATi's, and makes a significant difference in the framerate when you use the driver settings to match the quality settings. your "The Test" page does not indicate that you changed the driver quality settings to match.

DerekWilson - Wednesday, August 23, 2006 - link

Drivers were run with default quality settings.

Default driver settings between ATI and NVIDIA are generally comparable from an image quality standpoint unless shimmering or banding is noticed due to trilinear/anisotropic optimizations. None of the games we tested displayed any such issues during our testing.

At the same time, during our Quad SLI followup we would like to include a series of tests run at the highest possible quality settings for both ATI and NVIDIA -- which would put ATI ahead of NVIDIA in terms of anisotropic filtering or in Chuck patch cases, and NVIDIA ahead of ATI in terms of adaptive/transparency AA (which is actually degraded by their gamma correction).

If you have any suggestions on different settings to compare, we are more than willing to run some tests and see what happens.

Thanks,

Derek Wilson

ElFenix - Wednesday, August 23, 2006 - link

could you run each card with the quality slider turned all the way up, please? i believe that is the default setting for ATi, and the 'High Quality' setting for nvidia. someone correct me if i'm wrong.

thanks!

michael

yyrkoon - Wednesday, August 23, 2006 - link

I think as long as all settings from both offerings are as close as possible per benchmark, there is no real gripe.

Although, some people seem to think it necessary to run AA at high resolutions (1600x1200 +), but I'm not one of them. It's very hard for me to notice jaggies even at 1440x900, especially when concentrating on the game, instead of standing still, and looking with a magnifying glass for jaggies . . .

mostlyprudent - Wednesday, August 23, 2006 - link

When are we going to see a good number of Core 2 Duo motherboards that support Crossfire? The fact that AT is using an Intel made board rather than a "true enthusiast" board says something about the current state of Core 2 Duo motherboards.

DerekWilson - Wednesday, August 23, 2006 - link

Intel's boards are actually very good. The only reason we haven't been using them in our tests (aside from a lack of SLI support) is that we have not been recommending Intel processors for the past couple years. Core 2 Duo makes Intel CPUs worth having, and you definitely won't go wrong with a good Intel motherboard.