The 64 Core Threadripper 3990X CPU Review: In The Midst Of Chaos, AMD Seeks Opportunity

by Dr. Ian Cutress & Gavin Bonshor on February 7, 2020 9:00 AM EST

AMD 3990X Against Prosumer CPUs

The first group of buyers interested in this processor will be those looking to upgrade to the best consumer/prosumer HEDT package on the market. The $3990 price is a high barrier to entry, but these users can likely amortize the cost of the processor over its lifetime. To that end, we’ve selected a number of standard HEDT processors that are close in price or core count, and added the 8-core 5.0 GHz Core i9-9900KS and the 28-core unlocked Xeon W-3175X.

AMD 3990X Consumer Competition

| AnandTech | AMD 3990X | AMD 3970X | Intel 3175X | Intel i9-10980XE | AMD 3950X | Intel 9900KS |
|---|---|---|---|---|---|---|
| SEP | $3990 | $1999 | $2999 | $979 | $749 | $513 |
| Cores/Threads | 64/128 | 32/64 | 28/56 | 18/36 | 16/32 | 8/16 |
| Base Freq (MHz) | 2900 | 3700 | 3100 | 3000 | 3500 | 4000 |
| Turbo Freq (MHz) | 4300 | 4500 | 4300 | 4800 | 4700 | 5000 |
| PCIe | 4.0 x64 | 4.0 x64 | 3.0 x48 | 3.0 x48 | 4.0 x24 | 3.0 x16 |
| DDR | 4x 3200 | 4x 3200 | 6x 2666 | 4x 2933 | 2x 3200 | 2x 2666 |
| Max DDR | 512 GB | 512 GB | 512 GB | 256 GB | 128 GB | 128 GB |
| TDP | 280 W | 280 W | 255 W | 165 W | 105 W | 127 W |

At $3990, the 3990X is priced beyond anything else at this level, and even in the most expensive consumer systems, $1000 could be the difference between fitting two or three GPUs. The 3990X costs nearly $2000 more than the 32-core 3970X, so there have to be big upsides in moving from 32 cores to 64.
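One way to frame that question is cost per core. The short Python sketch below uses only the SEP prices and core counts from the table above; the helper names are our own, for illustration. It shows that the 3990X and the 3970X land at almost exactly the same price per core (about $62), which means the 3990X has to be close to twice as fast as the 3970X just to match it on performance per dollar.

```python
# Launch prices (SEP, USD) and core counts, copied from the table above.
CPUS = {
    "AMD 3990X": (3990, 64),
    "AMD 3970X": (1999, 32),
    "Intel 3175X": (2999, 28),
    "Intel i9-10980XE": (979, 18),
    "AMD 3950X": (749, 16),
    "Intel 9900KS": (513, 8),
}


def price_per_core(price: int, cores: int) -> float:
    """Dollars paid per physical core."""
    return price / cores


def breakeven_speedup(price_new: int, price_old: int) -> float:
    """Speed-up the pricier CPU needs just to match the cheaper one on performance per dollar."""
    return price_new / price_old


if __name__ == "__main__":
    for name, (price, cores) in CPUS.items():
        print(f"{name:18s} ${price_per_core(price, cores):7.2f} per core")
    print(f"3990X vs 3970X break-even speed-up: {breakeven_speedup(3990, 1999):.2f}x")
```

Price per core ignores clock speed, memory channels and platform cost, so it is only a first-order filter, but it explains why the benchmarks below are judged against a roughly 2x bar.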

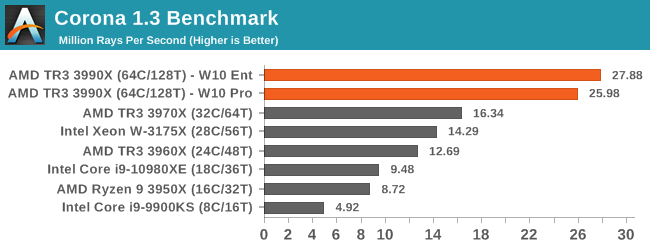

Corona is a classic 'more threads means more performance' benchmark, and while the 3990X doesn't quite achieve perfect scaling over the 32-core 3970X, it comes close.

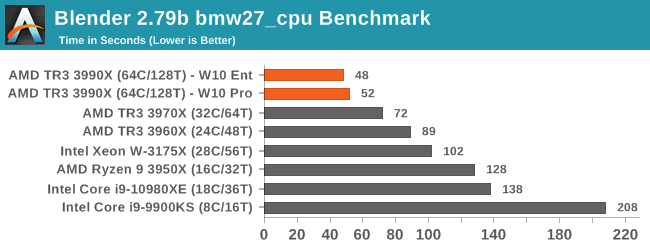

The 3990X scores new records in our Blender test, with sizeable speed-ups against the other TR3 hardware.

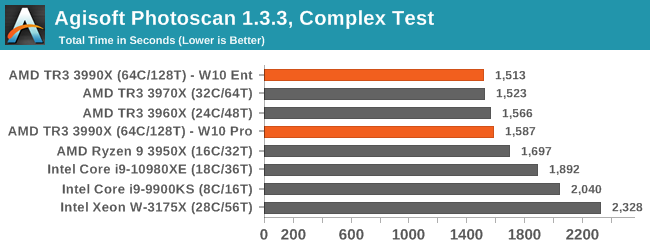

Photoscan is a variably threaded test, and the AMD CPUs still win here, although everything from 24 to 64 cores finishes within about a minute of each other in this roughly 20-minute test. Intel's best consumer hardware is a few minutes behind.

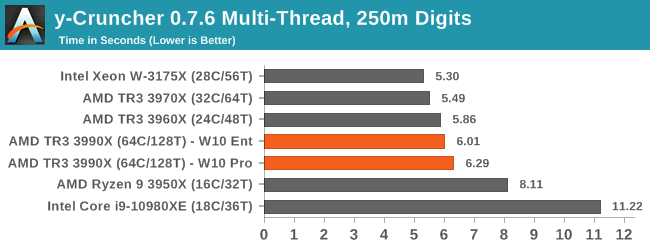

y-cruncher is an AVX-512 accelerated test, so Intel's 28-core with AVX-512 wins here. Interestingly, the 128 threads of the 3990X seem to get in the way; the time spent spawning so many threads is likely adding to the overall time.

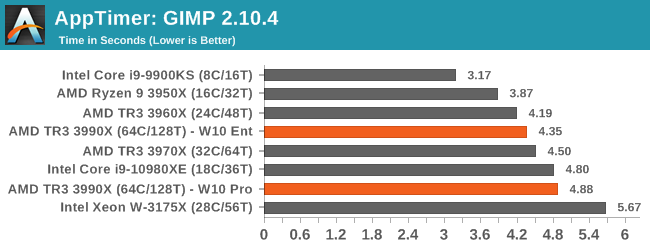

GIMP is a single-threaded test built around opening the program, and Intel's 5.0 GHz chip is the best here. The 64-core hardware isn't that bad, although the W10 Enterprise data gives the better result.

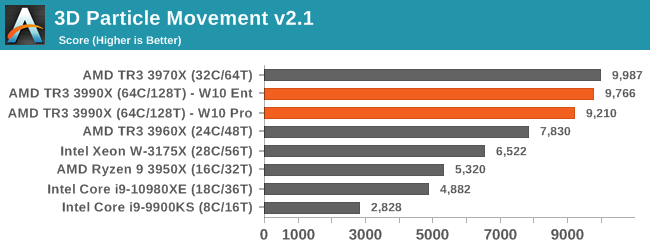

In 3DPM without any hand-tuned code, the 64-core 3990X actually shows a slight deficit compared with the 32-core part.

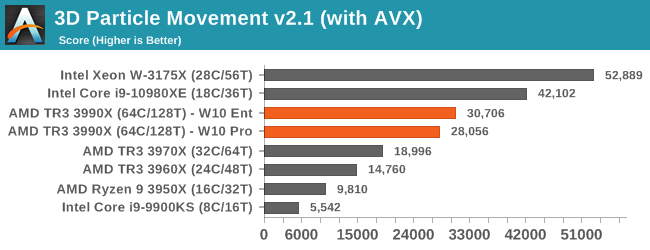

But when we switch to the hand-tuned code, the AVX-512 CPUs storm ahead by a considerable margin.

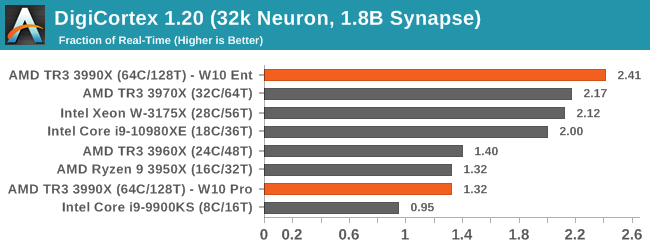

We covered DigiCortex on the last page, but it seems that the way W10 Pro splits threads into processor groups is holding the 3990X back a lot. With SMT disabled, we score nearer 3x here.
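For readers unfamiliar with processor groups: on Windows, each group holds at most 64 logical processors, addressed through a 64-bit affinity mask, so the 3990X's 128 threads appear as two groups, and software that is not group-aware typically stays within a single group. The Python sketch below models only that bookkeeping. It assumes, as a simplification, that logical processors are split as evenly as possible across the fewest groups; the function names are hypothetical and it does not call any Windows API.

```python
from __future__ import annotations

MAX_PER_GROUP = 64  # one bit per logical processor in a 64-bit affinity mask


def group_sizes(logical_cpus: int) -> list[int]:
    """Simplified model: fewest groups of <= 64, with logical CPUs spread evenly."""
    if logical_cpus <= 0:
        raise ValueError("need at least one logical CPU")
    groups = -(-logical_cpus // MAX_PER_GROUP)  # ceiling division
    base, extra = divmod(logical_cpus, groups)
    return [base + (1 if i < extra else 0) for i in range(groups)]


def locate(cpu: int, sizes: list[int]) -> tuple[int, int]:
    """Map a global logical CPU index to (group number, bit position in that group's mask)."""
    for group, size in enumerate(sizes):
        if cpu < size:
            return group, cpu
        cpu -= size
    raise ValueError("CPU index out of range")


def mask_for(bits: list[int]) -> int:
    """Build a 64-bit affinity mask from bit positions within a single group."""
    mask = 0
    for bit in bits:
        if not 0 <= bit < MAX_PER_GROUP:
            raise ValueError("bit position does not fit in a 64-bit mask")
        mask |= 1 << bit
    return mask


if __name__ == "__main__":
    sizes = group_sizes(128)  # 3990X with SMT enabled
    print(sizes, locate(100, sizes))
```

With SMT disabled, the 64 remaining logical processors fit in a single group, which is consistent with the SMT-off results behaving so differently in this test.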

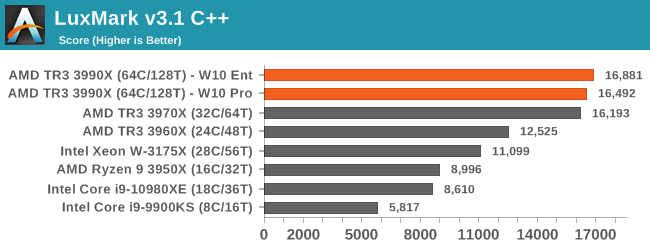

LuxMark is an AVX2 accelerated program, and having more cores helps here, but we see little gain going from 32 cores to 64.

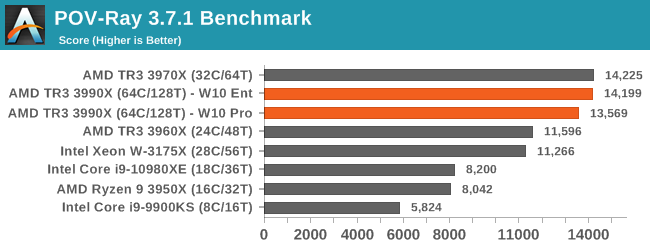

As we saw on the last page, POV-Ray prefers SMT to be disabled on the 3990X; otherwise there's no benefit over the 32-core CPU.

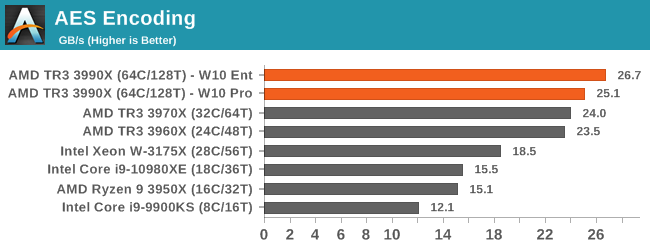

AES gets a slight bump over the 32-core part, but nothing like what the 2x price difference would suggest.

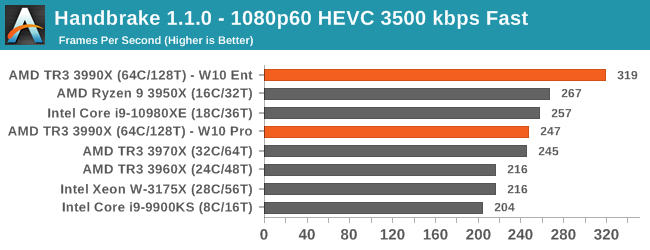

As we saw on the previous page, W10 Enterprise gives our Handbrake result a big boost, but on W10 Pro the 3990X falls behind the 3950X.

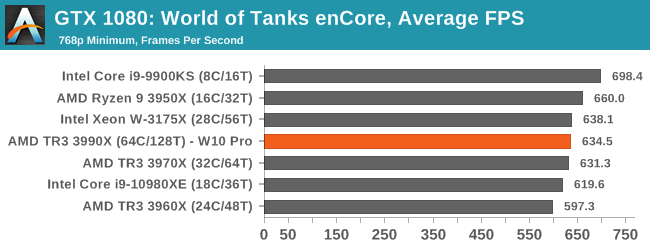

And how about a simple game test? We know 64 cores is overkill for games, so here is a CPU-bound test. There's not much between the 3990X and the 3970X, but Intel's high-frequency CPUs are the best here.

Verdict

There are a lot of situations where the jump from AMD's 32-core $1999 CPU, the 3970X, up to the 64-core $3990 CPU gives only the smallest tangible gain. That doesn't bode well. The benchmarks that do gain the most can get near-perfect scaling, making the 3990X a fantastic upgrade for them; however, those tests are few and far between. If these were the options, the smart money is on the 3970X, unless you can be absolutely clear that the software you run can benefit from the extra cores.
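One way to see why a few tests scale almost perfectly while most barely move is Amdahl's law. This is our framing, not something measured in this review: if a fraction p of a workload is perfectly parallel, the speed-up on n cores is 1 / ((1 - p) + p/n). The Python sketch below applies it to the 32-core to 64-core step; the parallel fractions are hypothetical, and the model ignores the 3990X's lower clocks, memory bandwidth and OS scheduling effects.

```python
def amdahl_speedup(p: float, n: int) -> float:
    """Amdahl's law: speed-up on n cores vs 1 core when fraction p of the work is parallel."""
    if not 0.0 <= p <= 1.0 or n < 1:
        raise ValueError("p must be in [0, 1] and n >= 1")
    return 1.0 / ((1.0 - p) + p / n)


def gain_32_to_64(p: float) -> float:
    """Relative speed-up from 32 to 64 cores, ignoring clocks, memory and scheduling."""
    return amdahl_speedup(p, 64) / amdahl_speedup(p, 32)


if __name__ == "__main__":
    for p in (1.0, 0.999, 0.99, 0.95):  # hypothetical parallel fractions
        print(f"p = {p:<6} 32C -> 64C gain: {gain_32_to_64(p):.2f}x")
```

Even a workload that is 99% parallel gains only about 1.6x from doubling 32 cores to 64, and at 95% the gain drops to roughly 1.23x. Since the 3990X costs about twice as much as the 3970X, only workloads very close to p = 1 approach the break-even point.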

279 Comments


GreenReaper - Saturday, February 8, 2020

64 sockets, 64 cores, 64 threads per CPU - x64 was never intended to surmount these limits. Heck, affinity groups were only introduced in Windows XP and Server 2003. Unfortunately, they hardcoded the 64-CPU limit by using a DWORD, and had to add Processor Groups as a hack in Win7/2008 R2 for the sake of a stable kernel API.

Linux's sched_setaffinity() had the foresight to use a length parameter and a pointer: https://www.linuxjournal.com/article/6799

I compile my kernels to support a specific number of CPUs, as there are costs to supporting more, albeit relatively small ones (it assumes that you might hot-add them).

Gonemad - Friday, February 7, 2020

Seeing a $4k processor clubbing a $20k processor to death and taking its lunch (in more than one metric) is priceless. If you know what you need, you can save 15 to 16 grand building an AMD machine, and that's incredible.

It shows how greedy and lazy Intel has become.

It may not be the best chip for, say, a gaming machine, but it can beat a 20-grand Intel setup, and that guarantees the chip a place; it's far from useless.

Khenglish - Friday, February 7, 2020

I doubt anyone would really want to do this in practice, but in Windows 10, if you disable the GPU driver, games and benchmarks will be fully software rendered on the CPU. I'm curious how this 64-core beast performs as a GPU!

Hulk - Friday, February 7, 2020

Not very well. Modern GPUs have thousands of specialized processors.

Kevin G - Friday, February 7, 2020

The shaders themselves are remarkably programmable. In terms of capability, the only thing really missing compared with more traditional CPUs is how they handle interrupts for I/O. Otherwise they'd be functionally complete. Granted, the per-thread performance would be abysmal compared to modern CPUs, which are fully pipelined, OoO monsters. One other difference is that since GPU tasks are embarrassingly parallel by nature, these shaders have hardware thread management to quickly switch between threads and partition resources to achieve some fairly high utilization rates. The real specialization is in the fixed-function units for their TMUs and ROPs.

willis936 - Friday, February 7, 2020

Will they really? I don’t think graphics APIs fall back on software rendering for most essential features.

hansmuff - Friday, February 7, 2020

That is incorrect. Windows never falls back to software rendering just because you don't have rendering hardware. Games no longer come with software renderers like they did many, many moons ago.

Khenglish - Friday, February 7, 2020

I love how everyone had to jump in and say I was wrong without spending 30 seconds to disable their GPU driver, try it themselves, and find that they are wrong.

There are a lot of issues with the Win10 software renderer (full-screen mode is mostly broken, and only DX11 seems to be supported), but it does work. My Ivy Bridge gets fully loaded at 70W+ just to pull off 7 fps at 640x480 in Unigine Heaven, but this is something you can do.

extide - Friday, February 7, 2020

No -- the Windows UI will drop back to software mode, but games have not included software renderers for ~two decades.

FunBunny2 - Friday, February 7, 2020

" games have not included software renderers for ~two decades."

Which is a deja vu experience: in the beginning, DOS was a nice, benign control program. Then Lotus discovered that the only way to run 1-2-3 faster than molasses uphill in winter was to fiddle with the hardware directly, which DOS was happy to let it do. It didn't take long for the evil folks to discover that they could too, and the virus was born. One has to wonder: how much exposure does this latest GPU hardware present?